Integrating AI into Business: Tools and Best Practices

Strategic Alignment and Vision for AI

Strategic alignment starts with translating broad business goals into AI-enabled outcomes that can be measured, funded, and governed. For most organizations, the first priority is to define a limited set of high-impact use cases that align with the company’s core value proposition, competitive differentiators, and customer expectations. This requires executive sponsorship, a clear mandate, and a portfolio view that balances short-term wins with longer-term capability building. Leaders should articulate success in terms of revenue, cost reduction, risk mitigation, or customer experience, and establish thresholds for go/no-go decisions that reflect risk tolerance and operational feasibility. Without a concrete direction, AI efforts drift into experimentation without scale.

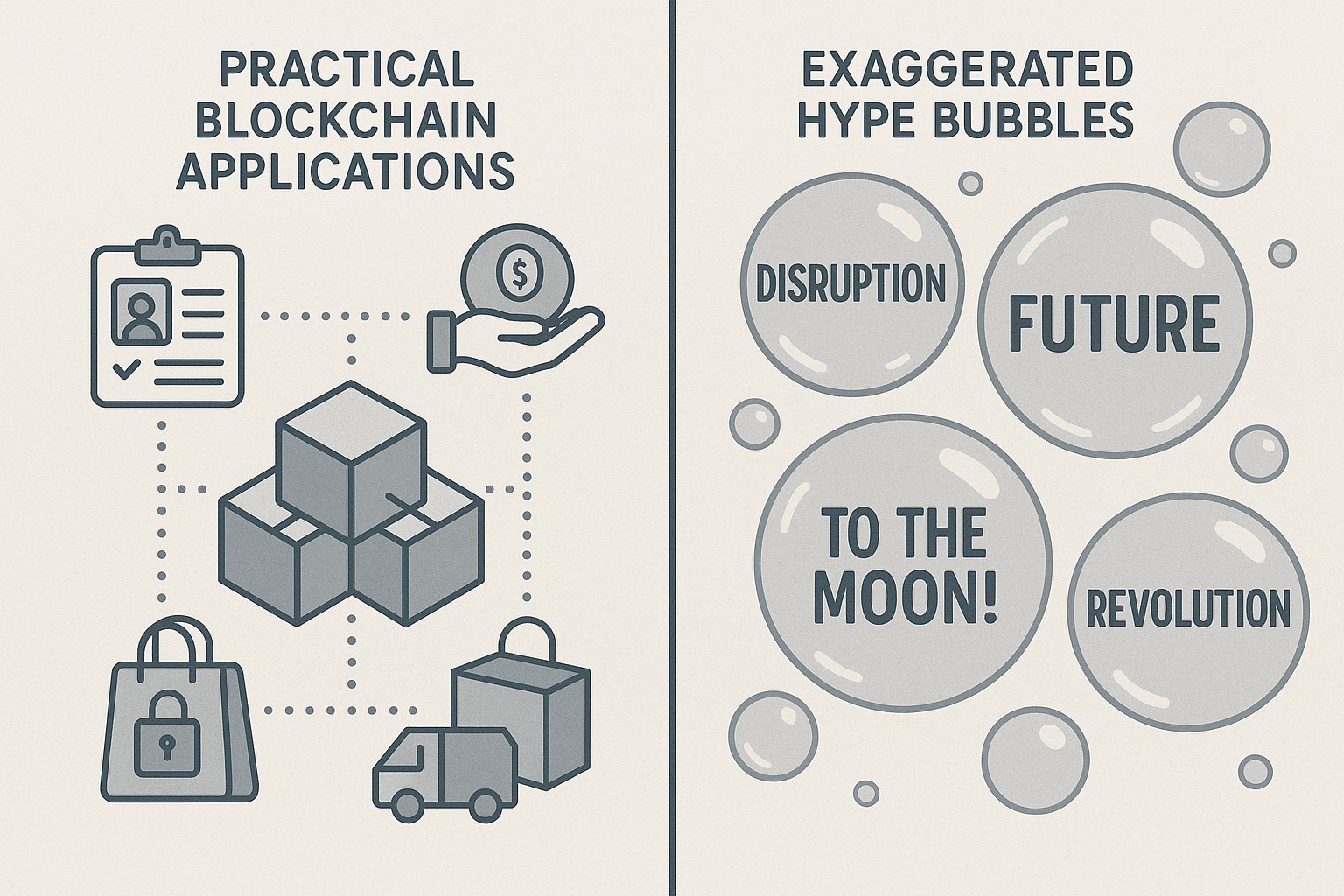

A disciplined approach to selecting use cases helps avoid projects that promise novelty but deliver limited business value. Establish criteria around data availability, model feasibility, operational impact, and governance requirements before committing resources. Build a lightweight scoring framework that weighs each candidate by value, effort, risk, and scalability, and maintain a living backlog that adapts as data ecosystems evolve. In parallel, define an organization model that names accountable owners, cross-functional teams, and a cadence for reviews, ensuring that AI initiatives are not siloed within IT or data science groups alone. This structure creates clarity for teams and signals to the market that AI is part of a broader strategy, not a one-off experiment.
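A lightweight scoring framework of the kind described above could be sketched as follows. The weights, the 1-to-5 rating scale, and the example use cases are illustrative assumptions, not recommended values; each organization would calibrate its own.

```python
from dataclasses import dataclass

# Hypothetical weights (assumption): they sum to 1.0 and favor business value.
WEIGHTS = {"value": 0.4, "effort": 0.2, "risk": 0.2, "scalability": 0.2}


@dataclass
class UseCase:
    name: str
    value: int        # 1 (low) to 5 (high); higher is better
    effort: int       # 1 (low) to 5 (high); lower is better
    risk: int         # 1 (low) to 5 (high); lower is better
    scalability: int  # 1 (low) to 5 (high); higher is better


def score(uc):
    """Weighted score on a 1-5 scale; effort and risk are inverted so that lower ratings score higher."""
    return (
        WEIGHTS["value"] * uc.value
        + WEIGHTS["effort"] * (6 - uc.effort)
        + WEIGHTS["risk"] * (6 - uc.risk)
        + WEIGHTS["scalability"] * uc.scalability
    )


def rank(backlog):
    """Return the backlog sorted from highest to lowest score."""
    return sorted(backlog, key=score, reverse=True)


# Made-up candidates for illustration only.
backlog = [
    UseCase("churn prediction", value=5, effort=4, risk=3, scalability=3),
    UseCase("invoice triage", value=4, effort=2, risk=2, scalability=4),
    UseCase("chat summarizer", value=2, effort=1, risk=1, scalability=2),
]

for uc in rank(backlog):
    print(f"{uc.name}: {score(uc):.2f}")
```

Because the backlog is a plain list, re-scoring it as data availability or risk ratings change is a matter of updating the ratings and calling `rank` again.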

With clarity on direction and governance, organizations can craft an implementation roadmap that links development cycles to business calendars. Adopt an approach that blends experimentation with disciplined rollout, using pilots to validate assumptions and establish indicators for success. Schedule regular stakeholder reviews, align funding with milestones, and embed AI initiatives into planning processes, product roadmaps, and customer journeys. The outcome should be a repeatable framework that scales impact while maintaining control over risk, privacy, and quality, ensuring that every AI initiative contributes to strategic objectives and measurable business results.

Data Readiness, Architecture, and Tooling

Data readiness is the foundation of any credible AI program. Without trusted data, models will underperform, predictions will be unreliable, and regulatory exposure can increase. Start by mapping data assets across the enterprise, establishing data ownership, and implementing a defensible data architecture that supports discoverability, lineage, and privacy. Prioritize data quality through automated checks for missing values, inconsistencies, and drift, and design processes for data enrichment, versioning, and secure access. A robust data governance model should balance speed with control, enabling teams to collaborate while protecting sensitive information and maintaining auditability for regulators and customers. In practice, this means a living catalog of data sources, documented data flows, and clear responsibilities for data stewards and custodians.
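As one way to picture the automated checks mentioned above, the sketch below computes missing-value rates and counts values that fall outside an agreed set. The field names, the allowed values, and the sample rows are hypothetical; a production setup would more likely run such checks inside a data pipeline or a dedicated validation tool.

```python
def quality_report(rows, required, allowed):
    """Return per-field missing-value rates and counts of values outside the allowed sets.

    rows:     list of dicts, one per record
    required: field names that must be present and non-empty
    allowed:  mapping of field name -> set of accepted values
    """
    total = len(rows)
    missing = {field: 0 for field in required}
    invalid = {field: 0 for field in allowed}
    for row in rows:
        for field in required:
            if row.get(field) in (None, ""):
                missing[field] += 1
        for field, accepted in allowed.items():
            value = row.get(field)
            # Empty values are counted as missing, not as invalid.
            if value not in (None, "") and value not in accepted:
                invalid[field] += 1
    return {
        "missing_rate": {f: (n / total if total else 0.0) for f, n in missing.items()},
        "invalid_count": invalid,
    }


# Made-up sample records (assumption).
rows = [
    {"customer_id": "c1", "country": "DE", "plan": "pro"},
    {"customer_id": "c2", "country": "", "plan": "basic"},
    {"customer_id": None, "country": "FR", "plan": "Pro"},  # inconsistent casing
    {"customer_id": "c4", "country": "US", "plan": "basic"},
]

report = quality_report(
    rows,
    required=["customer_id", "country"],
    allowed={"plan": {"basic", "pro"}},
)
print(report)
```

Tracking these numbers over time, rather than checking them once, is what turns them into the data quality metrics a steward can be held accountable for.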

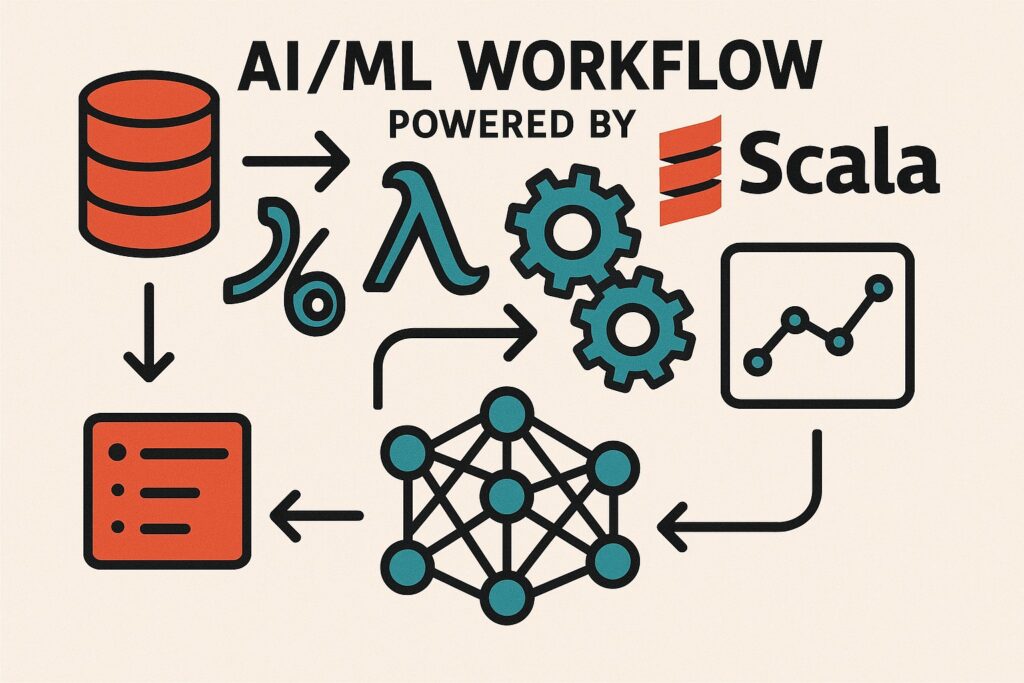

Architectural decisions determine how AI moves from prototype to production. Consider a modular stack that includes data ingestion pipelines, a centralized data store, feature stores for consistent serving, and a deployment layer that supports continuous integration and delivery of models. Emphasize MLOps practices to manage experimentation, reproducibility, monitoring, and rollback. Define clear service interfaces, secure APIs, and observable telemetry so that stakeholders can understand model behavior and intervene when needed. When evaluating tooling, weigh considerations such as interoperability, scalability, vendor risk, and the ability to meet regulatory requirements for privacy and explainability. A well-designed architecture reduces handoffs, accelerates deployment, and creates a foundation for scalable AI across domains.

While technology choices matter, people and processes matter even more. Choose a pragmatic mix of commercial platforms and open-source components that fit your organization’s risk posture and culture. Create a standards catalog for model development, data handling, and deployment, and establish a vendor management plan that includes due diligence, contract terms, and exit provisions. In this context, governance is not a bureaucratic burden but a mechanism that speeds execution by reducing ambiguity and rework.

- Data catalog and lineage established

- Data quality metrics defined and tracked

- Access controls and privacy compliance in place

- Data versioning and reproducibility mechanisms

AI Safety, Governance, and Risk Management

AI safety and governance address the social, ethical, and operational dimensions of intelligent systems. As organizations scale, unintended consequences such as biased recommendations, opaque decision making, or sensitive data leakage become more likely. Proactive governance includes defining guardrails, setting risk appetites, and building in human-in-the-loop oversight for high-stakes decisions. Establish bias testing, scenario analyses, and decision traceability to support accountability. A mature program recognizes that governance is ongoing work, not a one-time checklist, and that it requires continuing education, documentation, and auditing. Align governance with regulatory expectations and industry standards to maintain trust among customers and partners.
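Bias testing can take many forms. As a minimal sketch, the example below compares the share of positive decisions across groups, a measure often called the demographic parity difference. The group labels, the sample decisions, and the 0.2 review threshold are assumptions for illustration, and this single metric is not a complete fairness assessment.

```python
from collections import defaultdict

# Hypothetical review threshold (assumption); set according to your own risk appetite.
MAX_PARITY_GAP = 0.2


def selection_rates(records):
    """Share of positive decisions per group.

    records: iterable of (group, decision) pairs, where decision is 0 or 1.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, decision in records:
        totals[group] += 1
        positives[group] += decision
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(records):
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(records)
    return max(rates.values()) - min(rates.values())


# Made-up model decisions for two groups (assumption).
decisions = [
    ("A", 1), ("A", 1), ("A", 1), ("A", 0),
    ("B", 1), ("B", 0), ("B", 0), ("B", 0),
]

gap = parity_gap(decisions)
needs_review = gap > MAX_PARITY_GAP
print(selection_rates(decisions), gap, needs_review)
```

A gap above the threshold does not prove the model is unfair; it is a signal to route the case to the human oversight described above.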

Risk management extends beyond data and models to processes, people, and partnerships. Implement threat modeling for AI systems, secure development practices, and incident response playbooks that cover data breach, model tampering, and degraded performance. Require explainability for critical applications, maintain audit trails for data and model lineage, and require periodic reviews of third-party components and data sources. Integrate privacy by design and data minimization, so that systems collect only what is necessary and preserve user trust. This holistic view helps organizations respond quickly to incidents and demonstrate responsible AI stewardship.

Implementation Roadmap, Change Management, and Adoption

Implementing AI at scale demands a pragmatic, phased roadmap that minimizes disruption while delivering measurable value. Start with small, well-scoped pilots that address concrete business questions, then translate lessons into repeatable playbooks that can be applied to additional domains. Build cross-functional squads that include product, analytics, engineering, legal, and security professionals, and establish a cadence of reviews that aligns with governance requirements. Emphasize modularity and interoperability so that new models can be integrated without reworking existing systems. A clear rollout plan should specify success criteria, budgets, and risk controls for each phase. This approach reduces risk while delivering incremental value that stakeholders can see and trust.

Change management is as important as technical capability. Communicate a compelling rationale for AI initiatives and articulate how they affect roles, workflows, and incentives. Invest in training that covers not only model usage but also data literacy, privacy, and ethical considerations. Establish updated policies for model monitoring, incident escalation, and continuous learning, and include the outcomes of AI initiatives in performance management cycles. A governance framework that supports experimentation while safeguarding customers and operations speeds adoption and reduces resistance. By involving people early and giving them a path to impact, organizations increase the likelihood of sustained adoption and a culture of continuous improvement.

Alongside people and process, governance and supplier relationships play a critical role in sustaining AI momentum. Develop a vendor risk program that assesses data handling, security posture, and long-term support; implement service level expectations for reliability and explainability; and maintain clear exit provisions to prevent vendor lock-in. Finally, plan for long-term maintenance, including retraining schedules, data drift detection, and the allocation of budget for ongoing monitoring and improvement.
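Data drift detection can be approached in several ways. One common option is the Population Stability Index (PSI), which compares how a feature is distributed at training time with how it is distributed in production. The sketch below is a plain-Python version under stated assumptions: equal-width bins taken from the baseline range, a small floor to avoid dividing by zero, and made-up sample data.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline sample and a recent sample.

    Bins are equal-width over the baseline range; values outside that range
    are clamped into the first or last bin. Empty bins are floored at eps.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Made-up feature values (assumption): the recent sample is shifted upward.
baseline = [float(v) for v in range(100)]
recent = [v + 30.0 for v in baseline]

print(f"PSI vs. itself: {psi(baseline, baseline):.3f}")
print(f"PSI vs. shifted sample: {psi(baseline, recent):.3f}")
```

A frequently quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but these cutoffs are conventions, not guarantees, and a retraining trigger should be tuned to the use case.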

- Phase 1: Discover and Prioritize

- Phase 2: Build and Validate

- Phase 3: Scale and Govern

Measuring Success and Driving Continuous Improvement

Measuring success in AI initiatives requires a balanced scorecard that links financial impact to customer outcomes and operations. Establish a baseline before you deploy, and decide on the metrics that matter most—such as throughput, accuracy, precision, recall, and lift in business metrics. Create a dashboard that is accessible to executives and line managers, and implement a cadence for weekly or monthly reviews that inform decision-makers about progress, bottlenecks, and opportunities for adaptation. Ensure that data quality and governance metrics are tracked alongside model performance to provide a holistic view of AI health and reliability. A transparent measurement framework helps translate model performance into tangible business value and informs future investment decisions.
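To make the model-level metrics named above concrete, the following sketch computes accuracy, precision, and recall from binary labels, plus relative lift of a business metric against its baseline. The labels and conversion rates are invented for illustration.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, and recall for binary labels (1 = positive, 0 = negative)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        # Guard against division by zero when nothing is predicted or labeled positive.
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


def lift(observed_rate, baseline_rate):
    """Relative improvement of a business metric over its pre-deployment baseline."""
    return (observed_rate - baseline_rate) / baseline_rate


# Made-up labels and rates (assumption).
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 0, 1, 0, 1, 0, 1, 0]

metrics = classification_metrics(y_true, y_pred)
conversion_lift = lift(observed_rate=0.06, baseline_rate=0.05)
print(metrics, f"lift={conversion_lift:.0%}")
```

Reporting the model metrics next to the business lift on the same dashboard helps reviewers see whether a more accurate model is actually moving the outcome that matters.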

Finally, cultivate a culture of continuous improvement by capturing lessons learned from each cycle and adjusting strategies accordingly. Use retrospective analyses, post-implementation reviews, and ongoing experimentation to refine models, processes, and governance. Align incentives with long-term value creation rather than short-term throughput, and ensure that the organization remains nimble enough to respond to shifting customer needs, competitive pressures, and regulatory changes. By embedding feedback loops into planning and execution, organizations sustain momentum and unlock ongoing value from their AI investments.

FAQ: What are the first steps to start AI integration in a mid-market company?

Begin with executive sponsorship and a cross-functional steering group that includes business leaders, IT, data science, and legal. Inventory candidate processes, assess data readiness, and select a small number of high-impact use cases with clear success criteria. Develop a lightweight pilot plan that demonstrates value quickly, define governance and risk controls, and communicate early wins to build support. Establish a simple measurement framework to track impact, learn from early iterations, and scale successful pilots into broader programs.

FAQ: How do we measure ROI and success for AI projects?

ROI should combine financial metrics (cost savings, revenue uplift) with non-financial indicators (customer satisfaction, cycle time, quality). Establish baselines and track changes against them, using control groups where feasible. Include total cost of ownership for data, infrastructure, and people, and account for ongoing maintenance. Regularly review results, adjust scope, and ensure governance processes are in place to sustain long-term value and manage risk across the lifecycle.
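The financial side of this calculation can be sketched as below, assuming a simple definition of ROI as net benefit divided by total cost of ownership and a benefit estimated from a control group. All figures and cost categories are hypothetical placeholders.

```python
def incremental_benefit(treated_avg, control_avg, treated_units):
    """Benefit attributable to the AI initiative, using a control group as the baseline."""
    return (treated_avg - control_avg) * treated_units


def roi(benefit, costs):
    """Return on investment as (benefit - TCO) / TCO, where TCO sums all cost lines."""
    tco = sum(costs.values())
    return (benefit - tco) / tco


# Hypothetical figures (assumption): average revenue per customer with and without the model.
benefit = incremental_benefit(treated_avg=120.0, control_avg=100.0, treated_units=1000)

# Hypothetical total cost of ownership, including ongoing maintenance.
costs = {"data": 4000.0, "infrastructure": 3000.0, "people": 8000.0, "maintenance": 1000.0}

print(f"benefit={benefit:.0f}, ROI={roi(benefit, costs):.0%}")
```

Non-financial indicators such as customer satisfaction or cycle time do not fit this formula and are better tracked alongside it than forced into a single number.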

FAQ: What governance practices should we implement when integrating AI?

Implement an AI governance board with representation from business units, IT, risk, privacy, and compliance. Create model risk management practices, data stewardship roles, and clear policy guidelines for explainability, monitoring, and incident response. Maintain audit trails for data and model lineage, conduct regular third-party risk assessments, and define escalation paths for any ethical or regulatory concerns. Ensure training and documentation are up to date and that governance evolves with new AI capabilities and domains.

FAQ: What are common pitfalls to avoid during AI integration?

Avoid launching without a strategy or clear ownership, which leads to scope creep and misaligned investments. Do not neglect data quality and governance, as poor data undermines model performance and trust. Do not underestimate change management, training, and stakeholder engagement; neglecting them can stall adoption. Be wary of relying too heavily on a single vendor or technology, of ignoring bias and privacy considerations, and of failing to plan for scaling beyond pilots. Finally, neglecting ongoing monitoring and maintenance can cause initial AI gains to regress over time.