AI Regulation 2026: EU AI Act & Global Policy Updates

Overview and market impact

The EU AI Act, together with related policy announcements and guidelines, signals a fundamental shift in how AI technologies are developed, sold, and deployed across markets. For businesses operating in or serving customers within the European Union, the Act creates a common baseline for risk assessment, transparency, and accountability that extends beyond traditional software compliance. In practice, this means that many AI-enabled products and services will require formal risk categorization, documentation, and, in some cases, third-party assessments before they can be marketed or deployed. For technology companies and the broader tech ecosystem, the regulatory environment is becoming as consequential as the technical design choices themselves, shaping product roadmaps, vendor selection, and governance programs. As the Act aligns with global policy momentum, organizations should prepare for a dynamic regime where regulatory expectations evolve with the technology’s capabilities and observed societal impact.

Beyond the EU, international policy initiatives are converging on similar themes—risk management, data governance, transparency, and human oversight. This convergence creates opportunities for cross-border collaboration and harmonized standards, but it also raises the bar for interoperability and due diligence. Enterprises that plan global AI deployments must consider the EU framework as a reference point for regional compliance while remaining alert to country-specific nuances, enforcement approaches, and sector-specific rules. In short, the 2026 horizon is not simply about ticking regulatory boxes; it is about embedding responsible AI practices into product design, vendor management, and operational decision making to sustain trust, reduce risk, and maintain competitive advantage in a complex policy landscape.

Scope and key definitions

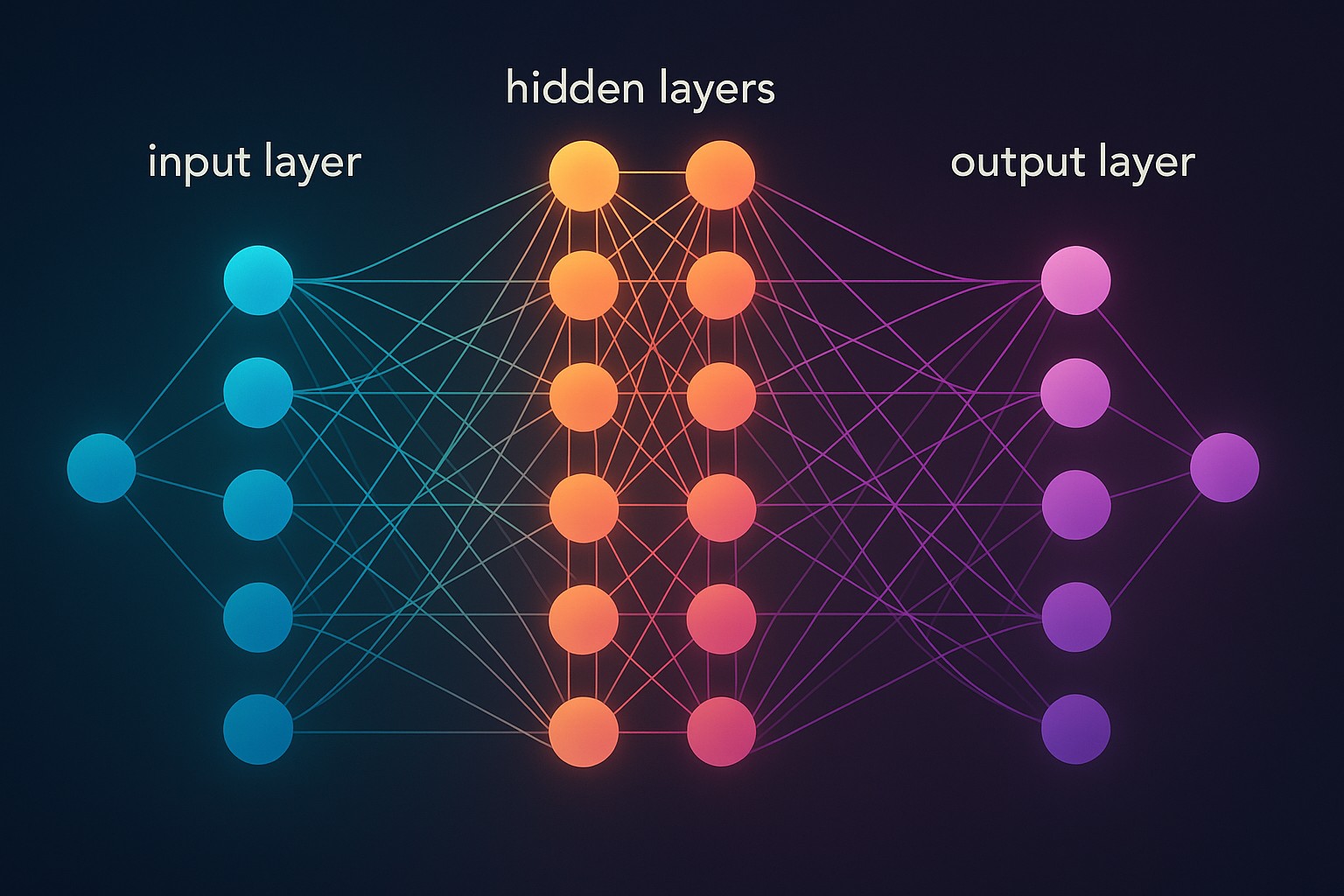

The EU AI Act adopts a risk-based approach that defines which AI systems fall under regulation and the roles of various actors in the supply chain. The scope is intentionally broad, capturing not only standalone AI products but also many AI-enabled services and updates that affect end users. For companies serving enterprises and consumers, understanding the scope is critical to identifying responsibilities across development, deployment, procurement, and governance. This section outlines core terms and how they translate into practical obligations for organizations operating in the EU and beyond.

- AI System: A software or hardware-software combination that generates outputs, decisions, or recommendations based on statistical patterns, machine learning, or rule-based logic, including updates or iterations that materially alter behavior.

- Provider: The entity that develops an AI system, or has one developed, and places it on the EU market or puts it into service under its own name or trademark. Importers and distributors are treated as separate roles with their own obligations.

- User (termed "deployer" in the Act's final text): The natural or legal person who uses an AI system under its own authority in a professional capacity, including organizations implementing AI within their operations or offering it to customers.

- High-Risk AI: Systems assessed to pose substantial risks to health, safety, fundamental rights, or the environment, such as those used in critical infrastructure, education, recruitment, or essential public services.

- Conformity Assessment: The process by which an AI system demonstrates compliance with the Act’s requirements through documentation, testing, and, in some cases, independent evaluation.

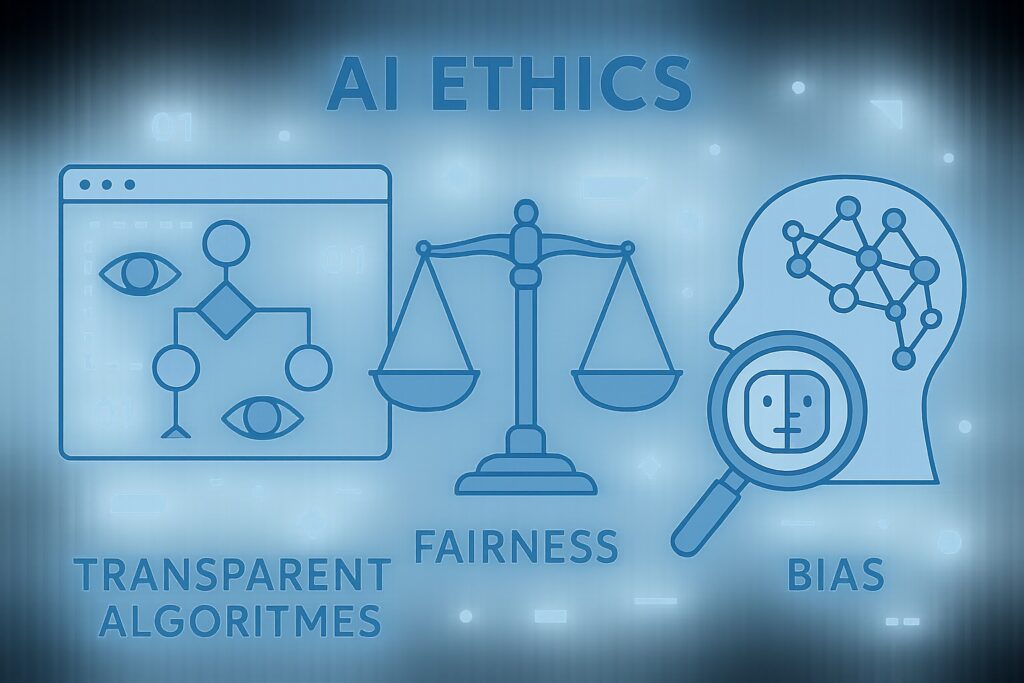

- Data Governance and Training Data: Standards for data quality, provenance, bias management, data minimization, and security used to train, validate, and test AI systems.

- Post-Market Monitoring: Ongoing oversight after deployment, including performance tracking, incident reporting, and accountability measures for responsible AI use.

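- Exclusions: Certain activities fall outside the Act's scope, including AI developed and used solely for scientific research and development, systems used exclusively for military, defence, or national security purposes, and purely personal, non-professional use. Other tools may be subject to separate guidance.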

EU AI Act: core obligations for providers and users

The Act places distinct but complementary responsibilities on providers and users of AI systems. Providers must implement robust governance, maintain documentation, and facilitate oversight across the lifecycle of the AI system. Users—ranging from enterprise buyers to internal teams deploying AI—must integrate risk controls, monitor performance, and ensure appropriate use-case alignment. Across both groups, the emphasis is on transparency, accountability, and human oversight where required by risk level. The following steps outline the practical pathway to compliance for most organizations preparing for the EU market in 2026 and beyond.

- Determine risk category: Assess the intended use, context, and potential harm to identify whether the AI system is designated as high-risk or falls into a lower-risk category with lighter obligations.

- Implement governance and data management: Establish data quality controls, bias mitigation processes, documentation of training data sources, and clear data handling policies aligned with the AI lifecycle.

- Provide technical documentation and risk management: Create and maintain risk assessments, model documentation, and traceability records that describe performance, limitations, and safeguards.

- Conformity assessment and labeling: Where applicable, engage in conformity assessments and, when required, affix appropriate labeling or disclosures to inform users about the AI system’s capabilities and constraints.

- Post-market monitoring and incident reporting: Implement monitoring mechanisms, procedures for incident reporting, and corrective actions to address deviations or harm discovered after deployment.
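The five steps above can be sketched as a first-pass triage helper. This is a minimal illustration, not a legal classification: the `AISystem` type, the `triage` and `checklist` functions, and the step labels are hypothetical names, the high-risk domains are only the examples named earlier in this article, and the rule that lower-risk systems keep just the first step is a simplifying assumption.

```python
from dataclasses import dataclass

# Example high-risk domains taken from this article's definitions.
# Illustrative only: the Act's own annexes are the authoritative list.
HIGH_RISK_DOMAINS = {
    "critical infrastructure",
    "education",
    "recruitment",
    "essential public services",
}

# Checklist mirroring the five steps listed above (labels are hypothetical).
ALL_STEPS = [
    "determine risk category",
    "governance and data management",
    "technical documentation and risk management",
    "conformity assessment and labeling",
    "post-market monitoring and incident reporting",
]


@dataclass(frozen=True)
class AISystem:
    name: str
    domain: str  # intended-use domain as free text


def triage(system: AISystem) -> str:
    """Return a first-pass category based on the intended-use domain."""
    domain = system.domain.strip().lower()
    return "high-risk" if domain in HIGH_RISK_DOMAINS else "lower-risk"


def checklist(system: AISystem) -> list[str]:
    """High-risk systems get every step.

    Assumption for this sketch: lower-risk systems only record the
    category determination; real obligations need legal review.
    """
    if triage(system) == "high-risk":
        return list(ALL_STEPS)
    return ALL_STEPS[:1]


if __name__ == "__main__":
    screener = AISystem(name="cv-screener", domain="Recruitment")
    print(triage(screener))
    for step in checklist(screener):
        print(" -", step)
```

A helper like this is only useful as an intake filter: it flags which products need a full assessment first, and the recorded outcome becomes the starting entry in the organization's risk documentation.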

EU regulatory timeline and implementation milestones

The Act entered into force on 1 August 2024 and applies in stages: most provisions, including the bulk of the high-risk requirements, apply from 2 August 2026, while some obligations start earlier and others carry longer transitional periods. For many organizations, the first priority is mapping the Act’s categories to their product lines, followed by aligning internal governance with the expected documentation, testing, and reporting requirements. The timeline gives providers preparatory periods to establish governance, ensure data resilience, and complete any required third-party assessments before product launch or continued distribution within the EU market. Given the pace of digital innovation, proactive readiness, such as market data collection, risk registries, and governance roadmaps, will help organizations avoid delays or compliance bottlenecks when enforcement intensifies. As practice evolves, firms should stay aligned with national guidance, sectoral rules, and updates to EU-level guidance that refine how categories apply to emerging AI capabilities.

In parallel, several compliance programs in multinational environments are already aligning with the Act by adopting common documentation templates, cross-border data controls, and standardized risk assessments. This harmonization supports faster go-to-market decisions while preserving the flexibility needed to adapt to country-specific interpretations and enforcement priorities. For readers targeting the EU market, the recommended operational posture is to treat compliance as an ongoing capability—embedded in product management, engineering sprints, and vendor governance—rather than a one-off regulatory checkpoint. The practical effect is better risk visibility, streamlined audits, and more predictable deployment cycles across EU member states.
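For planning purposes, the staged timeline discussed in this section can be kept as a small lookup table. The sketch below is illustrative: the dates are the application dates as published in Regulation (EU) 2024/1689, the labels are paraphrased, and the function names are hypothetical. Later amendments could shift these dates, so they should be checked against the current official text.

```python
from datetime import date
from typing import Optional

# Application dates as published in Regulation (EU) 2024/1689.
# Labels are paraphrased summaries, not legal text.
MILESTONES = [
    (date(2024, 8, 1), "Act enters into force"),
    (date(2025, 2, 2), "prohibited practices and AI literacy provisions apply"),
    (date(2025, 8, 2), "general-purpose AI model obligations apply"),
    (date(2026, 8, 2), "general application, including most high-risk requirements"),
    (date(2027, 8, 2), "high-risk rules for AI in regulated products apply"),
]


def in_effect(on: date) -> list[str]:
    """Return the milestones that have already started on the given date."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date) -> Optional[tuple[date, str]]:
    """Return the first milestone strictly after the given date, if any."""
    for start, label in MILESTONES:
        if start > on:
            return start, label
    return None


if __name__ == "__main__":
    today = date.today()
    print("In effect:", in_effect(today))
    print("Next:", next_milestone(today))
```

Keeping the dates in one structure makes it easy to attach them to roadmap items and to update a single table if the schedule changes.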

Global policy updates and cross-border considerations

Beyond the EU, global policy initiatives are shaping how organizations design, deploy, and govern AI systems. While each jurisdiction implements its own rules, there is growing emphasis on risk management, data governance, transparency, and human oversight that facilitates responsible AI. For multinational teams, these developments create a framework for consistent governance while respecting local regulatory requirements. Key issues include how to balance innovation with safety, how to document decisions and outcomes, and how to establish accountability across dispersed teams and supply chains. The following policy landscape highlights the most influential international trends for global AI programs and procurement strategies.

- OECD AI Principles and updates: Core guidelines for trustworthy AI, with ongoing revisions to reflect practical governance challenges such as data quality and explainability.

- US National AI Initiative and sectoral rulemaking: A coordinated federal approach to research, procurement, and safety standards that intersects with EU expectations in areas like transparency and risk management.

- United Kingdom AI Strategy and sector-specific guidance: A post-Brexit framework emphasizing safety, fairness, and economic competitiveness and detailing how organizations should implement governance practices.

- China’s governance rules and data security policies: A robust regulatory environment focusing on data localization, security reviews, and controllability of AI-enabled technologies.

- G7/G20 safety standards and codes of conduct: International forums promoting best practices, interoperability, and cross-border cooperation on AI risk management and ethical use.

- NIST AI RMF and global adoption: Widely referenced risk management framework that informs how organizations structure compliance programs and conduct assessments in diverse markets.

Sector-specific considerations for technology vendors and customers

Technology vendors face a dual challenge: delivering innovative AI capabilities while meeting a growing set of regulatory expectations. For procurement teams and engineering leaders, this means integrating regulatory considerations into product roadmaps, vendor assessments, and risk controls. Practical steps include mapping each AI product to its risk category, documenting intended use, and ensuring that governance processes cover data provenance, model updates, and incident response. As regulatory scrutiny intensifies, clear communication with customers about capabilities, limitations, and safeguards becomes a competitive differentiator rather than a compliance burden. In addition, privacy, security, and ethics concerns must be woven into the design and testing lifecycle to avoid retrospective remediation and potential reputational risk.

From a cross-border perspective, global organizations should establish a unified AI governance framework that accommodates diverse regulatory requirements while maintaining a consistent standard for risk management. This includes harmonizing data handling practices, adopting shared documentation templates, and implementing vendor screening programs that reflect both EU requirements and regional rules. For teams serving enterprise customers, transparent disclosure about risk categories, model performance, and governance controls can reduce contract negotiation friction and support faster adoption cycles in regulated industries such as finance, healthcare, and critical infrastructure.

Implementation challenges and best practices

Regulatory compliance is not a standalone project; it is an ongoing discipline embedded in product development, operations, and governance. Common challenges include data quality gaps, bias mitigation at scale, and the complexity of documenting model behavior in a regulatory-friendly format. Organizations that invest in modular governance architecture—where risk assessments, data lineage, and testing results are traceable and reusable—tend to achieve smoother audits and faster iteration cycles. Practical best practices emphasize cross-functional collaboration, early risk identification, and a culture of continuous improvement that aligns with both business objectives and regulatory expectations. Ultimately, responsible AI governance helps preserve trust with customers, partners, and regulators while unlocking responsible innovation.

To operationalize these ideas, companies should implement starter programs that scale: create a risk register aligned to AI use cases, establish data stewardship roles, and integrate post-market surveillance with product analytics. Documentation should be living rather than static, updated with model updates, retraining events, and observed performance changes. Training and awareness programs for engineering, legal, and product teams are essential to ensure consistent interpretation of risk categories and regulatory requirements. By weaving governance into the development lifecycle, organizations can reduce fragmentation and turn compliance from a burden into a strategic advantage.
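A risk register with living documentation, as described above, can start as a very small data structure. The following is a minimal sketch under stated assumptions: the `RegisterEntry` fields, the `record` method, the `stale` helper, and the 90-day review window are all hypothetical choices for illustration, not requirements drawn from the Act.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class RegisterEntry:
    use_case: str
    risk_category: str
    data_steward: str          # named owner for data quality questions
    last_reviewed: date
    # Each history item: (date, event type, free-text note)
    history: list[tuple[date, str, str]] = field(default_factory=list)

    def record(self, when: date, event: str, note: str) -> None:
        """Log a change (model update, retraining, incident) and mark the entry reviewed."""
        self.history.append((when, event, note))
        self.last_reviewed = when


def stale(entries: list[RegisterEntry], today: date, max_age_days: int = 90) -> list[str]:
    """Return use cases whose last review is older than the window (assumed 90 days)."""
    return [e.use_case for e in entries if (today - e.last_reviewed).days > max_age_days]


if __name__ == "__main__":
    register = [
        RegisterEntry("resume screening", "high-risk", "data-team", date(2025, 1, 10)),
        RegisterEntry("support chatbot", "lower-risk", "cx-team", date(2025, 5, 1)),
    ]
    register[0].record(date(2025, 6, 1), "retraining", "refreshed training set")
    print(stale(register, today=date(2025, 9, 15)))
```

Because every model update or retraining event is appended to `history`, the register doubles as an audit trail, and the `stale` check gives product teams a simple signal for when documentation has fallen behind the system it describes.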

Future outlook and policy evolution

Looking ahead, the regulatory landscape for AI is likely to become more granular, with sector-specific guidance, updated risk criteria, and enhanced enforcement mechanisms. As AI systems become more capable and embedded in critical decisions, regulators may narrow definitions, shorten reporting timelines, and require more robust third-party evaluations for a broader set of use cases. For businesses, this means staying alert to regulatory guidance, accelerating data governance maturity, and investing in scalable, auditable processes that can adapt to evolving rules. The 2026 deadline is a milestone, not a final destination; sustained investment in governance, transparency, and responsible innovation will be essential to navigate ongoing policy evolution and to maintain market access across multiple jurisdictions.

FAQ

When does the EU AI Act take effect and what does the 2026 timing mean for my business?

The EU AI Act entered into force on 1 August 2024, and most of its provisions apply from 2 August 2026, with transitional timelines that vary by risk category and use case. For many organizations, this means completing risk assessments, establishing governance structures, and preparing testing and documentation well in advance. The period up to 2026 is a window to align product design, data practices, and supplier relationships with the Act’s requirements, so that deployments in the EU can proceed with minimal disruption once the enforcement phase begins.

How does the EU Act classify high-risk AI systems and why does that matter for implementation?

High-risk AI systems are those that pose substantial risks to health, safety, or fundamental rights in critical domains such as employment, education, public services, and essential infrastructure. Classification determines the stringency of obligations, including documentation, conformity evaluation, data governance, and post-market monitoring. Understanding whether a given AI solution falls into a high-risk category shapes the required controls, testing standards, and the level of regulatory oversight an organization must commit to before and after deployment.

What should global companies do to align with EU rules and other international initiatives?

Global organizations should build a unified AI governance framework that maps EU requirements to internal policies while maintaining flexibility for country-specific rules. This includes establishing robust data provenance, clear model documentation, risk management processes, and a mechanism for ongoing monitoring and incident reporting. By leveraging international standards such as the NIST AI RMF and OECD principles, companies can achieve consistent governance across regions and reduce the cost of compliance through reusable controls and centralized oversight.

How do international frameworks like OECD principles and NIST RMF relate to EU regulation?

International frameworks provide practical guidance that complements EU requirements by offering repeatable methods for risk assessment, governance, and testing. While EU law is binding within its jurisdiction, adopting frameworks like OECD principles and NIST RMF helps organizations implement defensible processes that are broadly respected by regulators and customers. Aligning with these frameworks can also streamline cross-border audits and assist with vendor due diligence, creating a common language for responsible AI across markets.

What are common pitfalls for AI vendors, and how can they be avoided?

Common pitfalls include incomplete data governance, insufficient documentation of model behavior and risk management, and underestimating the importance of post-market monitoring. Vendors should avoid cramming compliance into a final sprint; instead, they should embed governance throughout the development lifecycle, maintain traceable data lineage, and establish clear incident response processes. Proactively engaging with regulators, customers, and independent evaluators can also reduce the risk of delays or disputes during market introduction and ongoing operations.