Code Review Best Practices for Quality Code

Scope and objectives of a code review

Code reviews operate as a collaborative quality gate that sits between writing code and integrating it into a shared codebase. Their purpose is not to punish contributors, but to raise the overall quality of the software, reduce defect density, and improve long‑term maintainability. In practice, a well‑run review accelerates delivery by catching issues early and sharing domain knowledge across the team. For this reason, scope and objectives should be defined up front: what problems the change is intended to solve, what risks are acceptable in this cycle, and what acceptance criteria will determine success. This framing helps reviewers focus on outcomes rather than personal preferences and aligns the effort with business goals, security requirements, and performance targets. A healthy review culture treats mistakes as learning opportunities and emphasizes constructive collaboration rather than criticism.

In most teams, the immediate objective of a code review is to improve correctness, readability, and maintainability without blocking delivery. The scope can be lightweight for small changes or more formal for architectural decisions, security‑sensitive work, or changes that touch critical modules. In either case, the review should consider both the short‑term impact on the current release and the long‑term implications for future contributors. Establishing a timebox and clear roles helps prevent review fatigue: a reviewer should be asked to focus on specific risks, a maintainer should determine when the change is ready to merge, and the author should respond with concrete fixes or explanations. The objective is not perfection, but a balanced improvement of code quality while preserving velocity and team morale.

- Clarify scope and objectives with the author

- Define success criteria and acceptance tests

- Establish timebox and participants

- Ensure access to relevant docs, tests, and design notes

- Identify dependencies and risk areas

What to look for during a review

Reviewers should examine both the content and the implications of changes. Focus on readability, naming, and the organization of code into cohesive units. Evaluate whether the logic handles edge cases, whether error handling is robust, and whether dependencies are minimized or well documented. Consider how the change interacts with existing tests and whether it affects performance or resource usage in ways that could matter under real load. Security considerations—such as input validation, proper sanitization, and secure defaults—should be part of every review of user‑facing or data‑handling code. Finally, assess how well the change fits with the team’s architectural patterns and long‑term maintainability goals, including documentation updates where appropriate.

When analyzing concrete patterns, watch for implementation details that commonly degrade performance or maintainability. For example, building a string through repeated concatenation in a loop can hurt performance and memory usage in some languages and runtimes. A pattern like for (let i = 0; i < n; i++) { s += parts[i]; } can be less efficient than pushing each piece onto a separate array with pieces.push(item) and calling pieces.join('') once at the end, although many modern engines optimize concatenation, so measure before asking for the change. Offer concrete alternatives rather than vague critiques, and note where a proposed change may require accompanying tests or documentation. The following focus areas capture the core concerns you should track during most reviews.

- Readability and naming: clear intent, self‑documenting constructs, minimal cognitive load

- Correctness and edge cases: correct handling of unusual inputs, nulls, and failure modes

- Test coverage and reliability: unit tests, integration tests, and observable behavior

- Performance and resource usage: algorithmic efficiency, memory footprint, and scalability

- Security and input validation: correct handling of untrusted data and proper sanitization

- API design and maintainability: stable interfaces, clear contracts, and extensibility
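The string-building pattern mentioned earlier can be sketched as follows. This is a minimal illustration rather than a benchmark: the function names are hypothetical, and whether the array version is actually faster depends on the runtime and the input size.

```javascript
// Builds the result by appending to a string on every iteration.
function joinByConcatenation(parts) {
  let s = "";
  for (let i = 0; i < parts.length; i++) {
    s += parts[i];
  }
  return s;
}

// Collects the pieces in an array and joins them once at the end.
function joinByArray(parts) {
  const pieces = [];
  for (const item of parts) {
    pieces.push(item);
  }
  return pieces.join("");
}

const sampleParts = ["code", " ", "review"];
// Both functions are expected to return the same string.
console.log(joinByConcatenation(sampleParts) === joinByArray(sampleParts));
```

In a review, a comment that links to a comparison like this, along with a measurement on realistic data, is usually more persuasive than a general remark about efficiency.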

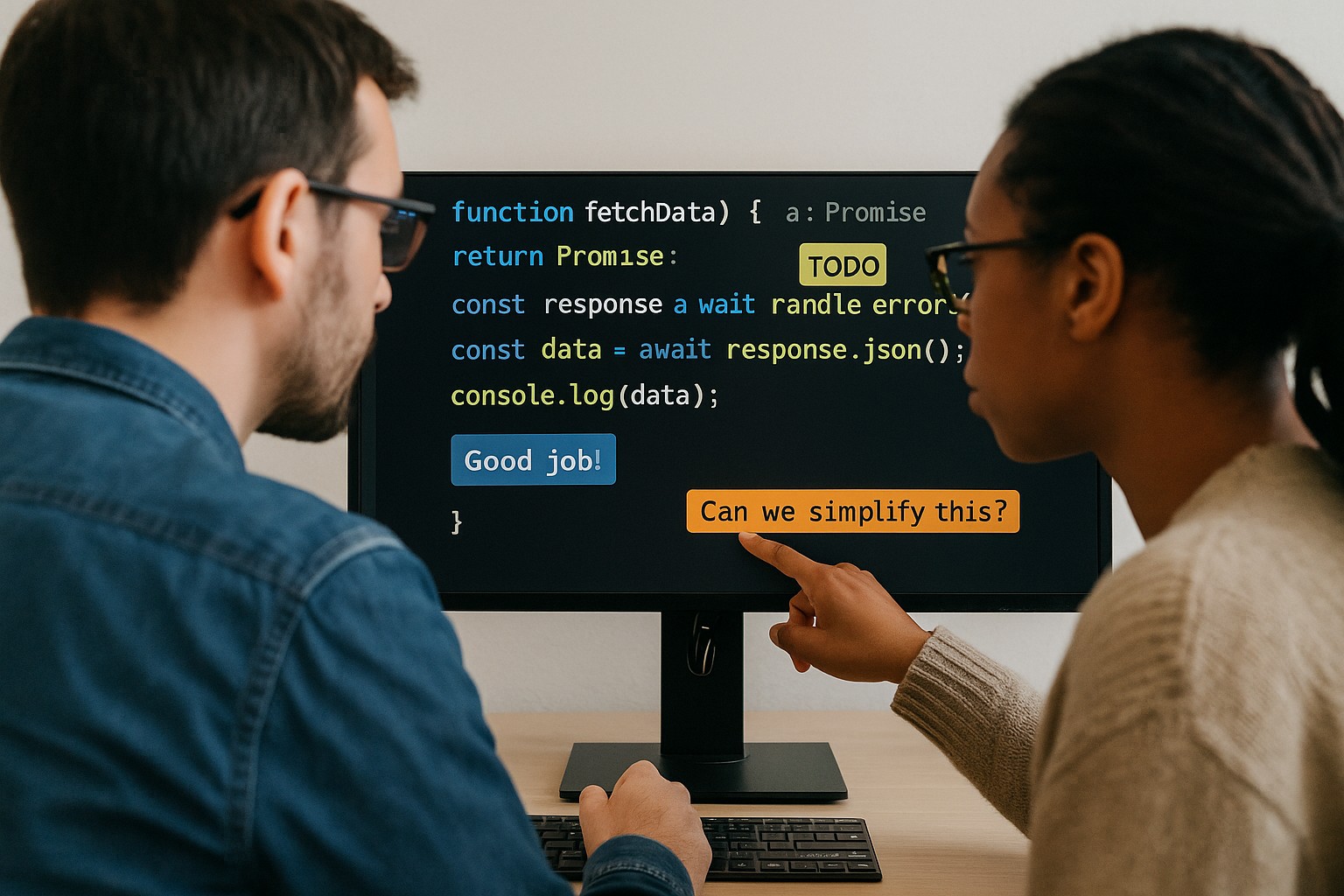

Constructive feedback techniques

Feedback should be actionable, respectful, and targeted at the code and the decisions behind it, not at the author. Begin with what is working well to reinforce positive behavior, then present issues in a way that invites collaboration rather than defensiveness. Frame observations in terms of impact on the system, the team, and the customer, and always attach a concrete suggestion for a fix or an alternate approach. When possible, reference existing guidelines, design notes, or tests so the reviewer’s rationale is grounded in shared expectations. Keep feedback concise, precise, and focused on the smallest relevant portion of the change to avoid overwhelming the author.

A structured approach to feedback helps reduce ambiguity. Describe the issue, explain its potential impact, propose a concrete fix or an alternative, and point to tests or documentation that would verify the change. If a broader architectural concern is raised, explain the trade‑offs and offer a path to incremental improvement rather than a single, sweeping mandate. Where multiple reviewers are involved, aggregate common themes to avoid repetitive comments and ensure consistency across the team. A well‑executed feedback loop shortens iteration times and strengthens trust between contributors and reviewers.

- Identify the issue and its source

- Explain the impact on correctness, performance, or maintainability

- Suggest a concrete fix or safer alternative

- Provide relevant references, tests, or docs

- Offer optional enhancements or refactoring opportunities

- Re‑check after changes and close the loop
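One way to keep comments consistent is a small template that follows the structure above: issue, impact, suggestion, references. The sketch below is only an illustration. The function name `formatReviewComment` and its field names are assumptions, not part of any review tool.

```javascript
// Hypothetical helper: formats one review comment as issue, impact,
// suggestion, and optional references. Field names are illustrative only.
function formatReviewComment({ issue, impact, suggestion, references = [] }) {
  if (!issue || !impact || !suggestion) {
    throw new Error("A comment needs an issue, its impact, and a suggestion");
  }
  const lines = [
    `Issue: ${issue}`,
    `Impact: ${impact}`,
    `Suggestion: ${suggestion}`,
  ];
  if (references.length > 0) {
    lines.push(`References: ${references.join(", ")}`);
  }
  return lines.join("\n");
}

console.log(
  formatReviewComment({
    issue: "The loop reads items[i + 1] on the last iteration.",
    impact: "The final comparison uses undefined, so the last pair is never checked.",
    suggestion: "Stop the loop at items.length - 1.",
    references: ["unit test covering a two-element list"],
  })
);
```

Requiring the impact and suggestion fields nudges reviewers away from comments that only point out a problem.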

Communication and culture

Code review culture sets the pace for how teams collaborate under pressure. A healthy culture emphasizes psychological safety, where contributors feel comfortable raising concerns and asking questions without fear of blame. Asynchronous reviews, clear expectations, and timeboxed feedback help maintain momentum across time zones and schedules. Reviewers should remain mindful of tone, avoid demeaning language, and acknowledge constraints such as tight deadlines or competing priorities. The goal is to cultivate a learning environment where knowledge is shared and quality improves, not a static gate that disciplines contributors. When issues arise, use the opportunity to document decisions, capture context in design notes, and align on next steps so that future changes follow a known path.

To scale culture with growth, establish lightweight governance that makes roles and responsibilities explicit. Define who can approve changes of certain risk levels, when a design review is required, and how to escalate conflicts. Encourage documentation of decisions within the pull request or linked design docs, and tie reviews to the broader release and deployment process. By making expectations explicit and consistent, teams can reduce cycle time while preserving the integrity and reliability of the code base.
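An approval rule keyed on risk level can be written down as data so that it is explicit and easy to review. The sketch below assumes three risk levels and two reviewer roles. These names and counts are hypothetical placeholders, not a recommended policy.

```javascript
// Hypothetical policy table: the risk levels, roles, and approval counts
// are assumptions chosen for illustration.
const APPROVAL_POLICY = new Map([
  ["low", { minApprovals: 1, requiredRoles: [] }],
  ["medium", { minApprovals: 1, requiredRoles: ["maintainer"] }],
  ["high", { minApprovals: 2, requiredRoles: ["maintainer", "security"] }],
]);

// Returns true when the approvals meet the policy for the given risk level.
// Each approval is an object such as { reviewer: "ana", role: "maintainer" }.
function isApprovalSufficient(riskLevel, approvals) {
  const policy = APPROVAL_POLICY.get(riskLevel);
  if (!policy) {
    throw new Error(`Unknown risk level: ${riskLevel}`);
  }
  const rolesPresent = new Set(approvals.map((approval) => approval.role));
  const hasRequiredRoles = policy.requiredRoles.every((role) => rolesPresent.has(role));
  return approvals.length >= policy.minApprovals && hasRequiredRoles;
}
```

Keeping the table separate from the check means a change to the policy shows up as a small, reviewable diff.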

Metrics, documentation, and follow-up

Measuring the effectiveness of code reviews helps teams optimize their process and demonstrate continuous improvement. Avoid vanity metrics that reward speed at the expense of quality. Instead, track a mix of quality and flow indicators, such as defect density discovered during reviews, the time spent in the review stage, and the rate at which issues are closed after feedback. Correlate these with release outcomes, customer impact, and post‑deployment stability to ensure that improvements are translating into real value. Documentation updates, test additions, and architectural notes should be counted as part of the review effort when applicable, since they reduce future ambiguity and support onboarding of new contributors.

Keeping traceability is essential for long‑term maintainability. Attach review notes to the pull request, reference related design documents, and ensure acceptance criteria remain aligned with the implemented changes. Regularly review and refine the criteria used to judge successful reviews, and adjust timeboxes or participation as the team evolves. When a change is merged, summarize key decisions and ensure the corresponding tests, docs, and deployment notes are updated accordingly to prevent drift between the code and its documentation or monitoring.

- Track defect density discovered during reviews

- Monitor review velocity and time to merge

- Link review notes to PRs and design documents

- Anchor acceptance criteria to tests and documentation

- Review and adjust governance as the team grows
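Two of the indicators above can be computed from basic pull request data. The sketch below assumes a hypothetical record shape (`openedAt`, `mergedAt`, `linesChanged`, `defectsFound`) and defines defect density as defects found in review per 1,000 changed lines. A team may reasonably choose a different definition.

```javascript
// Returns the median of a list of numbers, or null for an empty list.
function median(values) {
  if (values.length === 0) return null;
  const sorted = [...values].sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 0 ? (sorted[mid - 1] + sorted[mid]) / 2 : sorted[mid];
}

// Hypothetical record shape:
// { openedAt: ISO date string, mergedAt: ISO date string,
//   linesChanged: number, defectsFound: number }
function summarizeReviews(reviews) {
  const totalLines = reviews.reduce((sum, r) => sum + r.linesChanged, 0);
  const totalDefects = reviews.reduce((sum, r) => sum + r.defectsFound, 0);
  const hoursToMerge = reviews.map(
    (r) => (Date.parse(r.mergedAt) - Date.parse(r.openedAt)) / 3600000
  );
  return {
    // Assumed definition: defects found in review per 1,000 changed lines.
    defectsPerThousandLines: totalLines === 0 ? 0 : (totalDefects * 1000) / totalLines,
    medianHoursToMerge: median(hoursToMerge),
  };
}
```

The median is used for time to merge because a few long-running pull requests can distort an average.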

Workflow, governance, and scaling reviews

As teams scale, it becomes essential to formalize workflows that preserve quality without creating bottlenecks. Agree on a clear definition of done that includes code, tests, documentation, and security checks. Establish code owners for critical subsystems and use automated checks in the CI pipeline to enforce gating rules. Apply consistent branch strategies and ensure that reviews occur within an agreed timebox so that developers can plan their work around predictable review windows. In larger teams, segment reviews by area of expertise or risk level to keep feedback focused and actionable. A careful balance between rapid iteration and thorough validation maintains momentum while safeguarding the product’s reliability and security.

Beyond processes, governance should codify escalation paths for conflicting feedback and clearly delineate when a change requires a design or security sign‑off. Provide mentors or senior reviewers who can guide junior contributors and help them grow through example and structured feedback. With scalable practices in place, organizations can maintain consistent quality as the code base expands and development velocity increases, so that every change contributes to a more robust and maintainable system.

What is the ideal length of a code review?

The ideal review length balances thoroughness with momentum. Short, timeboxed reviews—often in the 30–60 minute range for typical changes—tend to keep focus and reduce cognitive load. For larger or riskier changes, it is appropriate to schedule multiple passes or split the work into smaller, incremental pull requests. The key is to ensure that reviewers have enough context to understand the decision and that the author can respond with concrete fixes or clarifications within a reasonable timeframe.

How should conflicts in feedback be handled?

Conflicts are best resolved by separating code decisions from design or architectural concerns, then bringing in the appropriate stakeholders for a quick alignment. Establish a clear escalation path and use existing governance mechanisms to reach consensus. Document the final decision and rationale so future contributors understand the trade‑offs and the chosen approach, reducing repeat debates on similar topics.

How can you measure the success of code reviews?

Success can be assessed using a combination of quality and process metrics. Look at defect density identified in reviews, the rate of rework needed after merge, and the time taken to close reviews. Complement these with qualitative indicators such as reviewer engagement, the percentage of changes that include tests and documentation updates, and feedback on the clarity of PR descriptions and comments. A balanced scorecard approach helps avoid optimizing for a single metric at the expense of overall quality.

How should security concerns be integrated into reviews?

Security should be treated as a first‑class criterion in every review. Check input validation, error handling, access controls, and sensitive data exposure. Encourage reviewers to ask whether threat models or risk assessments were considered for the change and to verify that any security fixes are tested in representative environments. Maintain a checklist that mirrors OWASP Top 10 considerations relevant to the project and reference it during reviews to ensure consistency across teams.
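As an example of what a reviewer might look for under input validation, the sketch below checks an untrusted value against an allow-list pattern before it is used. The username rule (3 to 32 ASCII letters, digits, underscores, or hyphens) and the name `parseUsername` are assumptions made for illustration.

```javascript
// Hypothetical rule: 3 to 32 characters, ASCII letters, digits, "_" or "-".
const USERNAME_PATTERN = /^[A-Za-z0-9_-]{3,32}$/;

// Validates untrusted input and returns a result object instead of throwing.
// Error messages deliberately do not echo the raw input back to the caller.
function parseUsername(input) {
  if (typeof input !== "string") {
    return { ok: false, error: "username must be a string" };
  }
  const trimmed = input.trim();
  if (!USERNAME_PATTERN.test(trimmed)) {
    return { ok: false, error: "username has invalid length or characters" };
  }
  return { ok: true, value: trimmed };
}
```

An allow-list states what is accepted rather than listing what is rejected, which tends to be easier to reason about during review.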

How can new team members be trained in code review best practices?

Effective training pairs new contributors with experienced reviewers in a structured onboarding program. Use real PRs with guided walkthroughs that highlight the reasoning behind each comment and illustrate how to phrase feedback constructively. Provide a concise reference of established conventions, common pitfalls, and a glossary of terms the team uses in reviews. Regular feedback loops and lightweight mentorship help new members integrate quickly while building confidence in contributing high‑quality code.

How should code reviews be integrated with CI/CD?

Code reviews should be tightly integrated with automated checks in the CI/CD pipeline. Enforce gating rules that require passing tests, static analysis, and security checks before a merge can proceed. Ensure reviewers have visibility into CI results and that failures or flaky tests are treated as review blockers when relevant. This integration helps maintain a consistent standard while keeping the human review focused on design intent, correctness, and maintainability rather than low‑level mistakes that tooling can catch.
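A gating rule of this kind can be sketched as a function that lists the reasons a merge is blocked. The check names and the default approval count below are hypothetical. A real pipeline would take them from its own configuration.

```javascript
// Hypothetical check names; a real pipeline would define its own.
const REQUIRED_CHECKS = ["unit-tests", "static-analysis", "security-scan"];

// Returns the reasons a merge is blocked; an empty list means the gate is open.
// checkResults maps a check name to a status string such as "passed" or "failed".
function findMergeBlockers(checkResults, approvalCount, minApprovals = 1) {
  const blockers = [];
  for (const name of REQUIRED_CHECKS) {
    const status = checkResults[name];
    if (status !== "passed") {
      // A check that never reported is treated the same as a failure.
      blockers.push(`${name}: ${status ?? "missing"}`);
    }
  }
  if (approvalCount < minApprovals) {
    blockers.push(`approvals: ${approvalCount}/${minApprovals}`);
  }
  return blockers;
}
```

Returning the reasons, rather than a single true or false, gives the author something concrete to act on when the gate stays closed.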