Monolithic vs SOA vs Microservices: Architecture Evolution

Architecture Evolution: From Monoliths to SOA and Microservices

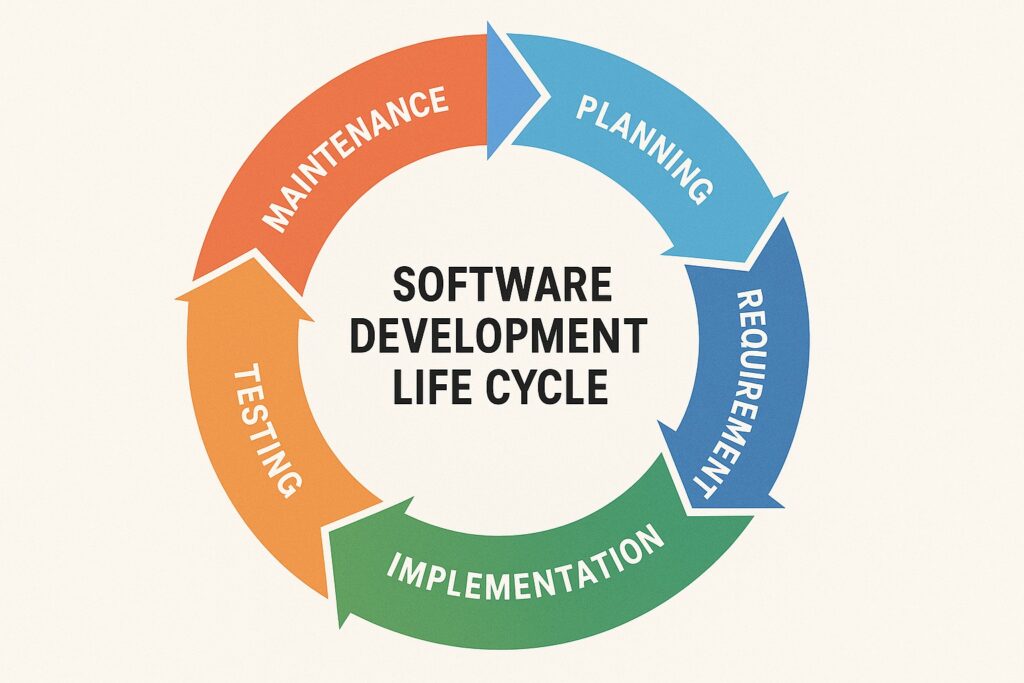

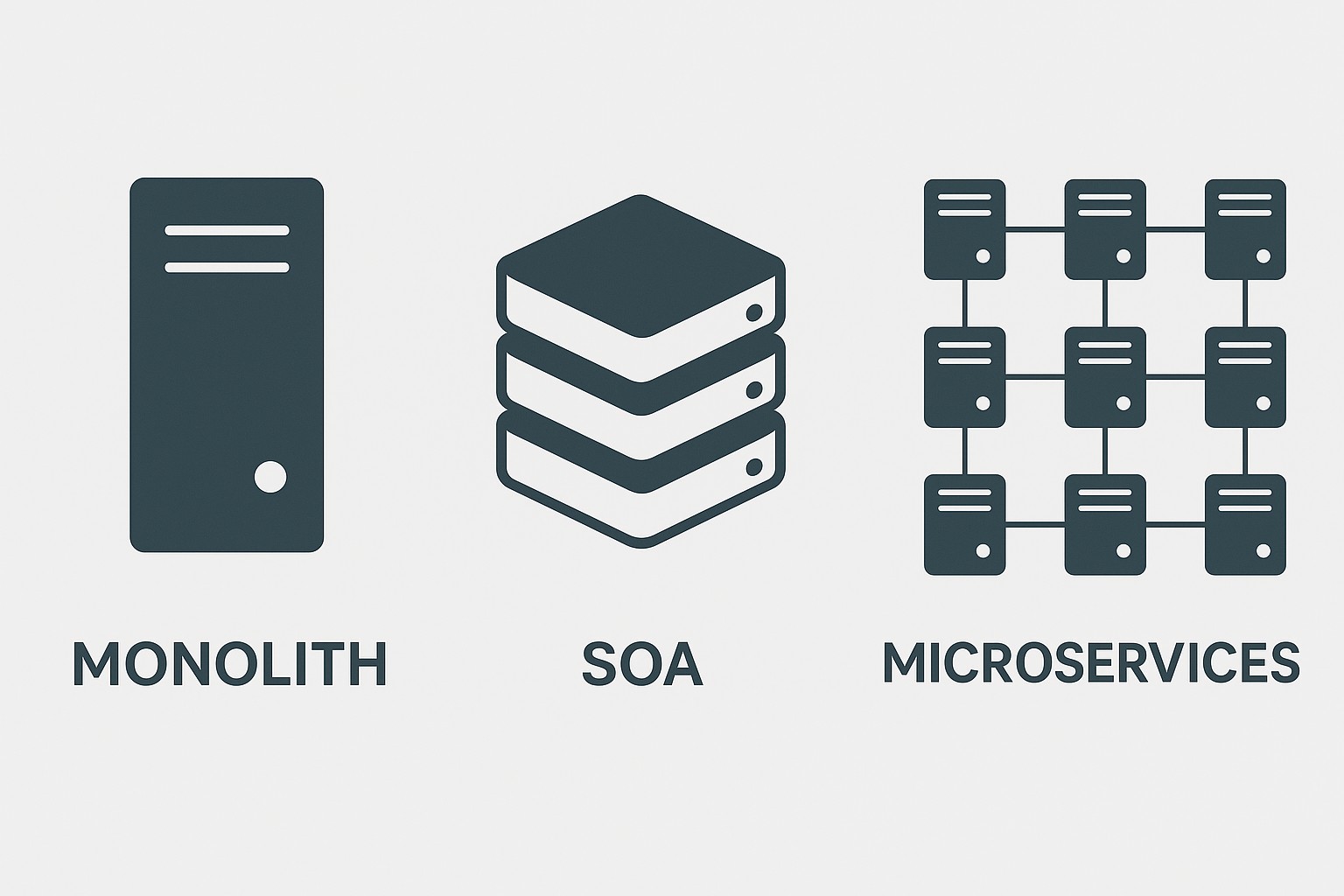

The software industry has traveled a long road from early applications built and run as a single unit on a single stack to modern approaches that emphasize autonomy, scalability, and rapid iteration. In the classic era, many organizations built large, tightly coupled applications as one cohesive unit, an architecture that could deliver a complete feature set but imposed a heavy burden when changes were needed. Monolithic systems typically started as pragmatic answers to immediate business needs: a unified codebase, a shared data model, and a single deployment artifact. As business demands grew (support for more users, more regions, and more complex integration with external systems), the same design that once enabled speed began to constrain it. The result was longer release cycles, higher risk, and more challenging maintenance as teams struggled to hold the entire system in one mental model.

Over time, engineers began to recognize the benefits of partitioning software into well-defined boundaries. This led to discussions about service boundaries, interoperability, and governance mechanisms that could enable teams to work more independently without sacrificing overall coherence. The move toward service-oriented thinking introduced the idea that a system could be composed of modular services that communicate through shared contracts. As organizations matured, microservices emerged as an evolutionary step: small, independently deployable services aligned with business capabilities, each with its own data boundaries and lifecycle. The progression from monoliths to service-oriented approaches revealed a core truth: architecture is not just about technical elegance, but about enabling teams to operate at the speed of business while managing risk. In practice, the journey often involves hybrid models, incremental migration, and a deliberate focus on bounded contexts and platform capabilities that support autonomy without fragmenting the enterprise.

Today’s landscape sits at the intersection of organizational structure, technology choices, and operating models. The architecture evolution reflects a shift from centralized, all-encompassing systems to distributed, contract-driven ecosystems that balance independence with integration. Enterprises ask not only whether a system can scale technically, but whether it can scale in terms of governance, security, and human coordination. This broader perspective helps explain why many organizations adopt a staged approach: starting with a monolith for simplicity, moving to SOA to enable reusable services across lines of business, and then embracing microservices to empower autonomous teams to innovate rapidly. The result is a tapestry where boundaries are drawn not only around code, but around teams, data ownership, and the events that shape the business.

Monolithic Architecture: Characteristics, Benefits, and Drawbacks

A monolithic architecture is defined by a single, unified deployable unit that contains the full application—its presentation, business logic, and data access layers. In practice, this means one codebase, one runtime, and often one database. Monoliths are straightforward to build, test, and deploy when the scope of the domain is narrow and the team footprint is small. They offer a coherent end-to-end experience for developers working within a familiar stack and can yield strong performance characteristics due to tight integration and in-process calls. However, the simplicity can become a source of rigidity as the system grows, making it harder to isolate failures, scale selectively, or adopt new technologies without risking ripple effects across the entire application.

The benefits of a monolithic approach are most evident in the early stages of a product’s life cycle. A single deployment unit reduces orchestration complexity, simplifies debugging, and enables rapid iteration when changes affect many parts of the system at once. A unified data model and shared libraries create a consistent developer experience, lowering the cognitive overhead for newcomers and enabling cohesive testing strategies. Yet these advantages come with trade-offs: scaling decisions are tied to the entire application rather than to individual components, so resources are often spent replicating features that do not carry heavy load. A centralized codebase can also slow down delivery as the system grows, because even a small modification must be built, tested, and released with the whole application, which often requires coordination across teams and subsystems. The risk of cascading failures increases when a single bug or performance bottleneck threatens the entire application, and organizational alignment around a single technology stack can impede modernization efforts or experimentation with newer patterns.

In practice, monolithic architectures remain a practical choice under certain conditions. When the domain is relatively small, the team is tightly aligned, and the application’s performance profile fits within the capabilities of a single deployment, a monolith can deliver speed and simplicity. For startups or small product teams where time-to-value is paramount, a monolith can serve as a bridge to more modular architectures as needs evolve. Additionally, for regulated environments where governance, auditability, and data consistency are paramount, a unified system can be easier to manage and reason about. As organizations grow and requirements become more complex—especially around scale, resilience, or compliance—a clearer path often emerges toward breaking the monolith into a set of focused services that can be evolved independently, tested in isolation, and deployed with greater cadence. The decision to migrate away from a monolith should be guided by tangible limits in deployment velocity, fault isolation, and the capacity to evolve the system without destabilizing critical business functions.

- Centralized deployment as a single artifact, simplifying release management and rollback.

- Shared codebase and runtime, offering a straightforward debugging and testing model.

- Tight coupling between components, making cross-cutting changes riskier and more difficult to localize.

- Unified data model and database schema, which simplifies consistency but can create bottlenecks for scaling and modernization.
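As a rough illustration of the characteristics above, the following sketch shows presentation, business logic, and data access living in one process and sharing one data store. All class names and the in-memory `Database` are hypothetical stand-ins chosen for this example, not part of any particular framework.

```python
from dataclasses import dataclass, field


@dataclass
class Database:
    """Stand-in for the single shared database (hypothetical, in-memory)."""
    orders: dict = field(default_factory=dict)


class OrderRepository:
    """Data access layer."""

    def __init__(self, db: Database) -> None:
        self.db = db

    def save(self, order_id: str, total_cents: int) -> None:
        self.db.orders[order_id] = total_cents


class OrderService:
    """Business logic layer; calls the repository directly, in-process."""

    def __init__(self, repo: OrderRepository) -> None:
        self.repo = repo

    def place_order(self, order_id: str, prices_cents: list[int]) -> int:
        total = sum(prices_cents)
        self.repo.save(order_id, total)
        return total


class OrderController:
    """Presentation layer; shapes the response for the caller."""

    def __init__(self, service: OrderService) -> None:
        self.service = service

    def post_order(self, order_id: str, prices_cents: list[int]) -> dict:
        total = self.service.place_order(order_id, prices_cents)
        return {"order_id": order_id, "total_cents": total}


# Everything is wired together and shipped as one deployable unit.
db = Database()
controller = OrderController(OrderService(OrderRepository(db)))
response = controller.post_order("o-1", [1999, 501])
```

Every call here is a plain function call with no network hop, which is where the simplicity and performance of a monolith come from. The same direct wiring is also why a change to the shared `Database` shape can ripple through every layer.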

Service-Oriented Architecture (SOA): Characteristics, Benefits, and Challenges

SOA represents a deliberate shift toward breaking the system into discrete services that communicate through well-defined contracts. Each service owns a portion of the business domain and provides a stable interface that other components can consume, often using an enterprise service bus or standardized protocols. The goal is to achieve loose coupling, interoperability, and reuse across organizational boundaries, while still maintaining governance and standardized integration patterns. SOA emphasizes contract-first design, versioning strategies, and a catalog of services that can be composed to deliver end-to-end capabilities. While SOA improves modularity and reuse compared to a pure monolith, it also introduces complexity around orchestration, governance, and network-based communication, which can impact performance and reliability if not carefully managed.

The architecture relies on clearly defined service boundaries and stable interfaces. By separating concerns into services with explicit contracts, teams can evolve individual components with less risk to the rest of the system. Standardization—such as common messaging formats, authentication models, and governance policies—helps maintain consistency across diverse technologies and platforms. However, the increased number of integration points and potential orchestration layers can become bottlenecks if not governed effectively. The design also raises questions about data ownership and consistency: each service may manage its own data store, leading to eventual consistency and the need for robust patterns for data synchronization, cross-service transactions, and failure handling. In well-governed SOA, these challenges are addressed through service contracts, versioning strategies, and clear ownership models, enabling scalable reuse while preserving system integrity.

A mature SOA practice typically includes a centralized service catalog, standardized interfaces, and governance that balances reuse with agility. The service boundaries align with business capabilities, often driven by domain-driven design principles to ensure that each service encapsulates a cohesive set of behaviors. Interoperability is a core strength, enabling heterogeneous environments to communicate through common protocols and data formats. Yet, the governance required to prevent duplication, ensure compatibility, and avoid an over-engineered service bus is non-trivial. When implemented well, SOA can decouple teams from one another, reduce time-to-delivery for new capabilities, and provide a scalable path to more advanced architectures, while keeping risk in check through disciplined design and testing.

- Loose coupling via well-defined service contracts and interfaces.

- Interoperability across heterogeneous platforms using standard protocols and data formats.

- Central governance and a service catalog to promote reuse and standardization.

- Potential for over-engineering and central bottlenecks if governance becomes too heavy-handed or poorly aligned with product goals.
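The ideas of contract-first design, versioning, and a service catalog can be sketched in a few lines. This is a minimal illustration under stated assumptions: `ServiceCatalog`, the `CustomerV1`/`CustomerV2` contracts, and the sample record are all hypothetical, and a real SOA deployment would typically express contracts in an interface definition language and resolve them over the network.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class CustomerV1:
    """Version 1 of a hypothetical customer contract."""
    customer_id: str
    name: str


@dataclass(frozen=True)
class CustomerV2:
    """Version 2 splits the name; V1 stays available for existing consumers."""
    customer_id: str
    first_name: str
    last_name: str


class ServiceCatalog:
    """Maps (service name, contract version) to a provider callable."""

    def __init__(self) -> None:
        self._entries: dict[tuple[str, int], Callable] = {}

    def register(self, name: str, version: int, provider: Callable) -> None:
        self._entries[(name, version)] = provider

    def lookup(self, name: str, version: int) -> Callable:
        try:
            return self._entries[(name, version)]
        except KeyError:
            raise LookupError(f"no provider for {name} v{version}") from None

    def versions(self, name: str) -> list[int]:
        return sorted(v for (n, v) in self._entries if n == name)


_RECORDS = {"c-1": ("Ada", "Lovelace")}  # illustrative data only


def get_customer_v2(customer_id: str) -> CustomerV2:
    first, last = _RECORDS[customer_id]
    return CustomerV2(customer_id, first, last)


def get_customer_v1(customer_id: str) -> CustomerV1:
    # V1 is served as an adapter over V2, so both contracts stay in sync.
    v2 = get_customer_v2(customer_id)
    return CustomerV1(v2.customer_id, f"{v2.first_name} {v2.last_name}")


catalog = ServiceCatalog()
catalog.register("customer", 1, get_customer_v1)
catalog.register("customer", 2, get_customer_v2)

legacy_view = catalog.lookup("customer", 1)("c-1")
current_view = catalog.lookup("customer", 2)("c-1")
```

Keeping the old version registered alongside the new one lets consumers migrate on their own schedule, which is the practical payoff of an explicit versioning strategy.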

Microservices Architecture: Characteristics, Benefits, and Challenges

Microservices take the principle of service orientation to an extreme by structuring software as a suite of small, autonomous services, each responsible for a specific business capability. Each microservice typically owns its own data store, communicates through lightweight protocols, and can be developed, deployed, and scaled independently. The organizational model often aligns with cross-functional, feature-focused teams that own end-to-end delivery for their services. This approach enables rapid experimentation and resilience, as failures are contained within a single service and do not necessarily bring down the entire system. It also supports technology diversity, allowing teams to select the best tools for their specific domain. However, the distributed nature of microservices introduces complexity in areas such as data management, observability, network reliability, and deployment orchestration. Achieving consistency across services, handling cross-cutting concerns, and ensuring end-to-end quality require careful design and mature operational practices.

A successful microservices implementation relies on disciplined domain decomposition, clear ownership of services, and robust infrastructure. Autonomy is empowered by independent deployment pipelines, lightweight network protocols, and resilient patterns like circuit breakers and retries. Decentralized data ownership reduces coupling and allows services to optimize their storage and access patterns, but it also raises questions about data consistency, analytics, and reporting. The architecture often employs event-driven communication and eventual consistency to decouple services further and support scalable, real-time workflows. Teams must invest in observability, tracing, and standardized testing approaches to maintain visibility into the system’s behavior as it grows. While the benefits include faster feature delivery, improved fault isolation, and the ability to scale specific components, the cost is a more complex operational environment that requires investment in automation, culture, and continuous learning.
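To make the circuit breaker pattern mentioned above more concrete, here is a minimal sketch. The `CircuitBreaker` class, its thresholds, and the injected `clock` parameter are assumptions made for illustration; production systems usually rely on a resilience library or a service mesh rather than hand-rolled code.

```python
import time
from typing import Callable, Optional


class CircuitOpenError(RuntimeError):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    @property
    def state(self) -> str:
        if self._opened_at is None:
            return "closed"
        if self._clock() - self._opened_at >= self.reset_timeout:
            return "half-open"
        return "open"

    def call(self, func: Callable, *args, **kwargs):
        if self.state == "open":
            raise CircuitOpenError("downstream call skipped")
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            # A failed trial call in half-open state, or too many failures
            # while closed, (re)opens the circuit.
            if self._opened_at is not None or self._failures >= self.failure_threshold:
                self._opened_at = self._clock()
            raise
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result


now = [0.0]  # fake clock so the example does not need to wait
breaker = CircuitBreaker(failure_threshold=2, reset_timeout=10.0,
                         clock=lambda: now[0])


def flaky_call() -> str:
    raise ConnectionError("inventory service unavailable")


for _ in range(2):
    try:
        breaker.call(flaky_call)
    except ConnectionError:
        pass

state_after_failures = breaker.state  # "open": further calls fail fast
now[0] = 10.0
state_after_timeout = breaker.state   # "half-open": one trial call allowed
```

Failing fast while the circuit is open keeps a struggling dependency from tying up callers, which is one way failures stay contained within a single service.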

- Independently deployable services with their own data stores and lifecycles.

- Decentralized data management and polyglot persistence to optimize domain-specific needs.

- Event-driven or asynchronous communication to enable loose coupling and resilience.

- Increased complexity in testing, tracing, deployment, and operational governance, requiring strong automation and platform capabilities.
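The combination of per-service data ownership and event-driven communication might look roughly like the sketch below. The `EventBus` is a hypothetical, synchronous, in-memory stand-in for a real message broker, and the service and topic names are invented for the example.

```python
from collections import defaultdict
from typing import Callable


class EventBus:
    """In-memory stand-in for a message broker (synchronous for simplicity)."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        for handler in self._subscribers[topic]:
            handler(event)


class OrderService:
    def __init__(self, bus: EventBus) -> None:
        self.bus = bus
        self.orders: dict[str, dict] = {}  # data owned only by this service

    def place_order(self, order_id: str, sku: str, quantity: int) -> None:
        self.orders[order_id] = {"sku": sku, "quantity": quantity}
        self.bus.publish(
            "order.placed",
            {"order_id": order_id, "sku": sku, "quantity": quantity},
        )


class InventoryService:
    def __init__(self, bus: EventBus, stock: dict[str, int]) -> None:
        self.stock = dict(stock)  # separate store, never read by OrderService
        bus.subscribe("order.placed", self.on_order_placed)

    def on_order_placed(self, event: dict) -> None:
        self.stock[event["sku"]] -= event["quantity"]


bus = EventBus()
orders = OrderService(bus)
inventory = InventoryService(bus, {"widget": 10})
orders.place_order("o-1", "widget", 3)
```

`OrderService` never references `InventoryService` directly; it only knows the event it emits. With a real broker the handler would run asynchronously, so the stock level would lag the order briefly, which is the eventual consistency the text describes.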

Choosing an Approach in Practice: Trade-offs, Governance, and Roadmaps

In practice, decisions about monoliths, SOA, or microservices are guided by business goals, organizational structure, and the capacity to operate and evolve complex systems. Organizations with small, cross-functional teams, straightforward domains, and a need for fast initial delivery may favor a monolith or a lightly modularized architecture to begin with. As the product matures, teams can introduce modular boundaries, refactor into services, and selectively extract microservices around high-value capabilities. The critical factor is alignment between architecture and team topology: if teams cannot own end-to-end slices of functionality, autonomy is undermined, and architectural benefits may not materialize.

A practical roadmap often embraces a staged evolution: start with a clean monolithic foundation, implement clear module boundaries, and introduce service interfaces to enable gradual decomposition. The Strangler Fig pattern—replacing parts of a monolith with new services over time—provides a controlled path to migration while preserving business continuity. Platform teams or chapters that own cross-cutting concerns (security, observability, CI/CD, and platform services) help accelerate adoption and reduce cognitive load on product teams. Hybrid architectures, where the core remains monolithic or SOA-based while new features are delivered as microservices, can balance risk and speed. Decisions should be revisited regularly in light of changing requirements, regulatory constraints, and the organization’s evolving capabilities in automation, testing, and incident management.
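The Strangler Fig pattern described above usually hinges on a routing layer that sits in front of the monolith. The following is a simplified sketch, assuming a hypothetical `StranglerFacade` that routes by path prefix; the handler functions and paths are invented, and a real router would match on whole path segments rather than raw string prefixes.

```python
from typing import Callable

Handler = Callable[[str], str]


def legacy_monolith(path: str) -> str:
    return f"monolith handled {path}"


def billing_service(path: str) -> str:
    return f"billing-service handled {path}"


class StranglerFacade:
    """Routes by path prefix; unmatched paths fall through to the monolith."""

    def __init__(self, legacy: Handler) -> None:
        self._legacy = legacy
        self._routes: dict[str, Handler] = {}

    def migrate(self, prefix: str, handler: Handler) -> None:
        self._routes[prefix] = handler

    def rollback(self, prefix: str) -> None:
        self._routes.pop(prefix, None)

    def handle(self, path: str) -> str:
        # Prefer the longest matching prefix so nested routes behave predictably.
        for prefix in sorted(self._routes, key=len, reverse=True):
            if path.startswith(prefix):
                return self._routes[prefix](path)
        return self._legacy(path)


facade = StranglerFacade(legacy_monolith)
before = facade.handle("/billing/invoices/7")
facade.migrate("/billing", billing_service)
after = facade.handle("/billing/invoices/7")
untouched = facade.handle("/catalog/items")
```

Because callers only ever talk to the facade, each capability can be moved (or rolled back) one route at a time while the rest of the monolith keeps serving traffic.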

FAQ

What is monolithic architecture, and when is it appropriate?

Monolithic architecture is a design where the entire application is built and deployed as a single unit, with a unified codebase and runtime. It is often appropriate for small-to-medium-sized applications, startups, or teams that need rapid delivery with minimal orchestration, where the domain is relatively straightforward and the overhead of managing multiple services would outweigh the benefits. In such contexts, a monolith can be simpler to develop, test, and deploy, providing a coherent user experience and easier debugging. As complexity grows, organizations may reach a point where the cost of scaling, updating, and maintaining a monolith becomes prohibitive, prompting consideration of modularization or service-oriented approaches.

How do SOA and microservices relate to domain-driven design?

SOA and microservices are compatible with domain-driven design (DDD) in that they encourage boundary-driven decomposition. DDD emphasizes bounded contexts and explicit domain boundaries, which map well to services in both SOA and microservices architectures. In practice, each bounded context can become a service with its own data, logic, and interfaces, enabling teams to own the lifecycle and evolve capabilities independently. The alignment of service boundaries with domain concepts helps reduce coupling and fosters clearer contracts. However, the complexity of distributed systems in microservices requires additional patterns around integration, data governance, and cross-cutting concerns to ensure the architecture remains coherent as it scales.

What organizational changes are required to adopt microservices?

Adopting microservices typically requires a shift toward autonomous, cross-functional teams that own end-to-end delivery for specific capabilities. This includes investing in DevOps practices, automated testing, continuous integration and deployment, and robust monitoring and observability. Organizations must establish clear ownership for services, define contracts and versioning strategies, and implement platform capabilities (security, logging, tracing, and resilience) to reduce duplication of effort. A cultural emphasis on collaboration, lightweight governance, and a willingness to iterate on service boundaries helps teams move from centralized control to empowered execution while managing the risk of distributed architectures.

How do you handle data management across services?

Data management in distributed architectures involves explicit ownership, bounded contexts, and strategies for consistency. Each service typically owns its own data store, enabling independence and optimized performance for its domain. To coordinate across services, teams use patterns such as event-driven updates, eventual consistency, and sagas for distributed transactions. Shared data across services should be minimized to reduce coupling, with read models or materialized views used for reporting and analytics. Strong emphasis on observability and data governance helps ensure data quality, traceability, and compliance as the system evolves.
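A saga replaces a single distributed transaction with a sequence of local steps, each paired with a compensating action. The sketch below is a minimal, in-process illustration; `run_saga` and the step functions are hypothetical names, and a real saga would also persist its progress so it can resume after a crash.

```python
from typing import Callable

Step = tuple[Callable[[], None], Callable[[], None]]  # (action, compensation)


def run_saga(steps: list[Step]) -> bool:
    """Run each action in order; on failure, compensate completed steps in reverse."""
    completed: list[Callable[[], None]] = []
    for action, compensation in steps:
        try:
            action()
        except Exception:
            for undo in reversed(completed):
                undo()
            return False
        completed.append(compensation)
    return True


log: list[str] = []


def reserve_stock() -> None:
    log.append("stock reserved")


def release_stock() -> None:
    log.append("stock released")


def charge_card() -> None:
    raise RuntimeError("card declined")  # simulated failure in the second step


def refund_card() -> None:
    log.append("card refunded")


succeeded = run_saga([
    (reserve_stock, release_stock),
    (charge_card, refund_card),
])
```

Here the payment step fails, so only the already-completed stock reservation is undone; `refund_card` never runs because the charge never succeeded.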

What patterns help with reliability and deployment in microservices?

Reliability in microservices is enhanced through patterns such as circuit breakers, retries with backoff, timeouts, and bulkheads to isolate failures. Observability tools—distributed tracing, centralized logging, and metrics—provide visibility across services. Deployment patterns like trunk-based development, feature flags, and blue-green or canary releases reduce risk during updates. Architectural strategies include eventual consistency for data, idempotent operations for retries, and well-defined service contracts to avoid breaking changes. Together, these patterns create a resilient system that can evolve rapidly without sacrificing reliability or user experience.
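Retries are only safe when the operation being retried is idempotent. The sketch below pairs a simple exponential backoff helper with a service that deduplicates by idempotency key. `retry_with_backoff`, `PaymentService`, and the key value are hypothetical; the helper omits jitter and retries on any exception, both of which a production version would likely refine.

```python
import time
from typing import Callable


def retry_with_backoff(func: Callable[[], object], attempts: int = 4,
                       base_delay: float = 0.1,
                       sleep: Callable[[float], None] = time.sleep):
    """Call func, doubling the delay after each failure; re-raise the last error."""
    for attempt in range(attempts):
        try:
            return func()
        except Exception:
            if attempt == attempts - 1:
                raise
            sleep(base_delay * (2 ** attempt))


class PaymentService:
    """Hypothetical service that deduplicates requests by idempotency key."""

    def __init__(self) -> None:
        self.charges: dict[str, int] = {}
        self._calls = 0

    def charge(self, idempotency_key: str, amount_cents: int) -> str:
        self._calls += 1
        if idempotency_key not in self.charges:
            self.charges[idempotency_key] = amount_cents
        if self._calls == 1:
            # Simulate a lost response: the charge was applied,
            # but the caller sees an error and will retry.
            raise TimeoutError("response lost")
        return "charged"


delays: list[float] = []  # records requested sleeps instead of actually waiting
payments = PaymentService()
result = retry_with_backoff(
    lambda: payments.charge("key-123", 500),
    sleep=delays.append,
)
```

The first attempt applies the charge but reports a timeout; the retry reuses the same key, so the customer is charged once even though the request was sent twice.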