Neural Networks 101: Deep, Recurrent, and Graph Neural Networks

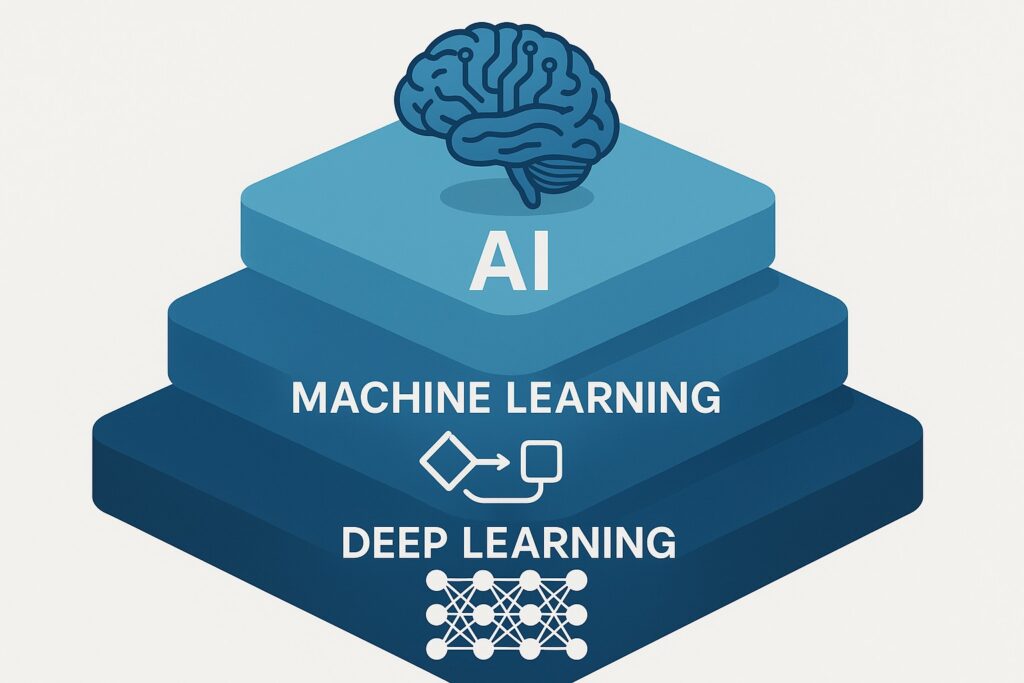

What is a neural network?

Neural networks are a family of computational models inspired by the brain’s network of neurons. They consist of units, often called neurons, organized into layers with weighted connections that transmit signals from one layer to the next. Data enters the network as a set of features, is transformed by a sequence of linear operations and nonlinear activation functions, and yields outputs such as class labels, predicted values, or decisions. Training a neural network involves adjusting the connection weights to minimize a loss function, typically through gradient-based optimization methods like stochastic gradient descent or its variants. This learning process relies on labeled data or well-defined self-supervised objectives, and it benefits from careful data preprocessing, appropriate regularization, and rigorous validation to ensure the model generalizes beyond the training examples.
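
To make the training loop concrete, here is a minimal sketch that fits a single linear unit to toy data by gradient descent on mean-squared error. The data, learning rate, and step count are illustrative assumptions, and the sketch uses full-batch gradient descent rather than the stochastic variant for simplicity.

```python
# Fit y = w*x + b to toy data with full-batch gradient descent on MSE.
# The function name, data, and hyperparameters are illustrative assumptions.

def train_linear_neuron(xs, ys, learning_rate=0.05, steps=2000):
    w, b = 0.0, 0.0  # connection weight and bias, both start at zero
    n = len(xs)
    for _ in range(steps):
        # Forward pass: prediction error for every training example.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of the mean-squared-error loss with respect to w and b.
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / n * sum(errors)
        # Update: move each parameter a small step against its gradient.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]  # targets generated by y = 2x + 1
w, b = train_linear_neuron(xs, ys)
print(w, b)  # both should end up very close to the generating values 2 and 1
```

Real networks repeat the same idea across many weights and layers, with the gradients computed by backpropagation instead of by hand.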

At a high level, a neural network learns a mapping from input features to outputs by composing simple nonlinear computations across layers. A single neuron is modest in capability, but stacking neurons into layers lets the network approximate complex nonlinear functions. With sufficient depth and properly tuned hyperparameters, a network can model subtle interactions in diverse data domains, including images, text, audio, and structured records. In practice, neural networks are trained on large datasets and deployed in production environments where latency, reliability, and interpretability are important considerations. The combination of data scale, hardware acceleration, and new research techniques has driven rapid progress in business applications, from automated customer support to predictive maintenance.
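
A classic illustration of why layering matters is XOR: no single linear-threshold neuron can represent it, but two ReLU units followed by a linear output can. The sketch below uses hand-picked weights (an assumption for illustration; nothing is learned here) to show the composition at work.

```python
def relu(z):
    """Rectified linear unit: passes positive values, zeroes out the rest."""
    return max(0.0, z)


def two_layer_xor(x1, x2):
    # Hidden layer: two ReLU units with hand-picked (not learned) weights.
    h1 = relu(x1 + x2)        # active when at least one input is on
    h2 = relu(x1 + x2 - 1.0)  # active only when both inputs are on
    # Output layer: a linear combination of the hidden activations.
    return h1 - 2.0 * h2


for a in (0, 1):
    for b in (0, 1):
        print(a, b, two_layer_xor(a, b))
```

The output is 1 when exactly one input is on and 0 otherwise, which is the behavior a single unit cannot produce on its own.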

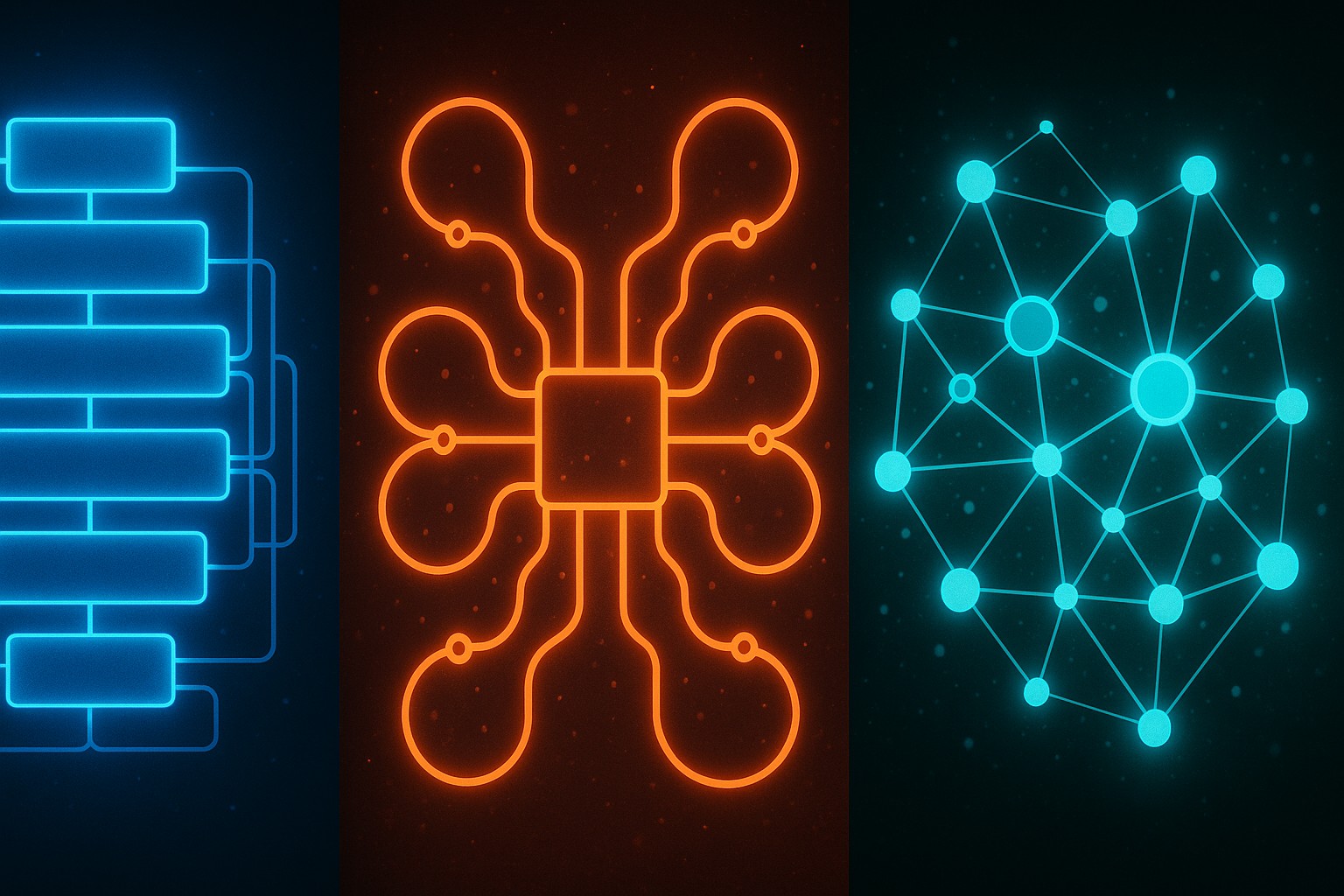

In business, neural networks are expected to produce actionable insights and decisions rather than remain academic curiosities. They function as data-driven predictors and recognizers that can adapt as new information arrives. In many cases, success hinges on aligning the model with clear objectives, maintaining data quality, and implementing governance around model risk. The next sections describe three major families (Deep Neural Networks, Recurrent Neural Networks, and Graph Neural Networks) and illustrate how each handles data in practical settings, which use cases each suits best, and what the typical constraints look like in real-world environments.

Deep Neural Networks (DNNs)

Deep neural networks extend the idea of a basic feedforward model by stacking multiple hidden layers. Each layer learns increasingly abstract representations, enabling the network to extract complex patterns from raw data. Depth enhances expressive power, but it also introduces new training challenges, such as the difficulty of propagating error signals backward through many layers, the risk of overfitting when data are scarce, and higher computational and memory demands. A well-designed DNN balances depth, width, regularization, and data availability to achieve robust performance. In modern practice, architectures are paired with specialized training techniques and transfer learning to unlock performance with practical data budgets.

In practice, successful DNN deployment relies on a combination of architectural choices, training techniques, and data strategies. Modern deep models leverage normalization and regularization to stabilize training, optimization algorithms to accelerate convergence, and data-centric approaches to maximize information content. The following characteristics are central to DNN design and commonly seen in industry deployments:

- Input and output structure that aligns with the task (e.g., image pixel tensors, tokenized text sequences, or scaled tabular features) and an objective that matches business metrics.

- Multiple hidden layers enabling hierarchical feature extraction from simple signals to complex representations.

- Activation functions such as ReLU, Leaky ReLU, tanh, and sigmoid, chosen for gradient behavior, sparsity, and task suitability.

- Loss functions tailored to the objective, including cross-entropy for classification, mean-squared error for regression, and specialized losses for imbalanced problems.

- Optimization algorithms such as stochastic gradient descent, Adam, or RMSprop, with learning rate schedules and momentum to improve convergence.

- Regularization and training techniques like dropout, weight decay (L2), batch normalization, and data augmentation to improve generalization and stability.
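
For reference, the activation and loss functions named in the list above can be written in a few lines each. This is a plain-Python sketch for scalar inputs, with function names chosen here for illustration; frameworks provide vectorized, numerically hardened versions.

```python
import math


def relu(z):
    return max(0.0, z)


def leaky_relu(z, slope=0.01):
    # Keeps a small gradient for negative inputs instead of a hard zero.
    return z if z > 0 else slope * z


def sigmoid(z):
    # Branch on the sign so math.exp never receives a large positive argument.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def mean_squared_error(targets, predictions):
    return sum((t - p) ** 2 for t, p in zip(targets, predictions)) / len(targets)


def binary_cross_entropy(targets, probabilities, eps=1e-12):
    total = 0.0
    for t, p in zip(targets, probabilities):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total -= t * math.log(p) + (1 - t) * math.log(1.0 - p)
    return total / len(targets)


print(relu(-2.0), leaky_relu(-2.0), sigmoid(0.0), math.tanh(0.0))
print(mean_squared_error([1.0, 2.0], [1.0, 4.0]))
print(binary_cross_entropy([1, 0], [0.9, 0.2]))
```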

Domains that frequently benefit from DNNs include computer vision, natural language processing, and predictive analytics on large tabular datasets. Business teams often pair DNNs with transfer learning, pretraining on large corpora, and domain-specific fine-tuning to accelerate deployment while mitigating data limitations. In production, engineers monitor latency, throughput, and model drift, and they implement robust versioning and rollback capabilities to manage risk.

Recurrent Neural Networks (RNNs)

Recurrent neural networks are designed to handle sequential or time-dependent data where the current output depends on previous inputs. By maintaining a hidden state that evolves as each element of the sequence is observed, RNNs can model patterns in language, speech, time series, and other ordered data. The temporal aspect enables the model to capture context, dependencies, and evolving trends, supporting applications such as forecasting, anomaly detection in streams, and sequence labeling tasks like part-of-speech tagging or named-entity recognition. When data exhibit clear order and timing, RNNs often provide a natural and effective modeling framework.
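
The hidden-state update can be sketched as follows. This assumes the common vanilla formulation h_t = tanh(W_x x_t + W_h h_{t-1} + b); the weights and the input sequence are made-up values for illustration, not trained parameters.

```python
import math


def rnn_step(x, h_prev, w_x, w_h, b):
    """One vanilla RNN step: h_t = tanh(W_x x_t + W_h h_{t-1} + b)."""
    h_new = []
    for i in range(len(h_prev)):
        z = b[i]
        z += sum(w_x[i][j] * x[j] for j in range(len(x)))
        z += sum(w_h[i][k] * h_prev[k] for k in range(len(h_prev)))
        h_new.append(math.tanh(z))
    return h_new


def run_rnn(sequence, w_x, w_h, b):
    """Feed a sequence through the cell, returning the hidden state per step."""
    h = [0.0] * len(b)  # initial hidden state
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, b)
        states.append(h)
    return states


# Hypothetical weights: 1 input feature, 2 hidden units.
w_x = [[0.5], [-0.3]]
w_h = [[0.1, 0.2], [0.0, 0.4]]
b = [0.0, 0.1]
sequence = [[1.0], [0.0], [-1.0]]

states = run_rnn(sequence, w_x, w_h, b)
reversed_states = run_rnn(sequence[::-1], w_x, w_h, b)
print(states[-1])
print(reversed_states[-1])  # differs: the final state depends on input order
```

Because each state feeds into the next, the same inputs presented in a different order produce a different final state, which is what lets the model capture context.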

However, vanilla recurrent architectures can struggle with long-range dependencies because gradients can vanish or explode during backpropagation through many time steps. This challenge has driven the development of gated architectures that regulate information flow, such as Long Short-Term Memory (LSTM) networks and Gated Recurrent Units (GRU). These designs introduce gating mechanisms (and, in the LSTM, a dedicated memory cell) that control which information is kept and which is discarded, enabling more stable training and improved performance on complex sequences. In practice, RNNs are widely used for natural language processing, speech recognition, and time-series analytics where preserving context over time is crucial. The three main variants are:

- Vanilla Recurrent Neural Networks (RNNs): simple recurrent units with a single hidden state, suitable for short sequences or lightweight workloads, but limited in capturing long-range dependencies.

- Long Short-Term Memory (LSTM): memory cells with input, forget, and output gates that retain information over longer horizons and manage information flow with greater granularity.

- Gated Recurrent Unit (GRU): a streamlined gate-based variant that often achieves competitive results with fewer parameters and faster inference, making it attractive for production pipelines with latency constraints.
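
The vanishing and exploding gradient problem that motivates the gated variants can be seen numerically in a one-unit recurrence. The sketch below is a simplified scalar model (an assumption for illustration, not a full backpropagation-through-time implementation): it multiplies the per-step derivatives to obtain the sensitivity of the last hidden state to the initial one.

```python
import math


def gradient_through_time(w, inputs, use_tanh=True):
    """Return d h_T / d h_0 for the scalar recurrence h_t = f(w * h_{t-1} + x_t)."""
    h, grad = 0.0, 1.0
    for x in inputs:
        z = w * h + x
        if use_tanh:
            h = math.tanh(z)
            local = w * (1.0 - h * h)  # chain rule: d tanh(z)/dz = 1 - tanh(z)^2
        else:
            h = z                      # identity activation
            local = w
        grad *= local                  # one factor per time step
    return grad


inputs = [0.1] * 50  # 50 time steps of a constant input
vanishing = gradient_through_time(0.5, inputs)                  # |w| < 1
exploding = gradient_through_time(1.5, inputs, use_tanh=False)  # |w| > 1
print(vanishing, exploding)
```

With a recurrent weight below 1 the product shrinks toward zero over 50 steps, and with a weight above 1 (shown here with an identity activation) it grows into the hundreds of millions. Gates give the network a way to keep this product closer to 1 along the paths that carry long-term information.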

In business contexts, RNNs are commonly applied to forecasting, demand planning with seasonal patterns, language modeling, and speech-based interfaces. They can be deployed as standalone models or integrated into larger architectures that fuse sequential reasoning with static features, enabling more nuanced analyses of time-dependent behavior and user journeys.

Graph Neural Networks (GNNs)

Graph neural networks operate on graph-structured data, where entities are represented as nodes and relationships as edges. Unlike conventional neural networks that assume fixed input size and regular structure, GNNs propagate information across a node’s neighborhood through multiple iterations, updating node representations with messages from neighbors. This message-passing paradigm allows the model to capture both local structure and global graph properties, enabling powerful reasoning about relational data. GNNs are particularly adept at representing complex interactions and dependencies that are not easily captured by flat feature vectors.

Key advantages of GNNs include their ability to generalize across graphs of varying sizes and shapes, their capacity to incorporate relational context, and their flexibility in handling node-level or graph-level predictions. Typical architectures combine several rounds of neighborhood aggregation with a pooling or readout step to produce a graph-level representation for classification or regression, or to generate node-wise scores for downstream tasks. In many business settings, graph-aware reasoning unlocks value when relationships drive outcomes, such as in network analysis or product recommendation pipelines that depend on user-item interactions. A typical GNN pipeline follows these steps:

- Initialize node representations from raw features or attributes (e.g., atom types in chemistry, user metadata in social graphs).

- Perform several rounds of message passing to aggregate information from neighbors and update node states, enabling context-aware representations.

- Compute a readout or pooling operation to obtain a graph-level or global representation for tasks like classification or regression.

- Apply a task-specific head (e.g., classifier or regressor) to generate final predictions and quantify uncertainty where possible.
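
The four steps above can be sketched on a three-node path graph. This is a deliberately stripped-down illustration: it uses unweighted mean aggregation with no learned parameters or nonlinearity in the message-passing rounds, and the graph, features, and head weights are made-up values.

```python
def message_passing_round(features, neighbors):
    """Set each node's state to the mean of its own and its neighbors' vectors."""
    updated = {}
    for node, own in features.items():
        group = [own] + [features[n] for n in neighbors[node]]
        updated[node] = [
            sum(vec[d] for vec in group) / len(group) for d in range(len(own))
        ]
    return updated


def mean_readout(features):
    """Pool all node vectors into a single graph-level vector."""
    vectors = list(features.values())
    return [sum(v[d] for v in vectors) / len(vectors) for d in range(len(vectors[0]))]


def linear_head(graph_vector, weights, bias):
    """Task-specific head: a single linear score for the whole graph."""
    return sum(w * g for w, g in zip(weights, graph_vector)) + bias


# Step 1: initialize node features on the path graph a - b - c.
neighbors = {"a": ["b"], "b": ["a", "c"], "c": ["b"]}
features = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [0.0, 0.0]}

# Step 2: two rounds of message passing.
one_round = message_passing_round(features, neighbors)
two_rounds = message_passing_round(one_round, neighbors)

# Steps 3 and 4: readout, then a hypothetical linear head.
graph_vector = mean_readout(two_rounds)
score = linear_head(graph_vector, weights=[1.0, -1.0], bias=0.0)
print(one_round["c"], two_rounds["c"], score)
```

After one round, node c has only seen its direct neighbor b; after two rounds, its first feature becomes nonzero because information from node a has traveled two hops. The number of rounds therefore sets how far each node can "see" in the graph.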

GNNs have found adoption in drug discovery, social analytics, recommender systems, fraud detection, and network optimization, where relational structure is central to the decision problem. They pair well with existing data pipelines that include feature engineering and graph construction, enabling end-to-end modeling from raw graph data to actionable business insights. As graph data become more prevalent, practitioners are refining training strategies, scalability techniques, and interpretability methods to ensure these models deliver reliable results in production environments.

Practical considerations for deploying neural networks in business

Choosing between DNNs, RNNs, and GNNs depends on the data modality, the problem objective, and the deployment constraints. In business settings, teams often start with a baseline model and iteratively move toward architectures that balance accuracy, latency, memory usage, and maintainability. A typical pipeline includes data collection and labeling, preprocessing and feature engineering, model selection, training and validation, and deployment with monitoring for drift and performance degradation. Sound experimentation practices, clear success criteria, and stakeholder alignment are essential to translate model improvements into measurable business impact.

Beyond model selection, successful deployment requires attention to data governance, security, and oversight of model bias and explainability. Operational concerns such as versioning, infrastructure readiness, and scalable inference are critical when moving from prototype to production. Organizations increasingly rely on MLOps practices to manage model life cycles, automate testing, maintain traceability, and ensure reliable rollouts. In practice, teams should plan for data quality checks, monitoring of real-world performance, and a robust rollback plan should a model underperform after deployment.

What is a neural network in simple terms?

A neural network is a computational model that learns to map inputs to outputs by adjusting the strengths of connections between simple processing units. Through many iterations on data, the network identifies patterns and relationships that enable it to predict or classify new examples. In business terms, it acts as a flexible predictor that improves as more labeled data becomes available and as the model is refined.

How do DNNs, RNNs, and GNNs differ?

Deep Neural Networks (DNNs) handle fixed-size input data and learn hierarchical representations through many hidden layers. Recurrent Neural Networks (RNNs) are designed for sequences and time-dependent data, maintaining state across steps to capture temporal patterns. Graph Neural Networks (GNNs) operate on graph-structured data, propagating information along edges to reflect relationships among entities. Each family is optimized for its data modality and often appears in distinct business use cases.

What data types are best for each network type?

DNNs excel with large tabular, image, or audio data where structure can be represented in fixed-size tensors. RNNs are strongest when data are sequential, such as time series, text, or audio streams. GNNs shine with relational data that naturally form graphs, including social networks, molecular graphs, or infrastructure networks, where the relationships drive outcomes.

What are common training challenges and how are they addressed?

Common challenges include overfitting, large data requirements, and training instability. Techniques such as regularization, early stopping, and data augmentation help prevent overfitting; modern optimizers and normalization strategies improve convergence; specialized architectures like LSTMs and GRUs mitigate vanishing gradients in sequence models; and graph normalization and careful graph construction help stabilize GNN training. Evaluation on held-out data and ongoing monitoring are essential to ensure robust performance in production.
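
As one example of these techniques, early stopping can be expressed as a small rule over the validation loss recorded after each epoch. The sketch below assumes a patience-based rule, which is a common choice; the function name, parameters, and loss values are illustrative.

```python
def early_stopping_epoch(validation_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a list of per-epoch validation losses.

    Training stops once the loss has failed to improve by more than
    min_delta for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(validation_losses):
        if loss < best_loss - min_delta:
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch, best_epoch
    # Ran out of epochs before the patience budget was exhausted.
    return len(validation_losses) - 1, best_epoch


losses = [0.90, 0.70, 0.60, 0.58, 0.59, 0.61, 0.60, 0.65]
stop_epoch, best_epoch = early_stopping_epoch(losses, patience=3)
print(stop_epoch, best_epoch)  # stops at epoch 6; best weights are from epoch 3
```

In a real training loop the model checkpoint from the best epoch would be restored after stopping, so the deployed weights are the ones that generalized best on validation data.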

How can I start learning and applying these networks?

Begin with foundational coursework on neural networks, followed by hands-on practice using standard frameworks, datasets, and tutorials. Start with a simple DNN on a familiar tabular or image task, then experiment with LSTM/GRU for sequences and finally explore GNNs with graph-structured data. Focus on understanding data preparation, model evaluation, and deployment considerations such as inference latency and monitoring in production.