
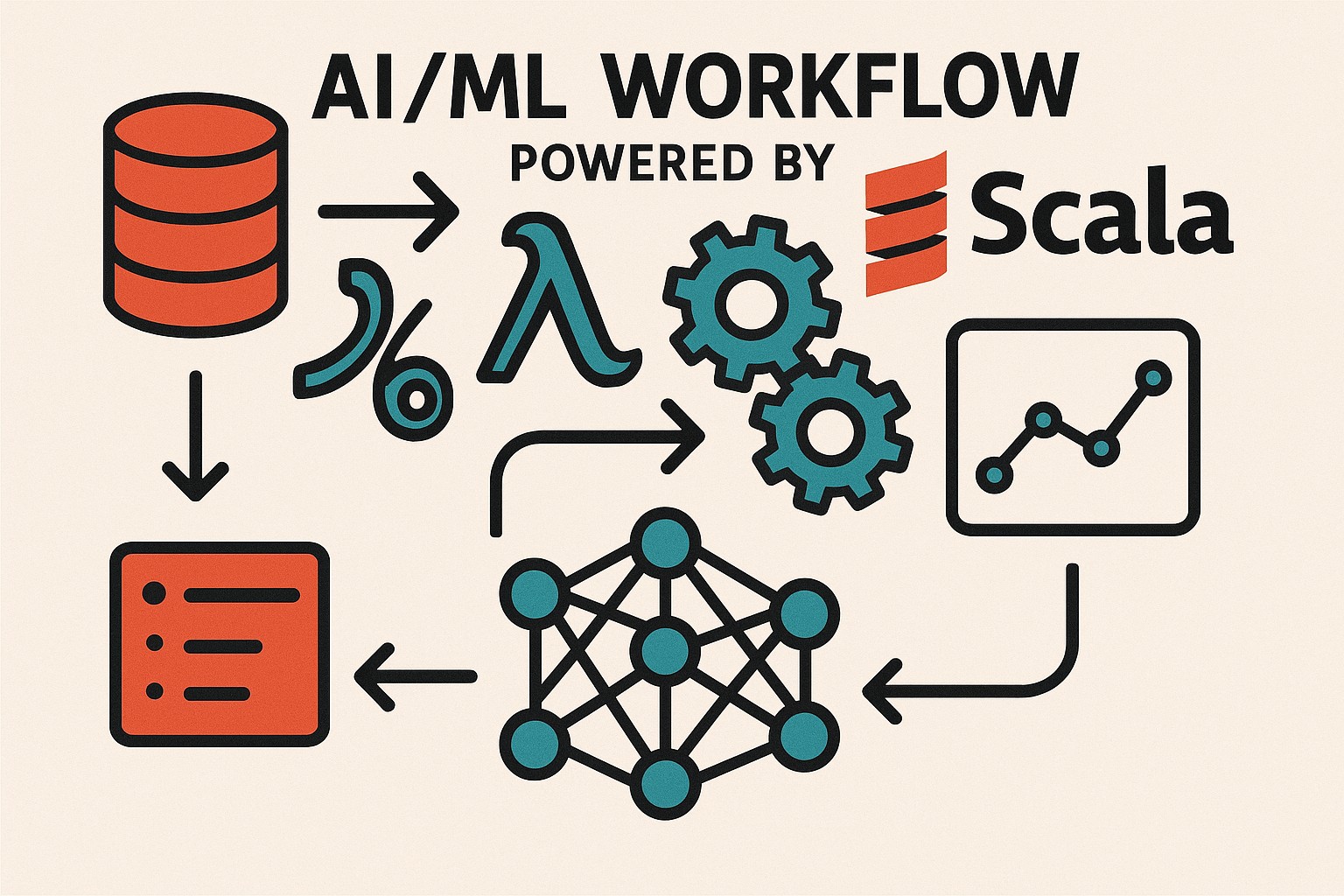

Scala for AI/ML: How Scala is Used in Data Science


Scala as a Foundation for AI/ML in Data Science

Scala sits at the intersection of safety, expressiveness, and performance, making it a compelling foundation for AI and ML within data science. By compiling to the Java Virtual Machine, Scala inherits mature tooling, a vast ecosystem, and reliable performance characteristics that are essential for production-grade ML workflows. At the same time, its hybrid object-functional design lets developers express data transformations and ML workflows in concise, composable ways, which translates to faster prototyping and clearer code for complex pipelines. In AI projects, this combination helps teams move from experimental notebooks to scalable, maintainable systems with less friction.

The language’s emphasis on strong typing and immutability reduces the risk of runtime surprises as data volumes grow and models evolve. Teams can encode feature schemas, data contracts, and model interfaces in a way that enforces correctness across distributed components. This is particularly valuable in data pipelines where subtle data drift and schema changes can cascade into degraded model performance. Because of its JVM roots, Scala also benefits from decades of optimization work, mature garbage collection, and robust deployment practices that enterprises rely on for mission-critical ML services.
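As an illustration of encoding a data contract in types, here is a minimal plain-Scala sketch (no Spark required). It assumes a hypothetical churn-model feature record, `CustomerFeatures`, and a hypothetical `validateFeatures` helper; the field names and rules are illustrative, not part of any library. Invalid input is collected as a list of errors instead of silently reaching the model.

```scala
// Hypothetical feature record for a churn model; field names are illustrative.
final case class CustomerFeatures(tenureMonths: Int, monthlySpend: Double, supportTickets: Int)

// Checks the data contract before building a record.
// Returns Left with every violation found, or Right with a valid record.
def validateFeatures(
    tenureMonths: Int,
    monthlySpend: Double,
    supportTickets: Int
): Either[List[String], CustomerFeatures] = {
  val errors = List(
    if (tenureMonths < 0) Some(s"tenureMonths must be >= 0, got $tenureMonths") else None,
    if (monthlySpend.isNaN || monthlySpend < 0) Some(s"monthlySpend must be a non-negative number, got $monthlySpend") else None,
    if (supportTickets < 0) Some(s"supportTickets must be >= 0, got $supportTickets") else None
  ).flatten

  if (errors.isEmpty) Right(CustomerFeatures(tenureMonths, monthlySpend, supportTickets))
  else Left(errors)
}

val ok  = validateFeatures(12, 49.5, 1)       // a Right holding a valid record
val bad = validateFeatures(-3, Double.NaN, 0) // a Left listing two violations
```

Because the result is an `Either`, downstream code has to handle the failure case explicitly before it can use a record, which is one way a schema change surfaces early instead of as degraded model output.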

Beyond Spark and stream processing, Scala supports a set of numerical and scientific libraries, including Breeze for linear algebra, Saddle for data-frame-like structures, and Smile for machine learning algorithms. While Python remains the most common choice for rapid experimentation, Scala’s static typing, compilation, and seamless Java interop offer a compelling alternative for teams aiming to build robust, scalable AI systems that outlast a single project.

Scala in Big Data and Data Processing

In data science, the ability to process and analyze large data streams is as important as the quality of the models. Scala shines here because Spark, the dominant big data framework, is itself written in Scala and provides a natural programming model in the language. Developers write concise code to manipulate DataFrames, run SQL queries, and build end-to-end pipelines that scale across a cluster. Scala’s type system, especially when combined with Spark’s typed Dataset API, helps catch mistakes at compile time, reducing the cost of iteration during experimentation and productionization.

- Apache Spark

- Apache Flink

- Hadoop ecosystem components (HDFS, YARN, MapReduce)

Within Spark, the Scala API exposes the full range of Spark MLlib, Structured Streaming, and the DataFrame/Dataset APIs. Flink offers low-latency, stateful stream processing for AI workloads; note that Flink deprecated its dedicated Scala APIs starting with version 1.17, so Scala teams now typically call Flink’s Java API directly. The broader Hadoop ecosystem remains relevant for storage and batch processing, and Scala tooling helps integrate these components with modern ML pipelines. Enterprises often adopt this stack to unify data engineering and data science under a single language and deployment model, reducing context switching and enabling clearer data lineage and model retraining cycles.

Key Scala Features Driving AI Workloads

Scala’s language design emphasizes safety and expressiveness in ways that align with AI workload patterns. Static typing with type inference helps developers write generic, reusable components for feature extraction, normalization, and model evaluation, without sacrificing readability. Pattern matching makes it straightforward to decompose complex data structures and implement robust data validation.
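A minimal sketch of pattern-matching-based validation, assuming a hypothetical `RawValue` type for values arriving from heterogeneous upstream sources; the type and the `toFeature` helper are illustrative, not from any library. Each variant is decomposed and either converted to a numeric feature or rejected. `toDoubleOption` requires Scala 2.13 or later.

```scala
// Hypothetical raw input variants arriving from different upstream sources.
sealed trait RawValue
final case class NumericValue(value: Double) extends RawValue
final case class TextValue(value: String) extends RawValue
case object MissingValue extends RawValue

// Decompose each variant and map it to a cleaned numeric feature,
// or None if the value is unusable.
def toFeature(raw: RawValue): Option[Double] = raw match {
  case NumericValue(v) if v.isNaN || v.isInfinite => None     // reject non-finite numbers
  case NumericValue(v)                            => Some(v)
  case TextValue(s)                               => s.trim.toDoubleOption // parse numeric text
  case MissingValue                               => None
}
```

Marking the trait `sealed` lets the compiler warn about a `match` that forgets a variant, which helps when a new input source adds another case.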

- Strong typing and type inference

- Functional programming and immutability

- JVM ecosystem and extensive libraries

Functional programming encourages building pipelines as a series of composable transformations. Immutable data structures help prevent subtle bugs in concurrent data processing, especially when streaming data is involved. In practice, this translates into more predictable scaling behavior and easier reasoning about how data flows through ML stages. Interoperability with Java and access to the JVM toolchain means teams can draw on mature libraries for numeric computation, logging, security, and deployment, without rewriting critical components. This often shortens time-to-delivery for AI features that must operate inside enterprise data platforms.
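The composable-transformation style can be sketched with plain Scala functions over immutable collections. The `Stage` alias, the clipping bounds, and the min-max scaling step are illustrative assumptions rather than part of any ML library; the point is that each stage returns a new `Vector` and never mutates its input.

```scala
// Each stage is a pure function from an immutable Vector to a new Vector.
type Stage = Vector[Double] => Vector[Double]

// Drop values outside a plausible range (the bounds are illustrative).
def clip(lo: Double, hi: Double): Stage = xs => xs.filter(x => x >= lo && x <= hi)

// Rescale values to [0, 1].
val minMaxScale: Stage = xs => {
  if (xs.isEmpty) xs
  else {
    val mn = xs.min
    val mx = xs.max
    // Constant input has no spread; map every value to 0.0 instead of dividing by zero.
    if (mx == mn) xs.map(_ => 0.0)
    else xs.map(x => (x - mn) / (mx - mn))
  }
}

// Compose stages left to right; andThen builds a new function without running anything yet.
val pipeline: Stage = clip(0.0, 100.0).andThen(minMaxScale)

val raw = Vector(10.0, 250.0, 30.0, -5.0, 50.0)
val scaled = pipeline(raw) // Vector(0.0, 0.5, 1.0)
```

Because `raw` is never modified, the same input can be fed to several pipelines, including from concurrent threads, without defensive copying.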

Why Enterprises Choose Scala for AI Projects

Enterprises frequently gravitate toward Scala for AI initiatives because it fits well with established JVM-based architectures. The language’s combination of expressive syntax and robust tooling helps teams maintain large, long-lived codebases that support data ingestion, cleaning, and model deployment across environments. In regulated domains, this reliability matters as much as raw performance.

- Seamless Java interoperability

- Rich ecosystem of libraries and frameworks

- Clear expression of data pipelines and ML workflows

The ability to reuse Java components and deploy on existing infrastructure reduces the cost of adoption. Scala also offers a strong type system that catches many errors at compile time, improving stability for production ML services. For organizations focused on streaming data, the language helps express both stream processing and model inference in a coherent, auditable form. From a governance perspective, teams value the reproducibility and traceability that come from explicit data pipelines and typed schemas. When ML features are part of a larger data platform, Scala’s compatibility with existing data governance and observability tooling becomes a strategic advantage.
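A small sketch of Java interoperability: Java collections and `java.time` types are used directly from Scala, and `scala.jdk.CollectionConverters` (Scala 2.13+) converts between Java and Scala collections. `loadFeatureNames` is a hypothetical stand-in for an existing in-house Java component, and `isStale` is an illustrative helper.

```scala
import java.time.{Duration, Instant}
import java.util.{ArrayList => JArrayList}
import scala.jdk.CollectionConverters._

// Hypothetical stand-in for an existing Java component that returns a java.util.List.
def loadFeatureNames(): java.util.List[String] = {
  val names = new JArrayList[String]()
  names.add("tenure_months")
  names.add("monthly_spend")
  names
}

// Convert to an immutable Scala List with the standard converters.
val featureNames: List[String] = loadFeatureNames().asScala.toList

// java.time types are used as-is, e.g. to check whether a feature snapshot is too old.
def isStale(snapshotAt: Instant, now: Instant, maxAge: Duration): Boolean =
  Duration.between(snapshotAt, now).compareTo(maxAge) > 0
```

No wrappers or bindings are needed: the Java classes are called exactly as they would be from Java, and only the collection boundary needs an explicit conversion.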

Practical Considerations: When Scala Fits AI Projects

Choosing Scala for AI work requires evaluating project scope, data scale, and team capabilities. For teams already immersed in the JVM ecosystem, adopting Scala can reduce the friction of integrating data storage, processing, and ML within a single stack. In contrast, startups or teams prioritizing rapid experimentation may lean toward more lightweight Python-based tooling for faster iteration times, especially in early prototyping.

From a production perspective, Scala shines when pipelines must be stable, maintainable, and subject to strict governance. The JVM’s mature memory management, profiling tools, and containerization support help operators manage resources in large clusters. However, compilation times in very large codebases can slow iteration, so adopting modular designs and incremental builds is important. A practical approach is to start with a clearly defined data model, establish CI/CD for model deployment, and gradually migrate critical components to Scala where it adds tangible value, such as streaming ingestion, feature pipelines, or model serving within Spark-based workloads.
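Modular builds can be sketched as an sbt multi-project definition in which a shared data-model module compiles separately from the Spark pipeline code, so a change confined to the pipeline module does not recompile the data model. The module names (`core`, `features`) and the version numbers below are illustrative assumptions; choose versions that match your cluster.

```scala
// build.sbt (sketch): module names and versions are illustrative assumptions.
ThisBuild / scalaVersion := "2.13.12"
ThisBuild / organization := "com.example"

// Shared data model: feature schemas and contracts, with no Spark dependency.
lazy val core = (project in file("core"))
  .settings(name := "ml-core")

// Spark-based feature pipelines; depends on core but compiles as its own module.
lazy val features = (project in file("features"))
  .dependsOn(core)
  .settings(
    name := "ml-features",
    // Provided: the cluster supplies Spark at runtime, so it is not bundled.
    libraryDependencies += "org.apache.spark" %% "spark-mllib" % "3.5.0" % Provided
  )

// Root project aggregates the modules so `sbt compile` or `sbt test` covers all of them.
lazy val root = (project in file("."))
  .aggregate(core, features)
```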

From Prototype to Production: Leveraging Spark MLlib and Scala Pipelines

Many AI projects move from notebook experiments to production through Spark MLlib pipelines. The Scala interface provides a fluent, statically typed API for assembling transformers and estimators, which helps teams maintain versioned, auditable pipelines as data evolves. Column names and stage parameters are still validated at runtime, when the pipeline checks its input schema. This approach also supports distributed training and inference across large datasets.

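```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.ml.Pipeline
import org.apache.spark.ml.feature.VectorAssembler
import org.apache.spark.ml.classification.LogisticRegression

val spark = SparkSession.builder()
  .appName("MLPipelineExample")
  .getOrCreate()

// inferSchema makes f1..f3 and label numeric; without it every CSV column
// is read as a string, which VectorAssembler and LogisticRegression reject.
val data = spark.read
  .format("csv")
  .option("header", "true")
  .option("inferSchema", "true")
  .load("hdfs:///data/train.csv")

// Combine the raw feature columns into a single vector column.
val assembler = new VectorAssembler()
  .setInputCols(Array("f1", "f2", "f3"))
  .setOutputCol("features")

// Classifier trained on the assembled feature vector.
val lr = new LogisticRegression()
  .setLabelCol("label")
  .setFeaturesCol("features")

// Chain the stages; fit() runs them in order and returns a PipelineModel.
val pipeline = new Pipeline().setStages(Array(assembler, lr))
val model = pipeline.fit(data)
```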
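To make concrete what a fitted binary logistic regression stage computes for each row, here is a plain-Scala sketch of the scoring rule p = σ(w·x + b). The weights used with it are made-up illustrative values, not output of the pipeline above; in Spark, the fitted values are exposed on `LogisticRegressionModel` as `coefficients` and `intercept`. `Vector` here is Scala’s immutable collection, not Spark’s `ml.linalg.Vector`.

```scala
// Standard logistic (sigmoid) function.
def sigmoid(z: Double): Double = 1.0 / (1.0 + math.exp(-z))

// Probability of the positive class for one feature vector.
// Assumes weights and features use the same column order (e.g. f1, f2, f3).
def predictProbability(weights: Vector[Double], intercept: Double, features: Vector[Double]): Double = {
  require(weights.length == features.length, "weights and features must have the same length")
  val margin = weights.zip(features).map { case (w, x) => w * x }.sum + intercept
  sigmoid(margin)
}

// Hard label using a 0.5 threshold, the default value of Spark's `threshold` param.
def predictLabel(p: Double, threshold: Double = 0.5): Double =
  if (p > threshold) 1.0 else 0.0

// Illustrative weights: margin = 0.8*1.0 - 0.5*2.0 + 0.0*5.0 = -0.2, so p is about 0.45.
val p = predictProbability(Vector(0.8, -0.5, 0.0), 0.0, Vector(1.0, 2.0, 5.0))
```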

Beyond modeling, Scala-based pipelines integrate with monitoring and governance tools to track data lineage, feature versions, and model performance in production. Organizations that require auditable ML workflows often prefer the visibility that a Spark-based stack provides, along with a common language across data engineers and data scientists.

Developer Community, Tooling, and Future Trends

The Scala ecosystem benefits from a long-running community of practitioners in data engineering, streaming, and ML engineering. Beyond Spark, Scala remains attractive for building microservices, APIs, and streaming applications with frameworks like Akka (or its Apache-licensed fork, Pekko), which complement ML workloads with low-latency, resilient concurrency. The broad ecosystem accelerates experimentation while offering mature debugging, testing, and deployment practices.

Tooling continues to mature around Scala, with improved IDE support, build tools like sbt, and better integration with cloud-native runtimes. The trend toward unified data platforms that combine batch and streaming workloads makes Scala a practical choice for teams seeking stability and scalability in AI-enabled products. Looking ahead, researchers and practitioners expect deeper integration between ML tooling and JVM-based stacks, plus stronger support for reproducibility and explainability.

FAQ

Is Scala faster than Python for AI workloads?

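In many data-processing scenarios on Spark, Scala can outperform Python, mainly when jobs rely on user-defined functions (UDFs) or low-level RDD operations: PySpark then has to serialize data between the JVM and separate Python worker processes, while Scala code runs inside the JVM and benefits from its optimizations. For pure DataFrame and SQL operations, both APIs compile to the same optimized execution plan, so performance is usually similar. While Python remains popular for rapid experimentation and offers the widest choice of machine learning libraries, production-grade streaming and batch jobs with heavy custom logic often run more efficiently in Scala. The actual performance, however, depends on data characteristics, cluster configuration, and how well the code leverages vectorized operations and distributed processing.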

How does Scala integrate with SparkML?

Scala provides the native API surface for Spark’s MLlib and its pipelines API. Developers get compile-time type checking for the code that configures feature transformers, estimators, and pipelines, along with a fluent DSL for building and tuning models. Because Spark’s core is written in Scala, Scala code calls it directly, without the Py4J bridge that PySpark uses. Column names and stage parameters are still checked at runtime, when a pipeline validates its input schema, so testing on representative data remains important during feature assembly and model fitting.

What are the trade-offs of using Scala for ML in production?

Trade-offs include a steeper learning curve for teams new to JVM-based languages, longer compile times for large codebases, and a need for JVM-focused operations knowledge. Teams must weigh these costs against benefits such as strong type guarantees, better maintainability for complex pipelines, and easier interoperability with existing data infrastructure. In many cases, Scala delivers greater long-term stability for large-scale AI services, but adoption should align with organizational readiness.

Which projects benefit most from Scala-based ML pipelines?

Projects with substantial data engineering components, streaming data, or integration with existing JVM services typically benefit the most. If a team relies on Spark for data processing, or requires a single language across data ingestion, feature engineering, and model serving, Scala-based pipelines can reduce context switching and improve traceability. Enterprise contexts that prize compliance, governance, and reproducibility also gain from the structured approach Scala encourages.