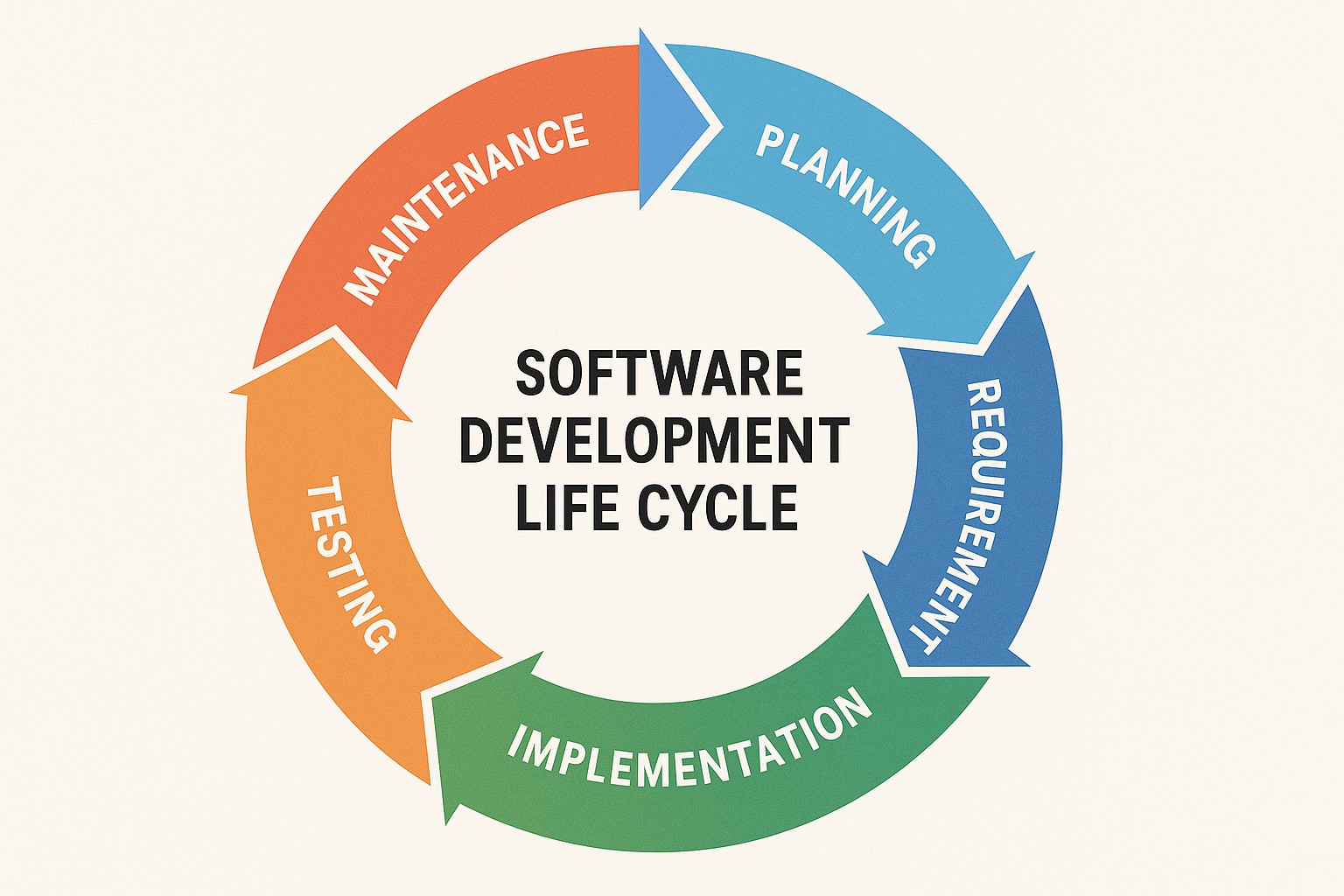

Software Development Life Cycle (SDLC) Phases Explained

Planning and Analysis

At the outset of any software initiative, planning and analysis establish the foundation for successful delivery. This phase translates business objectives into concrete, actionable plans and sets boundaries that guide every subsequent activity. It involves close collaboration with stakeholders to articulate goals, define success criteria, and determine how the project’s outcomes will be measured in terms of value, risk, and return on investment. A disciplined planning process helps ensure that scarce resources (time, budget, and talent) are allocated to the features and capabilities that drive the greatest business impact, while also creating a framework for rigorous decision-making as requirements evolve.

During planning and analysis, teams typically conduct high-level feasibility assessments, stakeholder analysis, and requirements discovery. They establish a project charter, outline the scope, and identify dependencies, constraints, and regulatory considerations that may affect design choices. A core objective is to reduce ambiguity by documenting each requirement so that it is clear, traceable to a business objective, and testable. This includes defining non-functional requirements such as performance, security, accessibility, and maintainability, which often drive architecture and platform decisions early on. Effective planning also encompasses identifying risks and planning their mitigation, establishing governance structures, and agreeing on a review cadence that keeps sponsors informed and engaged throughout the lifecycle.

- Define objectives and scope

- Identify stakeholders and roles

- Gather requirements and constraints

- Assess risks and feasibility
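
To make "assess risks and feasibility" concrete, a team might keep a lightweight risk register and rank entries by a likelihood × impact score. The sketch below is illustrative only: the `Risk` record, the 1 to 5 scales, and the sample entries are assumptions, not part of any particular standard, and real registers usually also track owners and mitigation status.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    # Hypothetical risk record; the fields and scales are illustrative assumptions.
    description: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    def __post_init__(self) -> None:
        # Reject values outside the assumed 1..5 scale.
        for name in ("likelihood", "impact"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        # Common heuristic: exposure = likelihood x impact (range 1..25).
        return self.likelihood * self.impact


def prioritize(risks: list[Risk]) -> list[Risk]:
    """Return risks ordered from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


# Hypothetical sample entries for illustration.
register = [
    Risk("Legacy API lacks documentation", likelihood=4, impact=3),
    Risk("Key engineer unavailable", likelihood=2, impact=4),
    Risk("Data residency regulation applies", likelihood=3, impact=5),
]

for risk in prioritize(register):
    print(f"{risk.score:>2}  {risk.description}")
```

With these sample entries, the data-residency risk (score 15) is listed first, so mitigation effort goes there before the lower-scoring items.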

Design

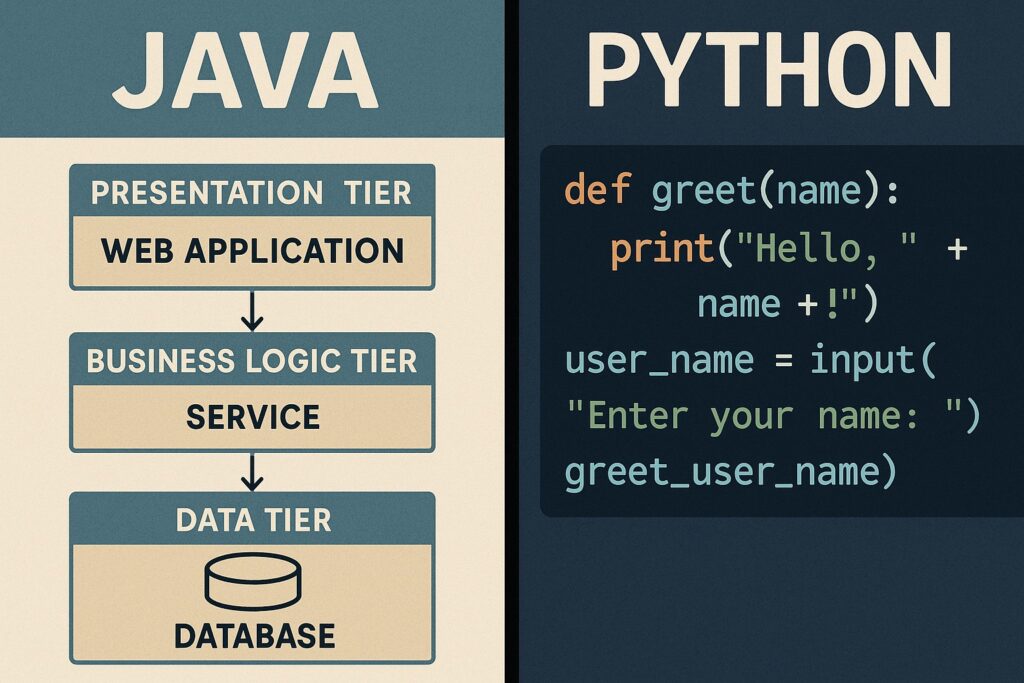

Design translates the expressed requirements into a blueprint for how the system will be constructed. It balances performance, scalability, security, and maintainability while considering the organization’s existing technology portfolio and constraints. A well-executed design phase produces clear specifications, architectural decisions, and modeling artifacts that enable developers to implement features with a consistent approach. The emphasis is on creating modular, loosely coupled components, well-defined interfaces, and a security-by-design mindset that anticipates potential threats and mitigations before code is written.
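
One way to express well-defined interfaces and loose coupling in code is to have a component depend on an abstract contract rather than on a concrete class. The sketch below uses Python's `typing.Protocol` for structural typing; `UserRepository`, `InMemoryUserRepository`, and `greet_user` are hypothetical names chosen for illustration.

```python
from typing import Optional, Protocol


class UserRepository(Protocol):
    # Hypothetical interface: the contract that callers depend on.
    def find_name(self, user_id: int) -> Optional[str]: ...


class InMemoryUserRepository:
    # One concrete implementation; a database-backed class could satisfy
    # the same Protocol without any change to its callers.
    def __init__(self, names: dict[int, str]) -> None:
        self._names = dict(names)

    def find_name(self, user_id: int) -> Optional[str]:
        return self._names.get(user_id)


def greet_user(repo: UserRepository, user_id: int) -> str:
    # Depends only on the interface, not on how names are stored.
    name = repo.find_name(user_id)
    return f"Hello, {name}!" if name is not None else "Hello, guest!"


repo = InMemoryUserRepository({1: "Ada"})
print(greet_user(repo, 1))  # Hello, Ada!
print(greet_user(repo, 2))  # Hello, guest!
```

Because the contract is structural, a test can pass any object with a matching `find_name` method, which keeps components independently testable.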

Effective design requires collaboration across disciplines (systems architecture, UI/UX, data modeling, API design, and operations) so that differences in perspective are reconciled. Design reviews and formal sign-offs help ensure that the chosen approach aligns with business objectives, compliance requirements, and long-term maintainability. Models such as component diagrams, data schemas, and sequence diagrams provide a shared vocabulary for engineers, testers, and operators. The design phase also prioritizes reuse and standardization, encouraging the adoption of established patterns and frameworks that reduce risk and accelerate delivery, while preserving the flexibility to adapt as the domain evolves.

| Artifact | Purpose | Example |
|---|---|---|
| Architectural Diagram | Communicate system structure | Layered or microservices architecture |
| Data Model | Define data entities and relations | ER diagram or JSON schema |
| API Design | Specify interfaces and contracts | RESTful endpoints with versioning |
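
The table's API design row gives RESTful endpoints with versioning as an example. The framework-free sketch below shows the idea: a version prefix in the path selects a handler, so a v2 response shape can change without breaking v1 clients. The paths, handlers, and response fields are hypothetical; a production service would normally rely on a web framework's router.

```python
from typing import Callable

Handler = Callable[[int], dict]


def get_user_v1(user_id: int) -> dict:
    # Hypothetical v1 contract: a flat "name" field.
    return {"id": user_id, "name": "Ada Lovelace"}


def get_user_v2(user_id: int) -> dict:
    # Hypothetical v2 contract splits the name; v1 clients are unaffected.
    return {"id": user_id, "name": {"given": "Ada", "family": "Lovelace"}}


# Route table keyed by (version, resource).
ROUTES: dict[tuple[str, str], Handler] = {
    ("v1", "users"): get_user_v1,
    ("v2", "users"): get_user_v2,
}


def dispatch(path: str) -> dict:
    """Route paths shaped like '/v1/users/42' to the matching handler."""
    parts = path.strip("/").split("/")
    if len(parts) != 3 or not parts[2].isdigit():
        raise ValueError(f"Unsupported path: {path}")
    version, resource, raw_id = parts
    handler = ROUTES.get((version, resource))
    if handler is None:
        raise LookupError(f"No route for {version}/{resource}")
    return handler(int(raw_id))


print(dispatch("/v1/users/42"))
print(dispatch("/v2/users/42"))
```

Keeping old versions routable while new ones are introduced is what lets clients migrate on their own schedule.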

Implementation

Implementation, or development, is where ideas become tangible software. It encompasses coding, integration of components, and the rigorous pursuit of quality through practices that promote reliability and maintainability. Teams apply established standards for naming, testing, and documentation, while leveraging version control, continuous integration, and automated checks to catch issues early. A culture of disciplined engineering—pair programming, code reviews, and adherence to design patterns—helps reduce defects and accelerates onboarding for new contributors. Security-conscious coding, error handling, and robust input validation are woven into the fabric of this work, so that risk is managed proactively rather than discovered after deployment.

Within this phase, developers translate designs into working modules, refactor as needed to accommodate changes in requirements or constraints, and integrate with external systems. Environment parity (keeping development, staging, and production as similar as possible) minimizes surprises when features move toward release. Teams often adopt a branching strategy, feature toggles, and automated builds to maintain velocity while preserving the ability to isolate features and roll back if necessary. The following snippet illustrates a clean separation of concerns, with input validation kept apart from business logic, underscoring the importance of clear interfaces between components. Here `perform_business_logic` is a hypothetical placeholder for domain-specific processing.

```python
def perform_business_logic(user_id, request):
    # Hypothetical placeholder for the real domain logic.
    return {"user_id": user_id, "status": "processed"}


def process_user_request(request):
    # Basic input validation: reject anything that is not a dict
    if not isinstance(request, dict):
        raise ValueError("Invalid request format")

    user_id = request.get("user_id")
    # bool is a subclass of int in Python, so exclude it explicitly
    if isinstance(user_id, bool) or not isinstance(user_id, int) or user_id <= 0:
        raise ValueError("Invalid user_id")

    # Core processing logic, kept separate from validation
    result = perform_business_logic(user_id, request)
    return result
```
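
Feature toggles, listed earlier in this section as a way to isolate features and roll back if necessary, can be as small as a set of named flags read from configuration. The sketch below assumes flags arrive in a `FEATURE_FLAGS` environment variable as comma-separated names; that variable name, the `bulk_discount` flag, and the discount rule are hypothetical.

```python
import os
from collections.abc import Mapping


def enabled_features(environ: Mapping[str, str] = os.environ) -> set[str]:
    # Hypothetical convention: FEATURE_FLAGS holds comma-separated names,
    # e.g. "bulk_discount,beta_search". Unset means no flags are enabled.
    raw = environ.get("FEATURE_FLAGS", "")
    return {name.strip() for name in raw.split(",") if name.strip()}


def checkout_total(prices: list[float], features: set[str]) -> float:
    # Hypothetical feature: 10% off orders of ten or more items.
    total = sum(prices)
    if "bulk_discount" in features and len(prices) >= 10:
        total *= 0.9  # new behavior stays dark until the flag is enabled
    return round(total, 2)


flags = enabled_features({"FEATURE_FLAGS": "bulk_discount"})
print(checkout_total([5.0] * 10, flags))  # 45.0 (toggle on)
print(checkout_total([5.0] * 10, set()))  # 50.0 (toggle off)
```

Passing the environment as a parameter keeps the flag lookup testable without mutating the real process environment.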

Beyond code, implementation includes setting up the build and deployment pipelines, defining quality gates, and documenting how new code will be integrated into the broader system. This ensures that the software not only meets functional requirements but also aligns with performance targets, security policies, and operational readiness. A disciplined approach to change management—tracking what was changed, by whom, and why—facilitates accountability, reduces risk, and smooths the path to stable releases.
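
Quality gates are usually automated pass or fail checks that a pipeline runs before code is merged or promoted. The sketch below assumes a hypothetical `BuildReport` produced by a CI job and an 80% minimum line coverage; both the fields and the threshold are illustrative choices a team would set for itself.

```python
from dataclasses import dataclass


@dataclass
class BuildReport:
    # Hypothetical summary a CI job might produce.
    tests_failed: int
    line_coverage: float  # fraction between 0.0 and 1.0
    critical_vulnerabilities: int


def evaluate_gate(report: BuildReport, min_coverage: float = 0.80) -> list[str]:
    """Return the reasons the gate fails; an empty list means it passes."""
    failures: list[str] = []
    if report.tests_failed > 0:
        failures.append(f"{report.tests_failed} test(s) failed")
    if report.line_coverage < min_coverage:
        failures.append(
            f"coverage {report.line_coverage:.0%} is below {min_coverage:.0%}"
        )
    if report.critical_vulnerabilities > 0:
        failures.append("critical vulnerabilities found")
    return failures


report = BuildReport(tests_failed=0, line_coverage=0.72, critical_vulnerabilities=0)
problems = evaluate_gate(report)
print("PASS" if not problems else "FAIL: " + "; ".join(problems))
# FAIL: coverage 72% is below 80%
```

Returning every failure reason, rather than stopping at the first, gives developers a complete picture of what must change before the build can be promoted.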

Testing

Testing is the primary mechanism for validating that the software meets its requirements and behaves correctly under a variety of conditions. A comprehensive testing strategy covers functional correctness, reliability, performance, security, and compatibility across environments. The emphasis is on early and continuous feedback to the development teams, enabling rapid iteration and risk-based prioritization of fixes and enhancements. By aligning test cases with user stories and acceptance criteria, teams ensure traceability from requirements to test results, which supports confidence in the product and informs decision-making at release time.

To organize testing activities, teams typically structure their approach around well-defined test types, environments, and automation coverage. This discipline helps reduce the likelihood of defects escaping to production and supports predictable release cadences. Testing also acts as a safeguard for user experience and regulatory compliance, ensuring that interfaces behave consistently and data handling meets established privacy and governance standards. The aim is not only to find defects but to understand root causes, reproduce conditions, and provide actionable information to developers and stakeholders for continuous improvement.

- Unit testing

- Integration testing

- System testing

- Acceptance testing
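
As an illustration of the first level in the list above, a unit test exercises one function in isolation. The sketch uses Python's standard `unittest` module against a small, hypothetical `parse_user_id` helper; integration, system, and acceptance tests would exercise progressively larger slices of the system.

```python
import unittest


def parse_user_id(raw: str) -> int:
    # Hypothetical helper under test: accepts only positive integers.
    value = int(raw)  # raises ValueError for non-numeric input
    if value <= 0:
        raise ValueError("user_id must be positive")
    return value


class ParseUserIdTests(unittest.TestCase):
    def test_accepts_positive_integer(self):
        self.assertEqual(parse_user_id("42"), 42)

    def test_rejects_zero(self):
        with self.assertRaises(ValueError):
            parse_user_id("0")

    def test_rejects_non_numeric(self):
        with self.assertRaises(ValueError):
            parse_user_id("abc")


if __name__ == "__main__":
    # Run this test case directly without parsing command-line arguments.
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ParseUserIdTests)
    unittest.TextTestRunner(verbosity=2).run(suite)
```

Each test checks one behavior, so a failure points directly at the broken rule.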

Deployment

Deployment brings verified software into production where it can deliver value to users. This phase emphasizes repeatability, reliability, and controlled release management. Successful deployment relies on automation, environment parity, and clear rollback procedures to minimize risk during transitions from staging to production. Teams often adopt progressive delivery approaches—blue-green deployments, canary releases, or feature toggles—to reduce the blast radius of potential issues and to observe real-world behavior before wide exposure. Documentation around deployment steps, monitoring expectations, and rollback criteria becomes a critical reference for operators and incident responders alike.
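
Canary releases expose a new version to a small share of traffic first. A common way to keep each user's experience consistent during a canary is to assign users to buckets deterministically by hashing an identifier, as in the sketch below. The 5% default, the function names, and the choice of SHA-256 are assumptions for illustration; many load balancers and service meshes offer weighted routing without custom code.

```python
import hashlib


def canary_bucket(user_id: str, buckets: int = 100) -> int:
    # Hash the ID so the same user always lands in the same bucket across
    # processes (Python's built-in hash() of str is randomized per process).
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % buckets


def choose_version(user_id: str, canary_percent: int = 5) -> str:
    """Route roughly canary_percent% of users to the new release."""
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    return "canary" if canary_bucket(user_id) < canary_percent else "stable"


# Hypothetical user IDs used only to check the resulting traffic share.
sample = [f"user-{i}" for i in range(10_000)]
share = sum(choose_version(u) == "canary" for u in sample) / len(sample)
print(f"canary share: {share:.1%}")
```

Because a bucket below 5 is also below 10, widening the canary keeps every user who already saw the new version on it, which avoids users flipping between versions as exposure grows.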

Effective deployment also requires governance—defined approval workflows, audit trails, and alignment with regulatory requirements—so that changes do not introduce unintended consequences or compliance gaps. Operational readiness extends beyond code to include health checks, observability, and measurable service levels. By combining automation with careful monitoring, organizations can shorten lead times, improve stability, and enable faster response when incidents occur. The deployment discipline thus connects development outcomes with the broader business objective of delivering dependable software that customers trust.
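
Rollback criteria are easier to apply consistently when they are written down as explicit thresholds. The sketch below compares a release candidate's observed error rate and p95 latency against a baseline; the `Metrics` record and the tolerances (error rate at most 1 percentage point above baseline, latency at most 20% slower) are hypothetical values a team would tune to its own service levels.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metrics:
    # Hypothetical snapshot gathered from monitoring during a release.
    requests: int
    errors: int
    p95_latency_ms: float

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def should_roll_back(
    baseline: Metrics,
    candidate: Metrics,
    max_error_increase: float = 0.01,  # absolute: 1 percentage point
    max_latency_ratio: float = 1.20,   # candidate may be up to 20% slower
) -> bool:
    """Return True if the candidate release breaches either threshold."""
    error_breach = candidate.error_rate > baseline.error_rate + max_error_increase
    latency_breach = (
        candidate.p95_latency_ms > baseline.p95_latency_ms * max_latency_ratio
    )
    return error_breach or latency_breach


stable = Metrics(requests=50_000, errors=100, p95_latency_ms=180.0)
canary = Metrics(requests=2_500, errors=60, p95_latency_ms=190.0)
print("roll back" if should_roll_back(stable, canary) else "continue rollout")
# roll back (2.4% errors vs. a 0.2% baseline)
```

Encoding the decision as a function lets the same criteria drive both automated rollback and the runbook that operators follow during an incident.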

Deployment is not a single act but a carefully orchestrated sequence of steps that minimizes risk while accelerating value realization. Automation, visibility, and a clear rollback plan are essential to sustaining confidence across teams and stakeholders.

Maintenance

Maintenance is the ongoing work required to keep software useful, secure, and aligned with changing business needs after it has entered production. This phase involves monitoring performance, addressing defects, implementing enhancements, and adapting the system to evolving regulatory requirements or user expectations. A structured maintenance approach helps prevent deterioration in quality and ensures that the software continues to deliver the intended value over time. Proactive maintenance includes capacity planning, technical debt management, and refactoring efforts that improve code readability and maintainability, ultimately reducing future costs and risk.

Organizations typically establish a formal process for triaging incidents, prioritizing fixes, and scheduling updates. Regularly reviewing logs, metrics, and user feedback informs decisions about when to deploy changes and which areas of the system require refactoring or optimization. Maintenance also encompasses security hygiene—patching dependencies, updating libraries, and auditing for new vulnerabilities—so the software remains resilient in a changing threat landscape. By treating maintenance as an integral part of the lifecycle rather than an afterthought, teams sustain performance, reliability, and user satisfaction throughout the product’s life.
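
A formal triage process often comes down to ordering open incidents by severity and then by age. The sketch below keeps incidents in a priority queue built on Python's `heapq`; the severity scale (1 is most severe), the `Incident` fields, and the sample incidents are assumptions for illustration rather than any particular incident-management standard.

```python
import heapq
from dataclasses import dataclass, field
from datetime import datetime


@dataclass(order=True)
class Incident:
    # Sort key: lower severity number first, then oldest first.
    severity: int  # assumed scale: 1 (critical) to 4 (low)
    opened_at: datetime
    title: str = field(compare=False)


class TriageQueue:
    def __init__(self) -> None:
        self._heap: list[Incident] = []

    def report(self, incident: Incident) -> None:
        heapq.heappush(self._heap, incident)

    def next_to_fix(self) -> Incident:
        # Pops the highest-priority incident; raises IndexError if empty.
        return heapq.heappop(self._heap)

    def __len__(self) -> int:
        return len(self._heap)


queue = TriageQueue()
queue.report(Incident(3, datetime(2024, 5, 1, 9, 0), "Typo on settings page"))
queue.report(Incident(1, datetime(2024, 5, 1, 10, 30), "Checkout returns 500"))
queue.report(Incident(1, datetime(2024, 5, 1, 8, 15), "Login fails for SSO users"))

while queue:
    incident = queue.next_to_fix()
    print(incident.severity, incident.title)
```

The two severity-1 incidents come out first, oldest first, followed by the cosmetic issue.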

What is the SDLC and why does it matter for business outcomes?

The Software Development Life Cycle (SDLC) is a structured framework that guides the creation and ongoing improvement of software from initial concept through retirement. It matters to business outcomes because it aligns technical work with strategic goals, standardizes practices to reduce risk, and provides measurable checkpoints for value delivery, quality, and compliance. An effective SDLC helps organizations forecast effort, manage costs, and deliver features in predictable increments that satisfy stakeholders while maintaining a clear focus on user needs and business priorities.

How do you measure success across SDLC phases?

Success is measured through a combination of qualitative and quantitative indicators that span the entire lifecycle. Common metrics include cycle time (the elapsed time from starting work on an item to delivering it), defect density (defects found per unit of code size, often per thousand lines of code), test coverage (the share of code or requirements exercised by tests), deployment frequency, lead time for changes (the time from a code commit to that change running in production), and customer satisfaction. Beyond metrics, organizations assess governance adherence, risk management effectiveness, and the speed with which issues are detected and resolved in production. A balanced scorecard approach helps ensure that technical excellence, operational reliability, and business value are all accounted for in performance evaluations.
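
Several of these metrics can be computed directly from delivery records. The sketch below derives deployment frequency, median lead time for changes (commit to production), and defect density per thousand lines of code (KLOC) from a small hypothetical data set; the record format and all sample values are assumptions, and real figures would come from version control, CI/CD, and issue-tracking systems.

```python
from datetime import datetime, timedelta
from statistics import median

# Hypothetical records: (commit time, production deploy time) per change.
# Assumes each change was shipped in its own deployment.
changes = [
    (datetime(2024, 6, 3, 9, 0), datetime(2024, 6, 3, 15, 0)),
    (datetime(2024, 6, 4, 11, 0), datetime(2024, 6, 5, 11, 0)),
    (datetime(2024, 6, 6, 14, 0), datetime(2024, 6, 6, 18, 0)),
]
period_days = 7
defects_found = 6
lines_of_code = 12_000


def deployment_frequency(deploys: int, days: int) -> float:
    """Average deployments per day over the observation period."""
    return deploys / days


def median_lead_time(records: list[tuple[datetime, datetime]]) -> timedelta:
    """Median elapsed time from commit to running in production."""
    return median(deployed - committed for committed, deployed in records)


def defect_density(defects: int, loc: int) -> float:
    """Defects per thousand lines of code (KLOC)."""
    return defects / (loc / 1000)


print(f"{deployment_frequency(len(changes), period_days):.2f} deploys/day")
print(f"median lead time: {median_lead_time(changes)}")
print(f"{defect_density(defects_found, lines_of_code):.2f} defects/KLOC")
```

With the sample records this reports about 0.43 deployments per day, a median lead time of six hours, and 0.50 defects per KLOC. The median is used for lead time because a single slow change would otherwise skew an average.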

What are common challenges in SDLC adoption, and how can they be overcome?

Common challenges include unclear or continuously changing requirements, resistance to process changes, integration with legacy systems, and misalignment between development teams and business owners. To overcome these obstacles, organizations invest in clear governance, stakeholder engagement, and flexible but disciplined processes. Techniques such as incremental delivery, continuous feedback loops, automated testing, and robust change management help teams adapt to evolving needs while maintaining quality. Establishing shared goals, transparent decision rights, and measurable outcomes also supports sustained adoption and continuous improvement across the lifecycle.