The End of Moore’s Law? The Future of Computing Hardware

Moore’s Law: past, present, and prognosis

For decades, Moore’s Law served as a simple predictor: transistor density would double roughly every two years, delivering more powerful and cheaper chips. This expectation enabled long-range planning across hardware, software, and the broader tech ecosystem. The physics were not trivial—lithography, gate control, variability, and thermal limits pushed engineers to innovate continually. Yet as device dimensions shrink toward single-digit nanometers, leakage currents grow and production economics become more challenging. These real-world constraints mean the neat exponential narrative has gradually given way to a more nuanced reality: improvements arrive not only from smaller transistors but from smarter designs, better memory hierarchies, and specialized accelerators.
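To make the compounding concrete, the short Python sketch below applies the idealized rule that density doubles every two years. The function name and the normalized starting density of 1.0 are placeholders chosen for illustration, not figures for any real process node.

```python
def projected_density(initial_density: float, years: float,
                      doubling_period: float = 2.0) -> float:
    """Idealized Moore's Law projection: density doubles every `doubling_period` years."""
    return initial_density * 2 ** (years / doubling_period)


# Normalized starting density of 1.0 (a placeholder, not a real node).
for years in (2, 10, 20):
    print(years, projected_density(1.0, years))
```

Twenty years of uninterrupted doubling implies a roughly 1,000-fold increase, which shows how much long-range planning rested on the exponential holding.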

In practice, the industry now pursues performance through parallelism, memory bandwidth, and architectural specialization rather than simply pushing clock speeds higher. This shift aligns with observed workloads in AI, data analytics, and simulations, where throughput, energy efficiency, and data movement dominate the cost of computation. A chip-level metric such as FLOPS per watt at a given node still matters, but it no longer tells the whole story about what makes a system fast or cost-effective at scale.
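One way to see why parallelism is a useful but limited lever is Amdahl's law. The article does not name this model; it is brought in here as a standard way to reason about the trade-off. The sketch assumes the parallelizable fraction of a workload is known, and the 95% figure and worker counts are illustrative only.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Upper bound on speedup when only part of a workload runs in parallel."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)


# Illustrative workload: 95% of the work can be spread across workers.
for workers in (1, 8, 64, 1024):
    print(workers, round(amdahl_speedup(0.95, workers), 2))
```

With 5% of the work left serial, the speedup can never reach 20x no matter how many workers are added. This is one reason software structure and data movement matter as much as raw core counts.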

Why the pace is slowing and what it means for strategy

From a technical standpoint, scaling loses momentum as the industry approaches fundamental limits on transistor size, leakage, and variability. Advanced lithography, defect control, and yield management require escalating capital and process sophistication, with diminishing returns at each node. The result is that single-thread performance on mainstream CPUs now improves far more slowly than it once did, while the industry looks to alternate paths to continue delivering value. In many respects, the question has shifted from “Can we make smaller transistors?” to “What other levers can we pull to improve system performance without prohibitive cost?”

For businesses and suppliers, this means rethinking refresh cycles, ownership of technology risk, and how to monetize performance gains. A growing share of investment now goes into accelerators, high-bandwidth memory, faster interconnects, and software optimization that leverages parallelism and data locality. The shift also raises the importance of ecosystem collaboration among silicon vendors, memory makers, software developers, and cloud service providers, so that integrated solutions meet real-world needs rather than idealized metrics.

Implications for hardware design and system architecture

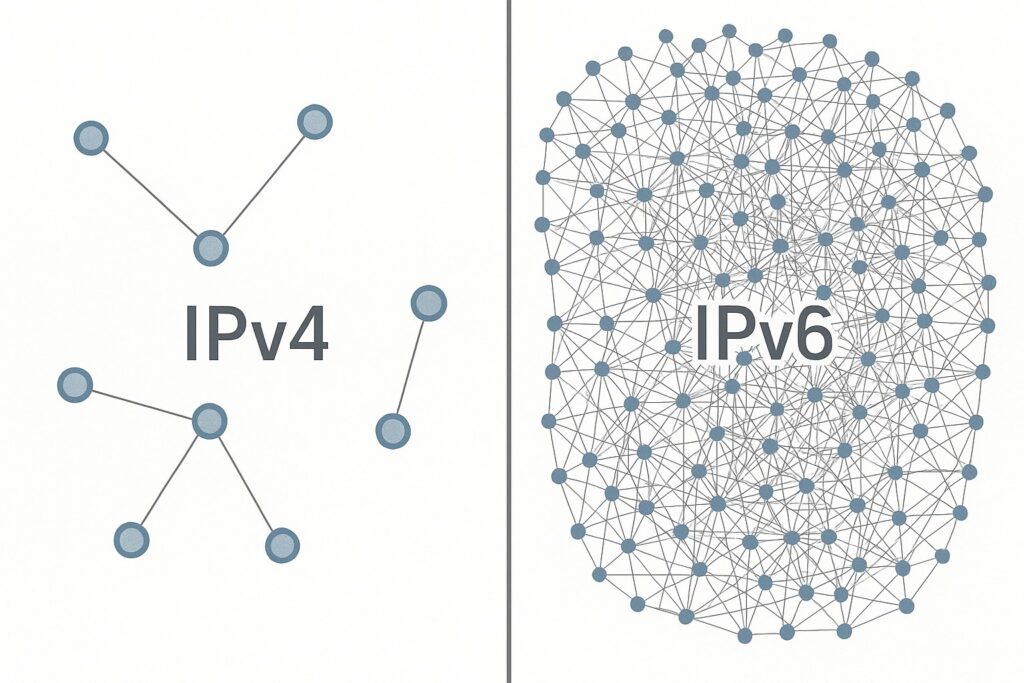

With diminishing returns from raw transistor scaling, system architects are increasingly focusing on data flow and energy efficiency. Memory bandwidth and latency become critical bottlenecks, so designs favor memory-centric architectures, wide interconnects, and near-memory computing paradigms that reduce data movement. This invites a mix of CPUs, GPUs, and domain-specific accelerators, orchestrated through smarter scheduling and compiler technologies that map workloads to the best performing unit while preserving energy budgets.
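The bandwidth bottleneck described above is often summarized with a roofline-style estimate: attainable throughput is the smaller of peak compute and memory bandwidth multiplied by arithmetic intensity (operations per byte moved). The sketch below assumes that simple model. The peak and bandwidth figures are hypothetical and do not describe any particular chip.

```python
def attainable_gflops(peak_gflops: float, bandwidth_gb_s: float,
                      flops_per_byte: float) -> float:
    """Roofline estimate: throughput is capped by compute or by memory traffic."""
    memory_bound_limit = bandwidth_gb_s * flops_per_byte
    return min(peak_gflops, memory_bound_limit)


# Hypothetical accelerator: 10,000 GFLOP/s peak, 1,000 GB/s memory bandwidth.
PEAK, BANDWIDTH = 10_000.0, 1_000.0
for intensity in (0.25, 2.0, 10.0, 50.0):
    limit = attainable_gflops(PEAK, BANDWIDTH, intensity)
    regime = "memory-bound" if limit < PEAK else "compute-bound"
    print(f"{intensity:>5} FLOP/byte -> {limit:>8.1f} GFLOP/s ({regime})")
```

Under these assumed figures, a kernel needs at least 10 operations per byte before additional compute units help. Below that point, only more bandwidth or less data movement raises throughput.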

Software toolchains, compilers, and runtimes play a central role in translating hardware capabilities into real-world performance. Performance now hinges on intelligent task graph optimization, memory layout tuning, and hardware-aware scheduling that can exploit heterogeneity without sacrificing portability. The outcome is a software-defined approach to performance, where future gains come as much from how workloads are organized and deployed as from the silicon itself.
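As a toy illustration of hardware-aware scheduling, the sketch below greedily maps each task to the fastest device whose estimated energy still fits a shared budget. Everything in it is hypothetical: the `Estimate` type, the `assign_tasks` function, the device names, and the cost table, which in practice would come from profiling. Real compilers and runtimes use far richer cost models.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Estimate:
    seconds: float  # predicted runtime on this device
    joules: float   # predicted energy on this device


def assign_tasks(costs: dict[str, dict[str, Estimate]],
                 energy_budget: float) -> dict[str, str]:
    """Greedy mapping: fastest device per task that still fits the energy budget."""
    remaining = energy_budget
    placement: dict[str, str] = {}
    for task, per_device in costs.items():
        affordable = {d: e for d, e in per_device.items() if e.joules <= remaining}
        if not affordable:
            raise RuntimeError(f"no device fits the remaining budget for {task!r}")
        device = min(affordable, key=lambda d: affordable[d].seconds)
        placement[task] = device
        remaining -= affordable[device].joules
    return placement


# Hypothetical cost table; real values would come from profiling.
costs = {
    "matmul": {"cpu": Estimate(8.0, 40.0), "gpu": Estimate(1.0, 60.0)},
    "parse": {"cpu": Estimate(2.0, 10.0), "gpu": Estimate(3.0, 45.0)},
    "conv": {"cpu": Estimate(9.0, 45.0), "gpu": Estimate(1.5, 70.0)},
}
print(assign_tasks(costs, energy_budget=120.0))
```

With a 120-joule budget, the last task falls back to the CPU even though the GPU would be faster, because the GPU's energy cost no longer fits. A greedy pass like this is not optimal, but it shows how an energy budget can change placement decisions.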

Emerging materials and approaches beyond silicon

To push beyond the traditional silicon envelope, researchers are pursuing a portfolio of materials and device concepts designed to deliver higher efficiency, reduced leakage, and new modes of computing. The path is incremental: early laboratory demonstrations lead to pilot lines and, eventually, to broader production as confidence and supplier ecosystems mature. Each option offers different trade-offs in manufacturing familiarity, reliability, and performance characteristics.

- Wide-bandgap semiconductors such as silicon carbide (SiC) and gallium nitride (GaN) suit power electronics and high-frequency switching, where they yield devices that run cooler and more efficiently and enable higher power density in power-constrained systems.

- Two-dimensional materials and heterostructures, including graphene and transition metal dichalcogenides, promise ultra-thin channels with high mobility and novel optoelectronic interfaces that can reshape interconnects or on-chip sensors.

- Heterogeneous integration and three-dimensional stacking place logic, memory, and specialized accelerators in close physical proximity, shrinking data movement distances and unlocking new memory architectures.

These approaches collectively aim to improve energy efficiency, thermal management, and memory bandwidth, while offering new capabilities for machine learning, simulation, and real-time analytics. The business case depends on how quickly the ecosystem can deliver reliable, scalable manufacturing and how well software can exploit new hardware features without imposing prohibitive porting costs.

Heterogeneous integration and 3D stacking

Heterogeneous integration is moving beyond packages that hold one or two chips toward multi-die stacks and system-in-package designs. By combining logic, memory, and specialized accelerators in a single package, designers can substantially increase bandwidth, reduce latency, and improve energy efficiency for memory-bound workloads. But this path also introduces new design challenges, including thermal management, reliability, and test coverage across disparate processes and materials.

- Tighter integration of compute and memory to minimize data movement and energy use

- Advanced packaging techniques and thermal management to handle stacked dies without performance loss

- Open, interoperable interfaces that enable software portability and ecosystem collaboration

Realizing these gains requires coordinated efforts across device manufacturers, packaging specialists, and software developers. The payoff can be substantial for workloads such as large-scale AI inference, real-time data analytics, and high-performance simulation, where current architectures encounter significant data-transfer bottlenecks.

Quantum computing and co-design with classical systems

Quantum computing offers a fundamentally different paradigm for certain problem classes, with the potential to accelerate simulation, optimization, materials design, and cryptanalysis. However, practical quantum advantage will not come from a naive replacement of classical processors; it will come from thoughtful co-design between qubit hardware, cryogenic control electronics, and the software stack, including compilers, error mitigation, and fault-tolerant architectures. In other words, progress depends on integrating quantum resources with classical systems in a way that delivers measurable value for targeted workloads.

Executives and researchers often ask why quantum computing matters for the broader computing landscape. The pragmatic answer is that quantum resources, when combined with classical systems, may deliver large speedups or efficiency improvements on select problem classes. This requires careful software tooling, standardized interfaces, and hybrid algorithms that can run on near-term noisy devices while laying the groundwork for fault-tolerant machines in the future. The field is still maturing, but the trajectory suggests incremental capability growth that unlocks new science and engineering use cases.

- Developing scalable qubit platforms with longer coherence times and lower error rates

- Realizing practical error correction and fault-tolerance strategies for larger systems

- Building software ecosystems, compilers, and control infrastructures that make quantum resources accessible and useful
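The hybrid algorithms mentioned above typically pair a classical optimizer with a parameterized quantum circuit. The sketch below imitates that loop for a single qubit, replacing the quantum device with its exact analytic expectation value, so it is a classical simulation for illustration only. The function names are hypothetical. The gradient uses the parameter-shift rule, a technique commonly applied to rotation gates because it needs only extra circuit evaluations.

```python
import math


def expectation_z(theta: float) -> float:
    """Stand-in for a quantum device: <Z> after applying RY(theta) to |0>."""
    return math.cos(theta)


def parameter_shift_gradient(theta: float) -> float:
    """Gradient from two shifted circuit evaluations, as a real device would need."""
    shift = math.pi / 2
    return 0.5 * (expectation_z(theta + shift) - expectation_z(theta - shift))


def minimize_energy(theta: float = 0.3, learning_rate: float = 0.4,
                    steps: int = 100) -> float:
    """Classical outer loop: gradient descent on the circuit parameter."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta


theta = minimize_energy()
print(f"theta = {theta:.4f}, <Z> = {expectation_z(theta):.4f}")
```

The loop drives the parameter toward θ = π, where the expectation value reaches its minimum of −1. On real hardware each call to `expectation_z` would be a noisy estimate built from many repeated measurements, which is where error mitigation and control infrastructure come in.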

Economic and ecosystem considerations

As hardware moves beyond legacy silicon scaling, the economics of design, fabrication, and deployment lean heavily on system-level value rather than chip-level metrics alone. Capital expenditure for cutting-edge nodes remains high, while actual performance gains hinge on a combination of accelerators, memory bandwidth, software optimization, and the efficiency of the data path. This reality incentivizes co-investment across the supply chain, robust standards, and shared platforms that can deliver consistent improvements across multiple workloads.

Beyond pure hardware costs, the broader ecosystem—regulatory frameworks, open-source communities, education, and workforce availability—plays a decisive role in adoption. Standards for packaging, interconnects, and software interfaces reduce fragmentation and accelerate deployment. Companies that invest in ecosystem health, risk management, and transparent performance measurement stand to benefit from faster time-to-value and broader developer support.

The path forward for industry stakeholders

For chipmakers, the near-term strategy combines co-design with customers, platform-level accelerators, and intelligent memory systems to squeeze value from heterogeneous architectures. This includes investing in reliability, yield, and scalable manufacturing capabilities for non-traditional materials and packaging approaches, as well as strengthening software toolchains that help customers extract performance without costly retooling.

For customers and end users, the priority is to align hardware investments with workloads that gain the most from heterogeneity, while preserving software portability, security, and total cost of ownership. Cloud providers and enterprise data centers can play a pivotal role by offering modular, composable infrastructure, enabling rapid experimentation with new memory hierarchies or accelerators, and reducing the risk of long refresh cycles.

FAQ

What is Moore’s Law, and why is it slowing?

Moore’s Law originated as an observation about transistor density doubling roughly every two years, which historically translated into faster, cheaper computing. In practice, approaching the physical and economic limits of silicon introduces challenges in lithography, variability, cooling, and yield, causing the pace of scaling to slow. The industry now recognizes that performance gains come from architectural innovation, memory improvements, and specialized accelerators as much as from smaller transistors.

What are the main technologies that could sustain performance gains beyond silicon?

Beyond silicon, wide-bandgap materials (for power efficiency), 2D materials and heterostructures (for high-mobility channels and novel interfaces), and three-dimensional stacking (to reduce data movement) are key pillars. Quantum computing represents a complementary paradigm that could accelerate certain workloads when integrated with classical systems. Each path offers opportunities and challenges related to manufacturing, reliability, and software ecosystem maturity.

How will industry adoption of new materials affect costs and supply chains?

Adoption will occur in stages, with pilot programs, standards development, and gradual scale-up. New materials and packaging techniques require new processes, supply chain coordination, and validation across environments. While this introduces near-term complexity and cost, it can yield long-term benefits in performance and energy efficiency if the ecosystem stabilizes around common interfaces and reliability benchmarks.

What is the role of quantum computing today vs. the future?

Today, quantum devices are primarily experimental and serve as testbeds for algorithms and control techniques. In the near term, hybrid quantum-classical workflows can demonstrate niche advantages and guide hardware/product roadmaps. Over the longer horizon, fault-tolerant quantum systems could address specific, hard computational problems, provided there is a mature software stack and affordable, scalable hardware.