The Future of NLP: Trends in Natural Language Processing

Emerging Trends Shaping NLP’s Trajectory

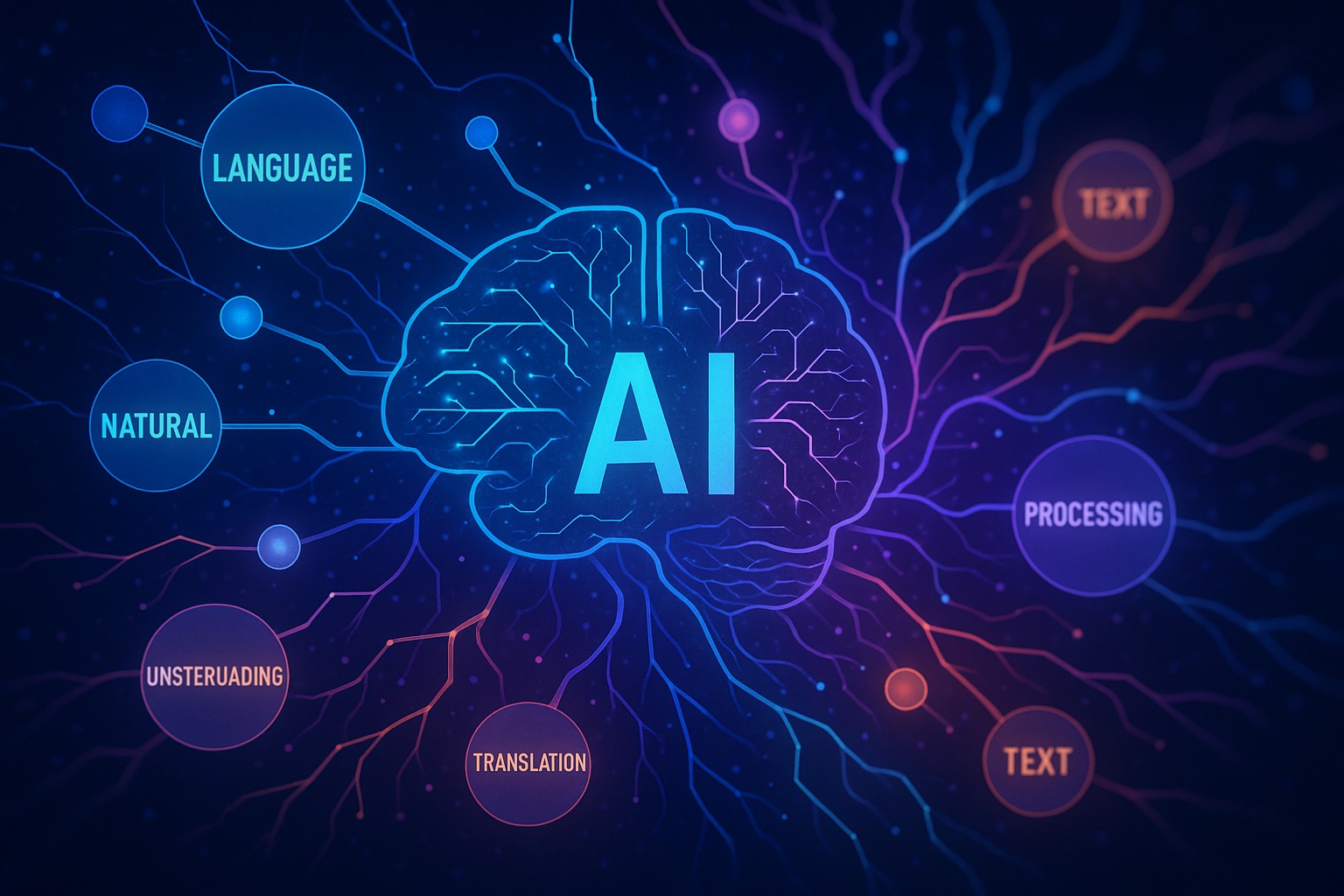

The landscape of natural language processing is undergoing a fundamental shift driven by the rise of large pre-trained models, increasingly capable multimodal systems, and a renewed focus on responsible AI. Organizations are moving from single-task models toward flexible foundation models that can be adapted to a broad range of use cases with relatively little task-specific data. This transition is changing how businesses think about product development, customer experience, and operational efficiency. In parallel, the demand for real-time, context-aware language understanding across domains, from enterprise software to consumer devices, requires models that operate with greater efficiency, stronger safety controls, and better alignment with human values. The resulting wave of innovation affects competitive advantage, cost structure, and go-to-market strategy, especially for firms that must keep pace with regulatory and ethical expectations while extracting measurable business value.

As the field evolves, several interlocking forces are shaping the future of NLP: scalable data ecosystems that balance privacy with performance, hardware advances that reduce latency and energy use, and development models that blend open research with controlled deployment. In this environment, enterprises must evaluate not only what a model can do today, but also how its capabilities will mature, how quickly it can be updated, and how governance, security, and explainability will evolve alongside capability. The result is a more dynamic, architecture-driven approach to NLP, where decisions hinge on impact, risk, and alignment with strategic objectives. This shift raises the importance of a roadmap that connects data strategy, platform decisions, and organizational readiness.

- Foundation models and fine-tuning: organizations increasingly rely on large-scale, general-purpose models that can be specialized efficiently for specific domains and tasks.

- Multimodal NLP: integration of text with images, audio, and video to enable richer understanding and capabilities like automatic content moderation, search, and accessibility tools.

- On-device and privacy-preserving inference: techniques that reduce data exposure and latency by moving processing closer to the user and employing privacy-enhancing technologies.

- Governance, safety, and alignment: robust frameworks for monitoring, auditing, and controlling model behavior to mitigate risk and uphold ethical standards.

- Data strategies and synthetic data: synthetic data generation and data augmentation become integral to scaling rare-domain tasks while preserving quality and privacy.

For decision-makers, the practical implications of these trends include shifting investments toward platform-level capabilities, establishing cross-functional governance councils, and prioritizing modular architectures that can adapt to evolving requirements. The future of natural language processing thus hinges not only on breakthroughs in accuracy or speed but also on a resilient mix of responsible deployment practices, scalable data governance, and disciplined risk management that can sustain business value over time.

Advancements in Core NLP Technologies

Advances in core NLP technologies are redefining what is possible across industries. The focus has moved from chasing marginal gains in isolated benchmarks to delivering end-to-end systems that can reason, retrieve, summarize, translate, and interact in natural ways. This shift is powered by improved training paradigms, better alignment techniques, and increasingly capable architectures that handle longer contexts, multi-turn interactions, and cross-lingual capabilities with greater reliability. Enterprises benefit from models that can internalize domain-specific knowledge, follow complex instructions, and adapt to user intent with fewer examples, enabling faster time-to-value and more personalized experiences for customers and employees alike.

Improvements in efficiency and scalability are equally important. Techniques such as model pruning, quantization, and specialized hardware acceleration are pushing down latency and cost, enabling use in latency-sensitive applications and on-device scenarios, while retrieval-augmented generation lets a model consult external knowledge at inference time instead of relying only on what it absorbed during training. At the same time, researchers are refining methods for robust evaluation, debiasing, and safety, which help organizations manage risk as they scale NLP across workflows, products, and customer touchpoints. The convergence of these capabilities (enhanced reasoning, better alignment, and practical efficiency) creates a richer set of tools for building intelligent conversational agents, content moderation systems, enterprise search, and multilingual support platforms that can operate at enterprise scale.
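To make the quantization idea concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. It is an illustration under simplifying assumptions, not a description of how any particular framework does it: the function names `quantize_int8` and `dequantize` and the sample weights are hypothetical, and production systems typically add per-channel scales and calibration data.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # A scale of 1.0 is an arbitrary fallback for an all-zero or empty tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.82, -1.27, 0.003, 0.45, -0.9]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The rounding error per weight is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight now needs one byte instead of four (for 32-bit floats), at the price of a bounded rounding error. Very small weights such as `0.003` collapse to zero, which is one reason quantized models are usually re-evaluated before deployment.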

- Retrieval-augmented generation (RAG) and open-domain reasoning, which combine strong language models with external knowledge sources to improve accuracy and factuality.

- Efficient architectures and optimization, including pruning, quantization, and distillation, to reduce model size, latency, and energy consumption without sacrificing essential performance.

- Multimodal alignment and vision-language modeling, enabling systems to understand and generate content that integrates text with images and other media.

- Instruction tuning and reinforcement learning from human feedback (RLHF), which improve models’ ability to follow complex user instructions and align with user preferences.

- Evaluation frameworks and benchmarks that better capture real-world usage, performance under distribution shift, and robustness to adversarial inputs.
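The retrieval-augmented generation pattern from the list above can be sketched in a few lines. This is a toy version under stated assumptions: retrieval uses bag-of-words cosine similarity rather than learned embeddings, the documents and prompt template are invented for illustration, and the final call to a language model is left out. The helper names (`tokenize`, `cosine`, `retrieve`, `build_prompt`) are hypothetical.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, passages: List[str]) -> str:
    """Place the retrieved passages ahead of the question."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical knowledge base and query.
documents = [
    "Refunds are processed within 14 days of the return request.",
    "Our support desk is open Monday to Friday.",
    "Quantization reduces model size by storing weights in fewer bits.",
]
query = "How long do refunds take to be processed?"
top_passages = retrieve(query, documents, k=1)
prompt = build_prompt(query, top_passages)
print(prompt)
```

The prompt would then be passed to a language model. Grounding the answer in retrieved text is what the pattern relies on to improve factuality, and it means the knowledge base can be updated without retraining the model.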

As confidence in these technologies grows, organizations should think structurally about how to harmonize model development with product strategy. This includes defining clear success criteria, establishing data governance and validation pipelines, and building cross-functional teams that span data science, engineering, product, legal, and user experience. The practical outcomes are not only more capable NLP systems but also a reproducible path to responsible innovation that emphasizes reliability, safety, and demonstrable business impact.

Industry Adoption and Trust in NLP Systems

Across sectors, industry adoption of NLP is moving from pilot projects to enterprise-wide deployments that touch core processes such as customer support, content generation, compliance, and knowledge management. The potential for NLP to reduce manual effort, accelerate decision-making, and unlock new revenue streams is increasingly clear. Yet widespread adoption brings new considerations around risk, transparency, and governance. Enterprises must balance the benefits of automation with the need to maintain control over data, protect sensitive information, and ensure that system outputs are explainable and compliant with industry regulations. This balance is central to building trust in NLP systems among customers, employees, and regulators alike.

To translate capability into sustainable value, organizations are embracing disciplined adoption patterns. These include structured governance, continuous monitoring, and clear performance metrics tied to business outcomes. Vendors and internal teams are aligning on data provenance, model lineage, and incident response protocols to minimize risk and support rapid remediation when issues arise. As models become embedded in mission-critical workflows, practical considerations such as reliability under changing data distributions, resistance to prompt-based manipulation, and the ability to audit decisions become differentiators between successful deployments and expensive missteps. The ultimate goal is a repeatable playbook for NLP adoption that scales safely while delivering measurable ROI.

- Clear governance and explainability: establish accountability for model behavior, interpret outputs, and document decision paths for audits and compliance.

- Data provenance and consent: track data sources, usage rights, and data-sharing agreements to satisfy privacy and regulatory requirements.

- Model monitoring and incident response: implement continuous monitoring for drift, anomalies, and errors, with predefined remediation workflows.

- Vendor risk management: assess supplier capabilities, security postures, and contractual protections to manage third-party dependencies.

- Compliance with privacy laws and industry standards: align NLP deployments with frameworks such as GDPR, HIPAA, and sector-specific regulations to reduce risk.
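Continuous monitoring for drift, as mentioned in the list above, can take many forms. One common choice, which this article does not itself prescribe, is the population stability index (PSI) computed over a model's output scores. The sketch below assumes scores in the range 0 to 1, fixed bin edges, and an alert threshold of 0.25, which is a frequently quoted rule of thumb rather than a standard. The names `bin_shares` and `psi` and the sample data are hypothetical.

```python
import math
from typing import List, Sequence


def bin_shares(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Fraction of values per bin; assumes a non-empty input with values inside
    [edges[0], edges[-1]]. The last bin also takes the upper edge."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            if v >= edges[i] and (v < edges[i + 1] or is_last):
                counts[i] += 1
                break
    return [c / len(values) for c in counts]


def psi(baseline: Sequence[float], current: Sequence[float],
        edges: Sequence[float], floor: float = 1e-6) -> float:
    """Population stability index between two samples of scores."""
    total = 0.0
    for b, c in zip(bin_shares(baseline, edges), bin_shares(current, edges)):
        # Floor empty bins so the logarithm stays defined.
        b, c = max(b, floor), max(c, floor)
        total += (c - b) * math.log(c / b)
    return total


# Hypothetical confidence scores at launch and in a later production window.
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline = [0.1, 0.2, 0.35, 0.4, 0.55, 0.6, 0.7, 0.8, 0.9, 0.95]
current = [0.6, 0.7, 0.8, 0.85, 0.9, 0.9, 0.95, 0.97, 0.99, 0.65]

ALERT_THRESHOLD = 0.25  # assumed rule of thumb; tune per deployment
drift = psi(baseline, current, edges)
if drift > ALERT_THRESHOLD:
    print(f"PSI {drift:.2f} exceeds threshold; trigger the remediation workflow")
```

A check like this would typically run on a schedule and feed the predefined remediation workflow, so that a shift in inputs or outputs is noticed before it shows up as a business problem.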

The practical takeaway for organizations is to embed NLP initiatives within a governance-enabled ecosystem that supports ongoing learning, compliance, and accountability. When the business context is paired with robust control mechanisms, NLP can deliver consistent performance, reduce risk, and drive sustained competitive advantage across industries.

Data, Privacy, and Governance for NLP

The data foundation of NLP systems increasingly determines both capability and risk. High-quality, representative data remains essential for training and fine-tuning, but growing concerns around privacy, consent, and data ownership require principled data strategies. Enterprises are leveraging a combination of curated internal data, carefully sourced external data, and synthetic data generation to expand capabilities without exposing sensitive information. Privacy-preserving techniques, such as differential privacy, federated learning, and on-device processing, are gaining traction as ways to protect end-user information while maintaining model usefulness. Data governance programs must address data lineage, access controls, retention policies, and auditability to comply with evolving regulatory expectations and consumer trust.
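As one illustration of the privacy-preserving techniques named above, the following sketch applies the Laplace mechanism from differential privacy to a simple counting query. It is a minimal example under stated assumptions: the records, the `private_count` and `laplace_noise` helpers, and the chosen epsilon values are all hypothetical, and a real deployment would also need to track the privacy budget across queries.

```python
import random
from typing import Callable, Dict, List


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records: List[Dict[str, str]],
                  predicate: Callable[[Dict[str, str]], bool],
                  epsilon: float,
                  rng: random.Random) -> float:
    """Return a noisy count; smaller epsilon means more noise and more privacy."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for r in records if predicate(r))
    # Adding or removing one record changes a count by at most 1.
    sensitivity = 1.0
    return true_count + laplace_noise(sensitivity / epsilon, rng)


# Hypothetical support tickets tagged by language.
records = [
    {"language": "de"}, {"language": "en"}, {"language": "de"},
    {"language": "fr"}, {"language": "de"},
]
rng = random.Random(0)  # fixed seed only to make the example repeatable
noisy = private_count(records, lambda r: r["language"] == "de", epsilon=1.0, rng=rng)
print(f"Noisy count of German tickets: {noisy:.2f}")
```

The analyst sees a count that is close to the truth on average, while the presence or absence of any single record is masked by the noise. Choosing epsilon is a policy decision as much as a technical one, which is why it belongs in the governance program described here.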

Beyond privacy, governance encompasses model risk management, quality assurance, and lifecycle management. Organizations should implement rigorous evaluation protocols that test models against real-world distributions, measure robustness to prompt variation, and monitor for bias and safety concerns. Clear policies about model release, monitoring, and decommissioning help ensure that NLP systems operate within defined risk envelopes. As data ecosystems grow in complexity, governance structures must be adaptable, scale with business needs, and integrate with broader enterprise risk management and compliance programs. A disciplined approach to data, privacy, and governance is foundational to sustainable performance and trust in NLP initiatives.

Economic and Workforce Implications

As NLP capabilities expand, the economic and workforce implications become more pronounced. Automation of knowledge work can lead to productivity gains, but it also reshapes job roles, skill requirements, and the pipeline of talent across organizations. The most successful firms will combine automation with upskilling and change management to ensure that employees can work effectively with advanced NLP systems while maintaining areas of strategic human judgment, creativity, and governance. The business case for NLP increasingly rests on improved customer experiences, faster product cycles, and the ability to scale multilingual services without proportional cost increases. This shifts investment toward platforms, data management, and developer enablement, rather than solely toward model procurement.

From a workforce perspective, demand is shifting toward roles that blend data science, software engineering, and domain expertise. Skills in model evaluation, prompt design, system integration, privacy-by-design, and regulatory compliance become critical complements to traditional NLP competencies. Organizations should invest in training programs, cross-functional teams, and internal communities of practice to accelerate adoption and ensure that knowledge stays within the organization. Furthermore, a strategic emphasis on experimentation, measurement, and governance will help companies realize the full return on NLP investments while mitigating risks associated with scale and complexity.

The Road Ahead: Strategic Priorities for Organizations

Looking forward, the strategic priorities for organizations seeking to capitalize on the future of NLP center on platform coherence, responsible scaling, and business-driven experimentation. A well-defined data strategy that aligns with product roadmaps, customer needs, and regulatory constraints is essential. Companies should invest in modular architectures that enable rapid iteration, versioning, and safe exposure of NLP capabilities across products and channels. Building cross-functional governance structures that bridge product, engineering, legal, and security helps ensure that NLP deployments deliver value without compromising safety or compliance. In parallel, organizations should foster a culture of responsible innovation, with clear metrics, risk controls, and continuous learning to adapt to evolving capabilities and market conditions.

Finally, leadership alignment around a clear vision for NLP outcomes is critical. This includes articulating how NLP will differentiate products, improve efficiency, and support strategic objectives. Organizations that couple technical excellence with governance discipline, customer-centric design, and disciplined experimentation will be best positioned to realize durable competitive advantage as the future of NLP continues to unfold. The path is not merely about building smarter models, but about building trusted, scalable systems that integrate seamlessly with business processes and strategic priorities.

FAQ

What is the most significant trend driving the future of NLP?

The most significant trend is the shift toward foundation-model-based systems that can be adapted to a wide range of domains with minimal task-specific data. This approach unlocks rapid experimentation, cross-domain capabilities, and more personalized experiences, while emphasizing governance, safety, and explainability to manage risk at scale.

What are the main risks when deploying NLP at scale?

Key risks include hallucination or factual inaccuracies, data privacy and leakage, bias and fairness concerns, and operational failures due to distribution shifts. Effective risk management requires rigorous evaluation, continuous monitoring, strong data governance, and clear incident response protocols aligned with regulatory requirements.

How should organizations govern NLP deployments to balance value and risk?

Organizations should establish cross-functional governance councils, implement end-to-end data provenance and model lineage practices, and maintain robust monitoring and auditing capabilities. Clear policies on data usage, privacy, model updates, and incident response help balance business value with safety and compliance requirements.

What skills will be in demand for NLP in the coming years?

In-demand skills include prompt engineering, model evaluation and bias auditing, data governance, privacy-by-design, system integration, and regulatory compliance. Domain expertise remains crucial to translate NLP capabilities into tangible business outcomes, while software engineering foundations support scalable, reliable deployments.