AI Audio Revolution: Voice Cloning and Music Generation

Executive overview: The AI Audio Revolution shaping media, marketing, and product strategy

The convergence of voice cloning and AI-generated music is redefining how organizations create, curate, and distribute audio content. Enterprises now have the ability to reproduce recognizable voices with consent, craft tailored soundtracks for campaigns, and automate routine audio production tasks at scale. This shift creates opportunities to improve customer engagement, accelerate product launches, and reduce dependency on traditional voice talent and studio workflows. In parallel, AI-generated audio enables rapid localization, accessibility enhancements, and dynamic content that can adapt to context in real time. For executives, the promise lies in a more agile audio infrastructure that aligns with data-driven marketing, product experience, and compliance objectives.

Yet the opportunity comes with layers of complexity. Quality concerns—such as natural prosody, emotional nuance, and dialect accuracy—remain central to brand fidelity. Data governance, licensing, and privacy are not ancillary issues; they determine who may speak, what content may be produced, and how listeners perceive authenticity. The landscape now demands a disciplined approach to governance, risk assessment, and partner selection that balances speed and innovation with accountability and trust. As organizations pilot these technologies, they must define clear policies around consent, disclosure, and rights management to avoid unintended consequences that could undermine brand value or invite regulatory scrutiny.

To operationalize the AI audio advantage, leaders should view voice cloning and AI music as components of an integrated audio stack that connects content strategy, product experience, and enterprise risk management. This requires not only technical capability but also a governance blueprint that spans legal, security, and brand teams. When properly implemented, AI-generated audio becomes a strategic asset that amplifies messaging, supports multilingual and accessible experiences, and enables data-informed experimentation while preserving the integrity of the human voices that customers know and trust.

- Brand-safe voice synthesis for customer support and training materials

- Personalized audio marketing at scale

- Automated music generation for jingles, ads, and podcasts

- Voice cloning for accessibility and localization (with consent and compliance)

Technical landscape: How voice cloning and AI-generated music work

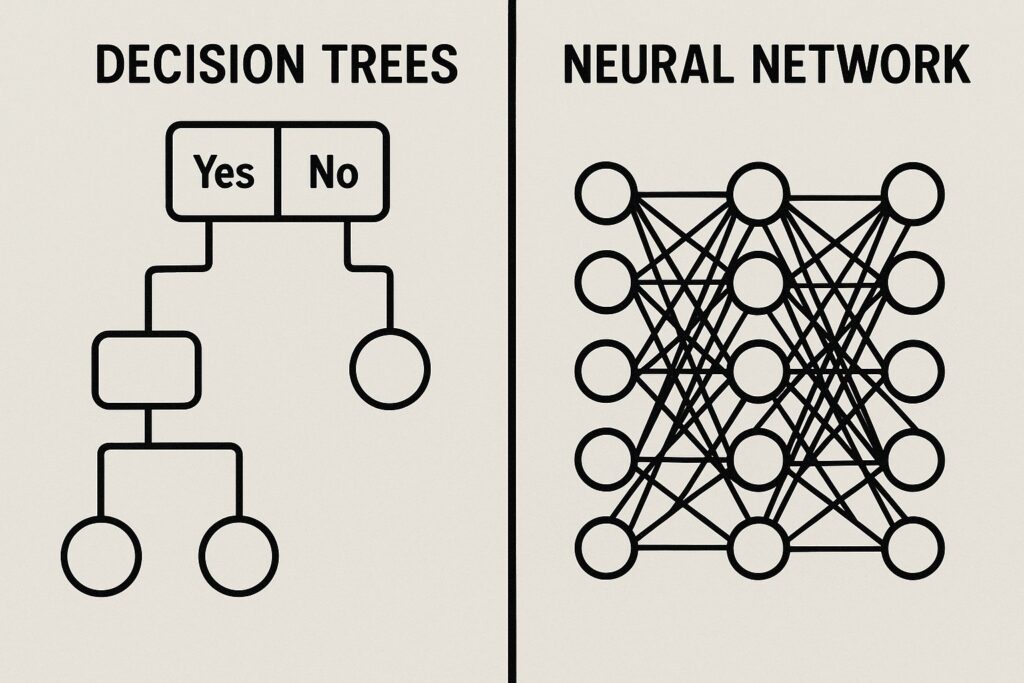

The technical backbone of modern AI audio comprises two intertwined streams: voice cloning and AI-generated music. Voice cloning typically starts with supervised training on labeled speech recordings from a target voice. At synthesis time, an acoustic model converts text or prompts into an intermediate representation such as a mel spectrogram, and a neural vocoder renders that representation as an expressive waveform. Advances in sequence-to-sequence models, neural vocoders, and diffusion-based audio generation have pushed the quality bar toward voices that sound natural across prosody, emotion, and context. For enterprise use, this often means building a controllable synthesis pipeline that can adapt to tone, speed, accent, and branding requirements while maintaining consistency with existing voice assets.

AI-generated music, including background scores, soundtracks for ads, and personalized audio experiences, increasingly relies on diffusion models and transformer architectures to compose multi-instrument tracks, harmonize melodies, and adapt to user or scene context. These systems can produce royalty-cleared, loop-able content that matches a desired mood and tempo, while metadata and licensing considerations govern how the music can be used across channels. Across both domains, practical deployment emphasizes safety checks, quality assurance, and governance dashboards that help ensure outputs meet brand guidelines, avoid misrepresentation, and comply with licensing terms and privacy laws.

From a governance standpoint, critical factors include data provenance, consent provenance, and model auditing. The provenance of training data bears on who can claim rights in the resulting voice or music outputs, while consent provenance tracks who allowed the use of specific voice assets and for what purposes. Enterprise teams also implement evaluation protocols that measure naturalness, intelligibility, expressiveness, and resistance to adversarial prompts. In addition to performance metrics, operational safeguards such as watermarking, content provenance tagging, and automated disclosure prompts are increasingly common to facilitate responsible usage and listener awareness within platforms and media ecosystems.

- Model architectures (neural TTS, diffusion-based audio generation, and vocoders)

- Data requirements and copyright considerations

- Evaluation metrics and safety checks (voice similarity, naturalness, and abuse detection)
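The voice similarity metric listed above is often approximated by comparing speaker embeddings, fixed-length vectors that a speaker-verification model extracts from audio. The following is a minimal sketch of that comparison using cosine similarity. It assumes the embeddings have already been computed elsewhere; the function names and the 0.75 threshold are illustrative assumptions, not values from any particular system.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


def passes_similarity_check(
    reference: Sequence[float],
    candidate: Sequence[float],
    threshold: float = 0.75,  # hypothetical cutoff; tune on real data
) -> bool:
    """True if the synthesized voice is close enough to the reference voice."""
    return cosine_similarity(reference, candidate) >= threshold
```

In practice the threshold would be calibrated against held-out recordings of the target speaker and of other speakers, so that the check separates the two groups reliably.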

Ethical, legal, and governance considerations

Ethical questions in AI audio center on consent, deception, and the potential erosion of trust in media. When a voice can be cloned or a track can be generated to mimic a real person, organizations must establish clear permissions, usage boundaries, and disclosure practices. This extends beyond legal compliance to brand integrity: audiences may respond differently to synthetic voices that imitate those they recognize, and misrepresentation can damage credibility even if the content technically complies with licensing terms. Proactive governance—covering who may authorize synthetic content, how outputs are labeled, and how corrections are handled—helps protect both the organization and end users from unintended consequences.

Legally, the evolving landscape involves intellectual property rights, data protection, and consumer protection considerations. Rights holders may assert ownership over the original voice data and musical patterns used to train models, while privacy regimes govern how personal voice data is collected, stored, and processed. Organizations must implement robust data governance programs that document consent, retention periods, data minimization, and the scope of permissible use. Additionally, cross-border data transfers introduce regulatory complexity, requiring careful mapping of data flows, localization requirements, and supplier due diligence to ensure compliance across jurisdictions.

Governance frameworks for AI audio typically include risk assessments, role definitions, and policy enforcement mechanisms. Responsible deployment hinges on cross-functional collaboration among legal, security, product, and brand teams to create controls such as guardrails for high-risk prompts, mandatory disclosure in sensitive contexts, and auditable records of model decisions. Transparency initiatives—like clear labeling of synthetic content and accessible explanations of the source and rights—help maintain listener trust and reduce the likelihood of misinterpretation or harmful use. In practice, this means adopting standards for data provenance, model versioning, and incident response tailored to audio-specific risks.

- Consent and rights management for voice data

- Transparency and disclosure of generated content

- Auditing and accountability frameworks

- Mitigation of deepfake risks and misrepresentation
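Consent and rights management, the first item above, can be made checkable in software by storing each grant as a structured record. The sketch below shows one possible shape for such a record and a permission check. The class name, field names, and use labels are hypothetical and would need to mirror the terms of the actual consent agreement.

```python
from dataclasses import dataclass
from datetime import date
from typing import FrozenSet


@dataclass(frozen=True)
class ConsentRecord:
    """One speaker's grant of permission to synthesize their voice."""
    speaker_id: str
    permitted_uses: FrozenSet[str]  # e.g. {"training_video", "ivr"}
    granted_on: date
    expires_on: date
    revoked: bool = False


def is_use_permitted(record: ConsentRecord, use: str, on_date: date) -> bool:
    """Allow a use only if consent is active, unexpired, and covers that use."""
    if record.revoked:
        return False
    if not (record.granted_on <= on_date <= record.expires_on):
        return False
    return use in record.permitted_uses
```

A synthesis service could call `is_use_permitted` before every generation request and refuse, with a logged reason, whenever it returns `False`.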

Business implications and governance: case studies and operational considerations

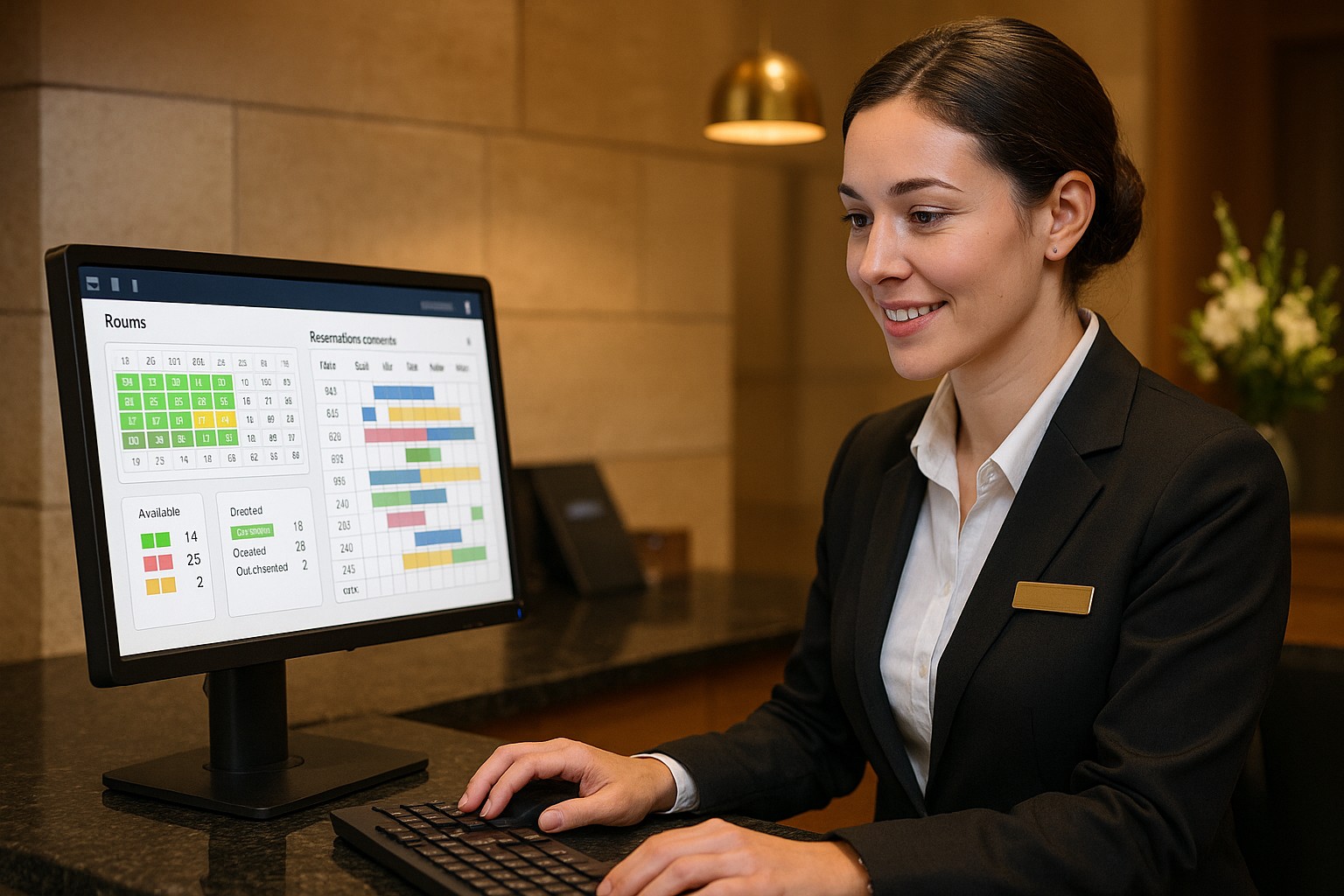

Leading organizations are piloting AI audio programs by starting with clearly defined use cases that align with customer experience, content strategy, and product workflows. A common pattern is to begin with internal communications, training materials, or accessibility features where there is a compelling ROI and lower risk, then expand to customer-facing channels with explicit consent and robust disclosure. Such a staged approach enables teams to refine voice personas, calibrate musical styles to brand guidelines, and validate performance against business metrics like engagement, response times, and content production costs. When scaled, the approach integrates with content management systems, localization pipelines, and governance tools to coordinate rights management, attribution, and version control across diverse markets and languages.

From an operations perspective, the most important success factors include careful vendor evaluation, data governance design, and the establishment of guardrails around who can authorize synthetic content. Security considerations, such as protecting training data, preventing model leakage, and auditing access to synthesis pipelines, are essential to preserving brand safety. Companies should also plan for continuous improvement cycles: monitor outputs for drift in voice quality or musical style, update licensing terms as needed, and implement feedback loops from legal and compliance teams to keep policies aligned with evolving regulations. Finally, a realistic roadmap will balance rapid experimentation with the discipline required to protect stakeholders, including customers, creators, and rights holders.
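Monitoring for drift in voice quality can start very simply. The sketch below assumes each output receives a numeric quality score (for example a listener rating on a 1 to 5 scale) and flags drift when the recent average falls too far below a baseline average. The function name and the 0.3 tolerance are illustrative assumptions.

```python
from statistics import mean
from typing import Sequence


def drift_detected(
    baseline_scores: Sequence[float],
    recent_scores: Sequence[float],
    max_drop: float = 0.3,  # hypothetical tolerance on the score scale
) -> bool:
    """Flag drift when the recent mean score drops more than max_drop below baseline."""
    if not baseline_scores or not recent_scores:
        raise ValueError("both score lists must be non-empty")
    return mean(baseline_scores) - mean(recent_scores) > max_drop
```

A comparison of means ignores variance and sample size, so a production monitor would likely add a statistical test or a minimum sample count before raising an alert.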

Implementation strategies for enterprises

Enterprises looking to implement AI-generated audio should start with an auditable framework that connects policy, process, and technology. Begin with an inventory of voice assets and the licenses attached to them, then map the data supply chain to identify where consent is required and how data will be stored, used, and ultimately retired. Next, establish a governance body that includes legal, security, brand, product, and privacy stakeholders to define acceptable use cases, thresholds for risk, and escalation paths for policy breaches. Pilot programs should emphasize measurable outcomes—such as reductions in production time, cost savings, or improvements in accessibility metrics—while maintaining strict controls over the use of any cloned voices and AI-generated music. A deliberate, risk-informed rollout helps ensure that early wins translate into scalable, responsible capabilities.

On the technical front, organizations should implement layered safeguards: access controls for synthesis tools, watermarking or fingerprinting for outputs, and robust auditing to verify provenance and licensing compliance. It is important to align vendor and platform selections with internal policies on data sovereignty and privacy, and to require contract provisions that cover data handling, model updates, and incident response. Finally, integrate synthetic audio into existing content pipelines through standardized APIs and metadata to ensure consistency with branding and regulatory requirements. With careful planning, governance, and monitoring, AI audio becomes a repeatable capability that drives innovation while preserving trust and accountability across all channels.
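One lightweight form of the provenance tagging and auditing described above is a metadata record stored alongside each generated file. The sketch below builds such a record from a SHA-256 digest of the audio bytes and later verifies that the audio still matches it. This is a sidecar tag, not an in-signal watermark, and the field names (`model_version`, `consent_id`, `synthetic`) are hypothetical.

```python
import hashlib
import json
from typing import Dict, Union

Tag = Dict[str, Union[str, bool]]


def build_provenance_tag(audio_bytes: bytes, model_version: str, consent_id: str) -> Tag:
    """Describe a synthetic audio file so it can be audited later."""
    return {
        "sha256": hashlib.sha256(audio_bytes).hexdigest(),
        "model_version": model_version,
        "consent_id": consent_id,
        "synthetic": True,  # explicit disclosure flag
    }


def verify_provenance_tag(audio_bytes: bytes, tag: Tag) -> bool:
    """True if the audio is byte-identical to what the tag was built from."""
    return hashlib.sha256(audio_bytes).hexdigest() == tag.get("sha256")


def to_sidecar_json(tag: Tag) -> str:
    """Serialize the tag deterministically for storage next to the audio file."""
    return json.dumps(tag, sort_keys=True)
```

Because the digest changes with any re-encoding or edit, this approach suits internal audit trails; surviving distribution and transcoding would call for a watermark embedded in the signal itself.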

What is the risk of voice cloning in business communications?

Risks include impersonation, deception, and reputational damage if a synthetic voice is misused to imitate a real person without clear consent. There is also a concern about quality drift, where the voice may unintentionally misrepresent tone or messaging. To mitigate this, organizations should enforce explicit consent, implement robust disclosure practices, apply watermarking and provenance tagging, and maintain human-in-the-loop review for high-stakes communications.

How should organizations govern data used to train voice models?

Sustainable governance requires detailed records of consent, licenses, and usage rights for each data source, along with retention and deletion policies. Data anonymization where feasible, strict access controls, and vendor audits help reduce risk. It is essential to document model versions and training data lineage, so outputs can be traced to their source material if questions or disputes arise.
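Training data lineage can be as simple as a mapping from each model version to the data sources it was trained on. The sketch below shows a lookup in the reverse direction, which is the question that usually arises when consent is withdrawn or a dispute occurs. The model and source identifiers are made up for illustration.

```python
from typing import Dict, List

# Hypothetical lineage table: model version -> training data source IDs.
LINEAGE: Dict[str, List[str]] = {
    "voice-v1": ["rec-001", "rec-002"],
    "voice-v2": ["rec-002", "rec-003"],
}


def models_trained_on(source_id: str, lineage: Dict[str, List[str]]) -> List[str]:
    """Return, in sorted order, every model version that used the given source."""
    return sorted(
        model for model, sources in lineage.items() if source_id in sources
    )
```

For example, `models_trained_on("rec-002", LINEAGE)` identifies both model versions as affected if the speaker behind `rec-002` revokes consent.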

What best practices can reduce misuse of AI-generated audio?

Best practices include mandatory disclosure when content is synthetic, clear labeling for synthetic voices, and user education about the presence of AI-generated content. Technical safeguards also help: prompt restrictions that block dangerous outputs, content moderation rules, and post-production review processes. Establishing an incident response plan for misuse or breaches and partnering with industry bodies on standard definitions and compliance guidelines further strengthens resilience against abuse.

What regulatory considerations exist for AI music generation?

Regulatory considerations center on licensing, ownership of generated works, and rights to use source material in training datasets. Organizations should ensure clear licensing terms with music creators or licensors, document any transformations applied during generation, and comply with data privacy regulations when training or using audio data that may include personal information. Ongoing monitoring for evolving copyright frameworks and collaboration with rights-holders can help navigate the complexities of AI-created music in commercial contexts.