AI vs ML vs Deep Learning: What’s the Difference?

Overview: AI, ML, and Deep Learning in Context

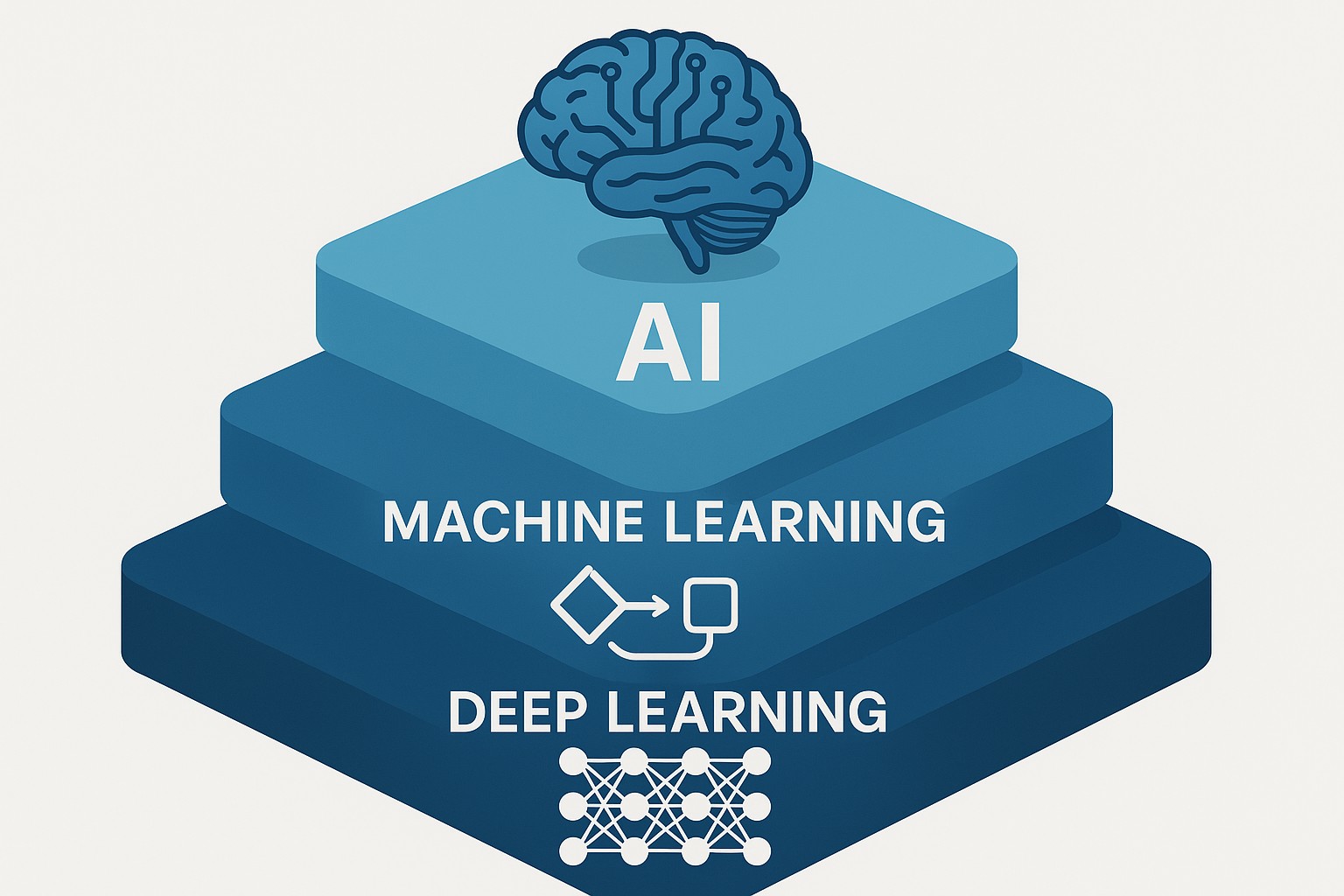

Artificial intelligence, machine learning, and deep learning are terms that get used interchangeably in business conversations, yet they represent different ideas, scopes, and capabilities. AI is the broad umbrella that covers systems designed to emulate intelligent behavior, from simple rule-based programs to sophisticated decision engines. ML is a subset of AI focused on algorithms that improve their performance through data, without being explicitly programmed for every decision. Deep learning is a further specialization—an approach within ML that uses large neural networks with many layers to learn representations of data directly from raw inputs. For enterprises, understanding these distinctions helps align technology choices with strategic goals, data availability, and measurable outcomes.

In practical terms, most modern AI initiatives for organizations start with the goal of automating tasks that require pattern recognition or decision making. Depending on the task, a business might rely on traditional rule-based techniques, on ML models, or, when the data and problem complexity justify it, on DL models. The key to any successful deployment is to connect the technology choice to a clear objective, a workable data strategy, and a plan for measuring impact in terms that matter to the business, such as accuracy, speed, cost savings, or customer satisfaction. The landscape is not binary; it is a spectrum where AI, ML, and DL often complement each other within a single program.

As organizations scale their AI programs, they frequently encounter a continuum from rule-based automation to data-driven learning. Early wins often come from supervised learning tasks that replace manual rule sets with data-driven estimations, while deeper breakthroughs require larger data ecosystems, more compute, and discipline around governance. This interplay of capability, data, and governance is what differentiates a pilot project from a mature, repeatable AI program. In the following sections, we unpack what each term means, how they relate, and what that means for a business pursuing practical, revenue-generating AI initiatives.

What is Artificial Intelligence?

Artificial intelligence refers to systems that perform tasks that would typically require human intelligence. These tasks include perception, reasoning, planning, learning, and natural language understanding. Importantly, AI encompasses both single-purpose programs and more general capabilities, and not all AI requires learning from data. Some AI applications rely on predefined rules and logic to achieve reliable outcomes, while others strive to adapt to new situations through data-driven methods.

In business contexts, AI often manifests as chatbots that understand intent, scheduling assistants that optimize time, or decision engines that weigh multiple signals to select a course of action. These systems may or may not learn over time; when they do learn from data, they are typically considered part of machine learning. The practical implication for executives is to evaluate AI initiatives by the problem being solved, the data available, and the expected value, rather than by the buzzword alone. Clear governance, explainability, and ethical considerations are essential components of any AI deployment, especially in regulated industries or customer-facing applications.

What is Machine Learning?

Machine learning is a subset of artificial intelligence that enables computers to learn patterns from data without being explicitly programmed for every outcome. Rather than relying on fixed rules, ML models identify relationships in historical data and generalize those relationships to new, unseen data. This data-driven approach makes ML well suited to tasks where the environment is dynamic and human-defined rules would be too brittle or incomplete.
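
To make the contrast between fixed rules and learned behavior concrete, here is a minimal sketch. It assumes a made-up fraud-screening task: the transaction amounts, the labels, and the analyst's cutoff of 200 are all hypothetical. The "learning" step is deliberately the simplest one possible, a single threshold chosen to minimize errors on historical examples.

```python
# Hypothetical toy history: (transaction amount, was it fraud?) pairs.
history = [(20, False), (35, False), (50, False), (80, False),
           (120, True), (150, True), (90, True), (60, False)]

def fixed_rule(amount):
    """Hand-written rule: an analyst chose 200 as the cutoff (an assumption)."""
    return amount > 200

def learn_threshold(examples):
    """Pick the cutoff that misclassifies the fewest historical examples."""
    candidates = sorted({amount for amount, _ in examples})
    best_cutoff, best_errors = None, len(examples) + 1
    for candidate in candidates:
        # An example is an error when the prediction disagrees with its label.
        errors = sum((amount >= candidate) != label for amount, label in examples)
        if errors < best_errors:
            best_cutoff, best_errors = candidate, errors
    return best_cutoff

cutoff = learn_threshold(history)

def learned_rule(amount):
    """Same form as the fixed rule, but the cutoff came from the data."""
    return amount >= cutoff

print(cutoff)             # 90
print(fixed_rule(150))    # False: the hand-written rule misses this case
print(learned_rule(150))  # True: the learned cutoff flags it
```

The learned rule is only as good as the history it was fitted to, which is why the data questions discussed throughout this article matter as much as the algorithm.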

A practical way to think about ML is as a toolkit for predictive analytics and pattern discovery. For example, ML models can forecast demand, detect anomalies in financial transactions, or segment customers based on behavior. The core idea is that the model improves as it is exposed to more data, adjusting its parameters so that its predictions align more closely with observed reality. To help organize the field, ML is commonly categorized by learning paradigms, including supervised learning, unsupervised learning, and reinforcement learning. The choice among these paradigms depends on data availability, the nature of the task, and the quality of labels or feedback.

– Supervised learning: The model learns from labeled examples, mapping inputs to known outputs. This is the most common ML approach for prediction and classification tasks.

– Unsupervised learning: The model discovers structure in unlabeled data, such as clusters or latent representations. This is powerful for exploratory data analysis, anomaly detection, and dimensionality reduction.

– Reinforcement learning: An agent learns to make a sequence of decisions by interacting with an environment and receiving feedback in the form of rewards or penalties. This paradigm is well suited to optimization, control, and sequential decision tasks.

In many business settings, the choice between supervised and unsupervised learning hinges on data availability and the desired outcome. If a reliable labeled dataset exists, supervised learning often delivers strong results that are straightforward to validate against known outcomes. If labels are scarce or expensive to obtain, unsupervised learning can reveal structure that informs downstream supervised tasks. For that reason, the supervised vs unsupervised question usually comes up early, when teams plan what data to collect and label and how they will judge whether a model is useful.
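
The difference between the first two paradigms can be sketched in a few lines. The example below assumes hypothetical monthly-spend figures for six customers. The supervised function uses a nearest-neighbor lookup on labeled examples, and the unsupervised function runs a one-dimensional k-means with two clusters on the same values without labels. Both are illustrative stand-ins, not recommendations for a production model.

```python
# Hypothetical monthly spend per customer, with and without labels.
labeled = [(10, "low"), (12, "low"), (15, "low"),
           (90, "high"), (95, "high"), (110, "high")]
unlabeled = [amount for amount, _ in labeled]

def nearest_neighbor_predict(examples, x):
    """Supervised: return the label of the closest labeled example."""
    _, label = min(examples, key=lambda pair: abs(pair[0] - x))
    return label

def two_means(values, iterations=10):
    """Unsupervised: split 1-D values into two clusters (k-means, k=2)."""
    centers = [min(values), max(values)]  # simple deterministic start
    groups = [[], []]
    for _ in range(iterations):
        groups = [[], []]
        for v in values:
            nearest = 0 if abs(v - centers[0]) <= abs(v - centers[1]) else 1
            groups[nearest].append(v)
        # Move each center to the mean of its group (keep it if the group is empty).
        centers = [sum(g) / len(g) if g else c for g, c in zip(groups, centers)]
    return centers, groups

print(nearest_neighbor_predict(labeled, 100))  # high
centers, groups = two_means(unlabeled)
print(groups)  # [[10, 12, 15], [90, 95, 110]]
```

Note that the clustering recovers two groups but cannot name them. Attaching meaning such as "low" or "high" still requires labels or domain knowledge, which is one reason unsupervised results often feed a later supervised step.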

What is Deep Learning?

Deep learning is a specialized approach within machine learning that uses artificial neural networks with multiple layers to automatically learn representations from raw data. These networks, often called deep neural networks, excel in processing complex, high-dimensional data such as images, audio, and natural language. The depth of the network allows it to learn hierarchical features—early layers may detect simple patterns, while deeper layers capture more abstract concepts. The power of deep learning comes from large datasets, advances in optimization algorithms, and the availability of substantial computational resources, particularly GPUs and specialized accelerators.
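
A tiny example can show why stacking layers matters. The sketch below is a two-layer network with hand-set weights (an assumption made for illustration; real networks learn their weights from data). It computes XOR, a function that no single linear unit can represent, by first building two simple hidden features and then combining them.

```python
def relu(z):
    """Rectified linear unit, a common activation function."""
    return max(0.0, z)

def two_layer_network(x1, x2):
    # Hidden layer: two units, each a weighted sum followed by ReLU.
    h1 = relu(1.0 * x1 + 1.0 * x2)        # grows with the number of active inputs
    h2 = relu(1.0 * x1 + 1.0 * x2 - 1.0)  # active only when both inputs are on
    # Output layer: combines the hidden features into the final answer.
    return 1.0 * h1 - 2.0 * h2

outputs = {(a, b): two_layer_network(a, b) for a in (0, 1) for b in (0, 1)}
print(outputs)
# {(0, 0): 0.0, (0, 1): 1.0, (1, 0): 1.0, (1, 1): 0.0}
```

Production networks differ mainly in scale: many more layers and units, with weights found by an optimizer rather than written by hand.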

In practice, deep learning has driven breakthroughs across perception, synthesis, and language tasks. Image recognition systems that can identify objects with high accuracy, language models that generate coherent text, and speech recognition systems that transcribe spoken words into text are all emblematic of deep learning. For businesses, the appeal lies in the potential to automate highly complex tasks that were previously out of reach for traditional ML approaches. However, deep learning also comes with trade-offs, including higher data requirements, longer training times, more complex tuning, and greater challenges in interpretability. A successful DL project requires careful data curation, scalable infrastructure, and a clear plan for deployment and maintenance.

How AI, ML, and DL Intersect and Distinguish Themselves

Artificial intelligence provides the broad objective of enabling machines to perform tasks that resemble human cognition. Machine learning narrows that focus to data-driven model-building, where the system improves through experience rather than through explicit programming for each rule. Deep learning sits inside machine learning as a particular method that uses deep neural networks to learn representations from large, high-dimensional datasets.

The distinctions matter in practice because they influence data strategy, investment, and risk management. Traditional rule-based AI can be effective when tasks are well-defined, rules are stable, and data is limited. ML expands the set of problems that organizations can solve by learning from historical data, but it remains constrained by the quality and quantity of that data and, for supervised methods, of its labels. DL pushes the frontier further when there is abundant labeled or unlabeled data, powerful hardware, and explicit goals that justify the computational investment. For many businesses, success comes from a hybrid approach that leverages rule-based AI for stable, high-reliability tasks and ML/DL for areas where patterns are too complex for manual coding.

Industry use cases and business impact

Across industries, AI, ML, and DL are driving efficiency, insights, and new value streams. Healthcare organizations use ML to predict readmissions, DL to analyze medical images, and AI-based decision support to augment clinician judgment. Financial services leverage ML for fraud detection, credit scoring, and risk management, while DL enables more natural language processing for customer service and smarter document understanding. Retailers apply ML for demand forecasting and customer segmentation, and DL powers product recommendations and image-based search. In manufacturing and logistics, AI supports predictive maintenance, quality control through image analysis, and optimized supply chains. The common thread across these examples is the ability to translate data into actionable decisions, with DL often playing a central role where the input data is complex and high-dimensional.

– Healthcare: Early warning systems, clinical decision support, imaging analytics, and personalized treatment planning.

– Financial services: Fraud detection, risk scoring, portfolio optimization, and compliance monitoring.

– Retail and consumer markets: Demand forecasting, pricing optimization, customer segmentation, and recommendation engines.

– Manufacturing and logistics: Predictive maintenance, quality inspection, and route optimization.

Practical considerations for businesses

When planning AI initiatives, organizations should align technical choices with business objectives, data availability, and governance requirements. A practical approach begins with a well-defined problem statement and measurable success criteria, followed by assessing data readiness, including data cleanliness, labeling, and provenance. Building a cross-functional team is essential, combining domain expertise, data science, engineering, and operations to ensure that models can be deployed, monitored, and maintained in production.

From a data perspective, a successful program benefits from a clear data strategy that defines what data is collected, how it is labeled or structured, and how privacy and security will be preserved. It is important to set expectations about model performance, maintenance cycles, and the potential need for retraining as business conditions evolve. Organizational readiness—such as model governance, risk controls, and change management—often determines whether a project delivers sustained value rather than a one-off improvement. In short, the path to impact is not only about algorithmic sophistication but also about data quality, collaboration, and disciplined execution.

Implementation considerations for businesses

Organizations should prepare for the operational realities of moving from pilot to production. This includes choosing the right level of model complexity for the problem, ensuring explainability where required by regulation or customer trust, and establishing monitoring that detects data drift, degradation in performance, or unusual behavior. Cost considerations, such as compute resources, data storage, and ongoing maintenance, must be weighed against expected gains in efficiency, accuracy, and revenue. Finally, a clear privacy framework and ethical guidelines help ensure the responsible use of AI technologies, reducing risk while sustaining stakeholder confidence.

– Define success in business terms: accuracy, speed, cost, or customer experience that can be measured and tracked over time.

– Invest in data governance and quality: data lineage, labeling standards, and accessible metadata reduce later frictions.

– Build a scalable, maintainable pipeline: from data ingestion to model deployment and monitoring, with automated tests and rollback plans.
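
Monitoring for data drift, mentioned above, can start with something very simple. The sketch below computes the Population Stability Index (PSI), one common way to compare the distribution of a feature at training time with its distribution in production. The sample values, the bin edges, and the 0.25 alert level (a widely quoted rule of thumb, not a standard) are assumptions for illustration.

```python
import math

def psi(baseline, current, edges):
    """Population Stability Index between two samples over shared bins."""
    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            index = sum(v >= e for e in edges)  # number of edges at or below v
            counts[index] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    b, c = shares(baseline), shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))

# Hypothetical feature values at training time and in production.
training_values = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
production_values = [6, 7, 8, 9, 10, 11, 12, 13, 14, 15]
edges = [4, 7]  # three bins: below 4, 4 to below 7, 7 and above

ALERT_LEVEL = 0.25  # assumed rule-of-thumb threshold
score = psi(training_values, production_values, edges)
print(score > ALERT_LEVEL)  # True: the production data has shifted
```

In a real pipeline a check like this would run on a schedule for each important feature, and an alert would trigger investigation or retraining rather than an automatic fix.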

Choosing the right approach for a project

The decision to use AI, ML, or DL on a given project starts with problem type, data availability, and required outcomes. Projects with structured, labeled data and clear performance metrics often benefit from supervised learning approaches, which with simpler models also tend to offer easier interpretation and faster iteration cycles. When labels are sparse or absent, unsupervised learning can reveal intrinsic structure or patterns that inform downstream tasks. For highly complex perception or language tasks, deep learning can deliver breakthroughs but requires substantial data, compute, and expertise to manage effectively.

A practical framework for evaluation includes scoping the problem, assessing data readiness, estimating total cost of ownership, and testing a baseline model before investing in more sophisticated architectures. It is also wise to consider organizational readiness for model governance, transparency, and ethics. The most successful programs balance ambition with realism, starting with a pilot that demonstrates tangible business value and then iterating toward a scalable, governed production system.
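
Testing a baseline first can be as simple as asking how well a model that always predicts the most common outcome would do. The sketch below assumes a hypothetical churn dataset and a hypothetical candidate model's predictions. The point is the comparison, not the numbers.

```python
from collections import Counter

def accuracy(predictions, labels):
    """Share of predictions that match the true labels."""
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)

def majority_baseline(train_labels, n):
    """Predict the most common training label for every one of n cases."""
    majority = Counter(train_labels).most_common(1)[0][0]
    return [majority] * n

# Hypothetical labels and candidate-model output.
train_labels = ["stay"] * 8 + ["churn"] * 2
test_labels = ["stay", "stay", "stay", "stay", "churn"]
candidate_predictions = ["stay", "stay", "stay", "stay", "stay"]

baseline_accuracy = accuracy(majority_baseline(train_labels, len(test_labels)), test_labels)
candidate_accuracy = accuracy(candidate_predictions, test_labels)

print(baseline_accuracy)   # 0.8
print(candidate_accuracy)  # 0.8
print(candidate_accuracy > baseline_accuracy)  # False: no lift over the baseline
```

An 80% accurate model sounds respectable until it is compared with a baseline that reaches the same figure with no modeling at all, which is the kind of finding that should come before further investment.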

Common pitfalls and missteps

Even well-conceived AI programs can falter if they overlook data quality, governance, or alignment with business goals. Common pitfalls include overfitting to historical data, which reduces real-world robustness; failure to monitor models after deployment, leading to stale or biased predictions; and underinvesting in data labeling, data quality, or infrastructure, which undermines model performance. Another frequent misstep is treating AI as a set-and-forget solution rather than a living system that requires ongoing retraining, validation, and governance. Finally, organizations that neglect stakeholder engagement or regulatory considerations risk misalignment with customer expectations and policy constraints.
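
The overfitting pitfall is easiest to see with a held-out test set. The sketch below uses a deliberately extreme "model" that memorizes its training examples and falls back to a default answer for anything new. The data and the default are hypothetical, and real overfitting is usually subtler, but the symptom is the same: a large gap between training and held-out performance.

```python
def fit_memorizer(examples, default="no"):
    """Overfit on purpose: store every training example verbatim."""
    table = dict(examples)
    return lambda x: table.get(x, default)

def accuracy(model, examples):
    """Share of examples for which the model returns the true label."""
    return sum(model(x) == y for x, y in examples) / len(examples)

# Hypothetical data: the true pattern is "yes" for larger values.
train = [(10, "no"), (20, "no"), (60, "yes"), (70, "yes"), (80, "yes")]
held_out = [(15, "no"), (65, "yes"), (75, "yes"), (90, "yes")]

model = fit_memorizer(train)
train_accuracy = accuracy(model, train)
held_out_accuracy = accuracy(model, held_out)

print(train_accuracy)     # 1.0
print(held_out_accuracy)  # 0.25
```

Reporting only the training figure would make this model look perfect, which is why evaluation on data the model has never seen belongs in every project plan.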

Future trends and what the C-suite should know

The AI landscape continues to evolve rapidly, with growing emphasis on responsible AI, interpretability, and scalable deployment. Advances in cloud-native AI platforms, automated machine learning (AutoML), and edge computing are making ML and DL more accessible across business units. In practice, leaders should expect increased scrutiny of data governance, privacy, and bias, along with opportunities to monetize AI capabilities through improved customer experiences, product optimization, and predictive maintenance. The organizations that succeed will combine strong data strategies with disciplined governance, a culture of experimentation, and a clear link between AI initiatives and measurable business outcomes.

FAQ

What is the simplest way to distinguish AI, ML, and DL?

Artificial intelligence is the broad umbrella term for systems that perform tasks associated with human reasoning and perception. Machine learning is a subset of AI that learns from data to improve predictions or decisions, without being programmed for every outcome. Deep learning is a specialized subset of machine learning that uses deep neural networks to learn complex representations from large amounts of data. In practice, many projects use a mix of these approaches depending on data availability, problem complexity, and performance requirements.

What are the practical differences between supervised and unsupervised learning?

Supervised learning relies on labeled data to train models to predict or classify new inputs, making evaluation straightforward because there is a known ground truth. Unsupervised learning uses unlabeled data to uncover structure, patterns, or groups within the data, which can inform downstream tasks or provide insights for feature engineering. Supervised methods tend to be easier to validate, while unsupervised methods can reveal latent structure that drives more creative or exploratory analyses.

When should a business choose ML over DL?

Because DL is itself a form of ML, the real choice is between classical (non-deep) ML and DL. Choose classical ML when there is limited labeled data, the problem is well-defined, and the required performance can be achieved with simpler models that are easier to interpret and deploy. Classical ML is typically faster to train, cheaper to operate, and easier to maintain in production. DL becomes advantageous when the data is high-dimensional and rich, such as images, audio, or text, and the problem benefits from learning hierarchical representations, provided there is sufficient data and compute resources.

What metrics matter most when evaluating models for business use?

The most important metrics depend on the objective but commonly include accuracy or precision/recall for classification tasks, mean squared error or R-squared for regression, and business-relevant outcomes such as revenue uplift, cost reduction, or time savings. Beyond accuracy, it is critical to monitor model stability, latency, fairness, and potential drift over time, as well as the interpretability of results for stakeholders and regulators.
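
A short worked example shows why accuracy alone can mislead. The sketch below computes accuracy, precision, and recall from their standard definitions for a hypothetical imbalanced dataset in which only two of ten cases are positive. The labels and predictions are made up for illustration.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, and recall for a binary classification task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    return {
        "accuracy": (tp + tn) / len(pairs),
        # Of the cases flagged positive, how many really were?
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        # Of the real positives, how many were found?
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }

# Hypothetical data: 2 positives out of 10, and the model finds only one.
y_true = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
y_pred = [1, 0, 0, 0, 0, 0, 0, 0, 0, 0]

metrics = classification_metrics(y_true, y_pred)
print(metrics)
# {'accuracy': 0.9, 'precision': 1.0, 'recall': 0.5}
```

Here the model is 90% accurate yet misses half of the positive cases. Whether that is acceptable depends on the business cost of a missed case versus a false alarm, which is why the metric should be chosen to match the objective.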

Which skills should teams develop to implement AI projects successfully?

Teams should cultivate a blend of domain expertise, data engineering, and modeling capabilities. Key skills include data preparation and labeling, statistical reasoning, experimentation design, and model evaluation. In addition, fundamentals in software engineering, deployment pipelines, and governance—privacy, bias mitigation, and compliance—are essential for turning models into reliable, scalable production systems. Continuous learning and cross-functional collaboration help organizations adapt to evolving technologies and business needs.