AutoGPT and Autonomous AI Agents: The Next Frontier

Overview of AutoGPT and Autonomous AI Agents

AutoGPT and similar autonomous AI agents represent a shift from passive, query-driven AI to proactive, goal-oriented systems that can operate with minimal human input. These agents are designed to select tasks, plan sequences of actions, and execute those actions across tools and services, all while monitoring results and adapting as new information becomes available. In business terms, they enable agent-based automation that can handle complex workflows, retrieve data from disparate sources, and coordinate multiple steps without continuous human prompting. The practical implication is a new class of software that can augment knowledge work, streamline operations, and unlock efficiencies that are difficult to achieve with traditional, script-based automation.

Despite the excitement, it is important to recognize that AutoGPT-enabled agents are not a magic solution. Their effectiveness depends on clear goals, well-defined safety constraints, robust integration with tools and data, and strong governance around how inputs, outputs, and decisions are audited. In real-world deployments, these agents excel when they address repeatable tasks, support decision-making with timely insights, and augment human judgment rather than replace it outright. The technology sits at the intersection of natural language processing, planning, tool use, and safety engineering, and its value emerges from how well these components are orchestrated in practice.

AutoGPT-style agents are not magic; they are a structured orchestration of capabilities, operated under human oversight and clear guardrails.

How AutoGPT Works: Architecture and Process

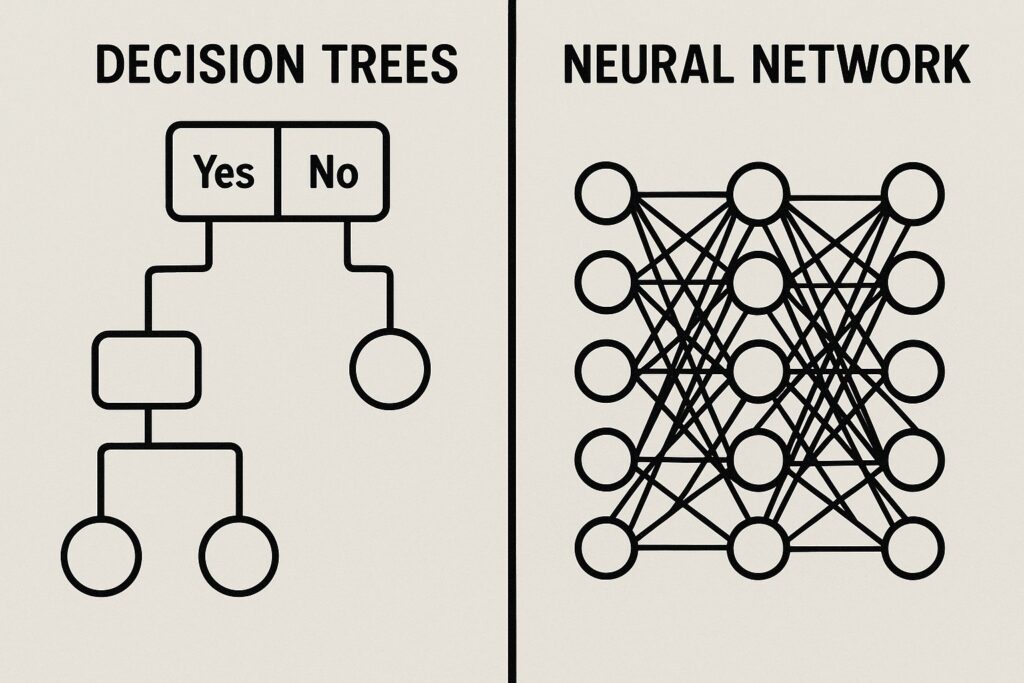

At a high level, an AutoGPT agent follows a loop that begins with a goal description, gathers relevant context, and then generates a plan that outlines the steps needed to achieve the objective. The planning phase leverages language models to decompose tasks, estimate resource requirements, and identify potential risks. Once a plan is generated, the agent proceeds to execute actions through a set of tool interfaces and systems, such as data services, computation engines, or external APIs. After each action, the agent evaluates the outcome, updates its internal state, and chooses whether to proceed with the current plan, revise it, or attempt alternative approaches. This loop continues until the goal is achieved or the agent reaches a defined stop condition.

The architectural stack behind AutoGPT typically includes a few key elements: a planning module that interprets the goal and drafts a sequence of actions; a tool integration layer that can call APIs, run computations, fetch data, or interact with software platforms; a stateful memory or cache that preserves context across steps; and safety monitors that check for policy compliance, data privacy considerations, and potential unintended consequences. In practice, teams build pipelines that connect these elements to business systems, so the agent can, for example, collect market data, synthesize insights, generate reports, and trigger downstream actions with minimal human intervention. The result is a capability that blends reasoning, automation, and real-time decision support into a cohesive workflow.

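```python
# Example loop (Python-style pseudocode; the helper functions are placeholders)
plan = generate_plan(current_state, goal)
while not goal_achieved(current_state, goal):
    # Defined stop conditions: checked before each pass over the plan.
    if time_budget_exceeded() or manual_override():
        break
    for step in plan:
        result = execute(step)
        current_state = update(current_state, result)
        if detect_failure(result):
            # Drop the remaining steps and continue with a revised plan.
            plan = revise_plan(current_state, goal)
            break
    else:
        # Every step ran without failure; plan again if the goal is still unmet.
        plan = generate_plan(current_state, goal)
```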
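The loop above can be made concrete with toy stand-ins. The following is a minimal sketch, not AutoGPT's actual implementation: the "goal" is simply to reach a target number, the executor fails on every third call to simulate a flaky tool, and `run_agent`, `generate_plan`, `execute`, `update`, and `detect_failure` are hypothetical helpers defined here purely for illustration.

```python
import time


def generate_plan(state, goal):
    """Toy planner: one 'add 1' step per unit still missing from the goal."""
    remaining = goal - state["total"]
    return [("add", 1)] * max(remaining, 0)


def execute(step, state):
    """Toy executor: every third call fails, simulating an unreliable tool."""
    state["calls"] += 1
    if state["calls"] % 3 == 0:
        return {"ok": False, "delta": 0}
    _, amount = step
    return {"ok": True, "delta": amount}


def update(state, result):
    """Fold the outcome of one action into the agent's state."""
    state["total"] += result["delta"]
    return state


def detect_failure(result):
    return not result["ok"]


def run_agent(goal, time_budget_s=5.0, max_steps=50):
    state = {"total": 0, "calls": 0}
    deadline = time.monotonic() + time_budget_s
    plan = generate_plan(state, goal)
    while state["total"] < goal:
        # Stop conditions (assumed here: a time budget and a step cap),
        # checked before each pass over the current plan.
        if time.monotonic() > deadline or state["calls"] >= max_steps:
            break
        for step in plan:
            result = execute(step, state)
            state = update(state, result)
            if detect_failure(result):
                break  # abandon the remaining steps of this plan
        # Replan from the latest state, whether the pass failed or finished.
        plan = generate_plan(state, goal)
    return state


final_state = run_agent(goal=5)
```

With this deliberately flaky executor, `run_agent(goal=5)` reaches a total of 5 after 7 tool calls, two of which fail and trigger a replan. A real agent would replace the toy planner with a language-model call and the executor with actual tool integrations.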

Core Capabilities and System Roles

Autonomous agents built around AutoGPT typically exhibit a core set of capabilities that enable them to operate across complex, multi-step tasks. They can decompose a broad objective into concrete subtasks, select the most appropriate tools for each step, monitor progress, and adjust strategy in response to new information. This capability stack makes them valuable as both problem solvers and orchestration engines, capable of coordinating actions across different services, data sources, and decision points. A practical implementation often assigns the agent a role aligned with a business function (for example, a research assistant, an operations optimizer, or a customer interaction assistant) and then tunes its behavior through prompts, tools, and constraints to fit that role.

Key capabilities include:

- Planning and decomposition: translating high-level goals into executable steps and milestones.

- Tool orchestration: selecting, invoking, and chaining multiple APIs and services to perform tasks.

- Execution and automation: carrying out actions with minimal human prompting and monitoring outcomes.

- Self-reflection and debugging: diagnosing failures, proposing alternative approaches, and learning from past runs.

- Knowledge retention and continuity: maintaining context across sessions to preserve progress and avoid redundant work.
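Tool orchestration, as listed above, can be pictured as a registry that maps tool names to callables plus a runner that chains them. The sketch below is illustrative only: `ToolRegistry`, `run_chain`, and the three toy tools (with hard-coded price data) are assumptions made for this example and do not correspond to any particular framework's API.

```python
from typing import Any, Callable, Dict, List

ToolFn = Callable[[Any], Any]


class ToolRegistry:
    """Hypothetical registry: looks tools up by name and invokes them."""

    def __init__(self) -> None:
        self._tools: Dict[str, ToolFn] = {}

    def register(self, name: str, fn: ToolFn) -> None:
        self._tools[name] = fn

    def call(self, name: str, arg: Any) -> Any:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name](arg)


def run_chain(registry: ToolRegistry, steps: List[str], initial: Any) -> Any:
    """Chain tools so that each step's output becomes the next step's input."""
    value = initial
    for name in steps:
        value = registry.call(name, value)
    return value


registry = ToolRegistry()
# Toy tools with made-up data, standing in for real APIs or services.
registry.register(
    "fetch_prices",
    lambda symbol: {"symbol": symbol, "prices": [10.0, 12.0, 11.0]},
)
registry.register(
    "summarize",
    lambda data: {
        "symbol": data["symbol"],
        "mean": sum(data["prices"]) / len(data["prices"]),
    },
)
registry.register(
    "format_report",
    lambda summary: f"{summary['symbol']}: mean price {summary['mean']:.2f}",
)

report = run_chain(registry, ["fetch_prices", "summarize", "format_report"], "ACME")
```

In a real agent the list of step names would come from the planner rather than being hard-coded, and the registry is a natural place to enforce which tools a given role is allowed to call.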

Applications Across Industries

AutoGPT-style agents are being explored across a wide array of industries where routine, data-intensive, or multi-step tasks can benefit from automation and enhanced decision support. In many organizations, the first wave of adoption focuses on lightweight assistant and orchestration roles, where the agent acts as a highly capable assistant that augments human teams rather than replacing them. The most immediate benefits come from speed gains, consistency, and the ability to operate at scale without proportionally increasing headcount. As these agents mature, they are expected to tackle more sophisticated problems that previously required highly specialized human expertise.

Applications span several domains, including customer-facing processes, data analytics, software development, and supply chain operations. The following examples illustrate how autonomous agents can add value in practice:

- Customer service and support automation: handling routine inquiries, routing issues to the right systems, and generating contextual responses for escalation when needed.

- Market research and competitive intelligence: aggregating data from multiple sources, running analyses, and delivering synthesized reports with insights and recommended actions.

- Software development assistance: generating boilerplate code, performing code reviews, and orchestrating CI/CD steps based on high-level requirements.

- Operations and process optimization: monitoring system health, identifying bottlenecks, and initiating corrective workflows across distributed environments.

- Regulatory compliance and auditing support: collecting evidence, compiling logs, and preparing draft reports that align with policy requirements.

Challenges, Risks, and Governance

Beyond the technical capabilities, the deployment of autonomous AI agents raises a set of challenges and governance considerations that organizations must address to achieve durable value. Issues around alignment, transparency, data privacy, and operational risk are central to responsible adoption. The complexity of real-world environments means there will be edge cases, failures, and unexpected interactions with third-party systems. A well-governed implementation includes clear escalation paths, human-in-the-loop checkpoints for high-stakes decisions, and robust monitoring that can detect anomalies early and trigger appropriate mitigations.
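A human-in-the-loop checkpoint of the kind described above can be sketched as a gate in front of action execution. This is a minimal illustration under stated assumptions: the `Action` type, its two risk labels, and the `run_with_checkpoint` helper are hypothetical, and a real deployment would route the approval request to a reviewer rather than a local callback.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    name: str
    risk: str  # "low" or "high"; these labels are an assumption of this sketch


def run_with_checkpoint(
    action: Action,
    perform: Callable[[Action], str],
    approve: Callable[[Action], bool],
) -> str:
    """Run low-risk actions directly; high-risk ones need explicit approval."""
    if action.risk == "high" and not approve(action):
        # Escalation path: the action is not executed, only reported.
        return f"escalated: {action.name}"
    return perform(action)


def perform(action: Action) -> str:
    return f"done: {action.name}"


def deny_all(action: Action) -> bool:
    """Stand-in reviewer that rejects every request."""
    return False


low_outcome = run_with_checkpoint(Action("send_summary_email", "low"), perform, deny_all)
high_outcome = run_with_checkpoint(Action("issue_refund", "high"), perform, deny_all)
```

Here the low-risk action runs without review, while the high-risk one is escalated because the stand-in reviewer declines it.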

Several risk categories deserve particular attention in early deployments:

- Goal alignment and goal drift: ensuring the agent stays true to business objectives without pursuing unintended subgoals.

- Data privacy and security: protecting sensitive information when agents access external tools, data stores, or customer data.

- Reliability and fault tolerance: handling tool failures, API changes, and network issues without cascading problems.

- Auditability and accountability: maintaining a clear record of decisions, actions taken, and outcomes for compliance and learning purposes.
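Two of the risk categories above, reliability and auditability, can be addressed together by wrapping every tool call in a retry loop that records each attempt. The following is a sketch rather than a production pattern: `call_with_audit`, the log entry fields, and the simulated `flaky_fetch` tool are all assumptions introduced for illustration.

```python
import time
from typing import Any, Callable, Dict, List


def call_with_audit(
    name: str,
    fn: Callable[[], Any],
    audit_log: List[Dict[str, Any]],
    max_attempts: int = 3,
    base_delay_s: float = 0.1,
) -> Any:
    """Call fn with retries and exponential backoff, logging every attempt."""
    for attempt in range(1, max_attempts + 1):
        entry: Dict[str, Any] = {"tool": name, "attempt": attempt, "ts": time.time()}
        try:
            result = fn()
        except Exception as exc:
            entry.update(status="error", error=repr(exc))
            audit_log.append(entry)
            if attempt == max_attempts:
                raise  # give up and surface the failure to the caller
            time.sleep(base_delay_s * 2 ** (attempt - 1))
        else:
            entry.update(status="ok")
            audit_log.append(entry)
            return result


# Simulated tool that fails twice before succeeding.
attempts = {"n": 0}


def flaky_fetch() -> Dict[str, int]:
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise ConnectionError("simulated network issue")
    return {"rows": 42}


audit_log: List[Dict[str, Any]] = []
result = call_with_audit("fetch_orders", flaky_fetch, audit_log, base_delay_s=0.0)
```

After this run the log holds three entries (two errors followed by one success), which gives reviewers a record of what the agent tried and when. Re-raising after the final attempt keeps a persistent tool failure visible instead of letting it cascade silently.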

The Future of Generative AI and Autonomy

The trajectory of AutoGPT and autonomous AI agents points toward systems that combine increasing reasoning depth with broader tool integration while maintaining strong governance and safety rails. As models become more capable, agents may handle longer horizons, multi-domain tasks, and more nuanced collaboration with human teams. The scaling of tool ecosystems, including specialized APIs, domain-specific data sources, and programmable environments, will expand the practical boundaries of what autonomous agents can accomplish. At the same time, responsible deployment will demand clearer standards for transparency, explainability, and risk management, particularly in regulated industries or critical decision contexts.

Industry observers expect a future in which autonomous agents work alongside humans to accelerate innovation, reduce repetitive workloads, and free up time for strategic thinking. The collaboration model will emphasize complementary strengths: humans providing judgment, ethics framing, and domain intuition, while agents execute well-defined workflows, surface insights, and continuously learn from outcomes. Realizing this vision will require careful design of reward structures, governance frameworks, and safety protocols that align agent behavior with organizational values and regulatory expectations. It will also necessitate ongoing investment in tooling, monitoring, and upskilling to ensure adoption remains responsible and beneficial.

As autonomy grows, the focus shifts from building smarter agents to engineering safer, auditable, and more controllable partnerships between humans and machines.

FAQ

What distinguishes AutoGPT from traditional automation tools?

AutoGPT differs from traditional automation in that it plans proactively, reasons over multiple steps, and operates across tools and data sources with minimal human prompting. Rather than executing a fixed script, an autonomous agent analyzes goals, generates plans, and adapts as conditions change, feeding the results of earlier steps back into its next decisions.

What safeguards are essential when deploying autonomous AI agents?

Key safeguards include well-defined goals and guardrails, human-in-the-loop review for critical decisions, robust access controls and data privacy protections, auditable logs of actions and outcomes, and ongoing monitoring for anomalies, bias, or policy violations. Safety also involves ensuring that tool integrations are secure and that failure modes are anticipated and recoverable.

In which scenarios is an autonomous agent most valuable?

Autonomous agents offer the most value in scenarios that are data-intensive, require repetitive or long-running coordination across systems, or involve multi-step workflows where human capacity is a bottleneck. They excel in research synthesis, operational optimization, and rapid prototyping, where speed, consistency, and the ability to manage complex toolchains provide meaningful advantages.

How should organizations approach governance and ethics for these systems?

Organizations should establish governance frameworks that define purpose, accountability, risk appetite, and compliance requirements. This includes creating clear ownership for model outputs, setting escalation policies, auditing decision traces, and aligning deployment with ethical guidelines and legal standards. A phased rollout with pilot programs, continuous monitoring, and stakeholder feedback helps ensure responsible adoption.