Bad Data Visualization Examples (and How to Avoid Them)

Introduction: the cost of bad visuals in business decisions

In business contexts, data visuals are tools for rapid comprehension and informed action. When they work well, dashboards and charts accelerate understanding, align teams, and guide strategic choices. When they fail, they mislead, erode trust, and steer decisions toward suboptimal outcomes. The costs can be tangible—missed revenue opportunities, inefficient resource allocation, or biased strategy—and intangible, such as damaged credibility with stakeholders who expect data to reflect reality. This article examines common missteps in data visualization, explains why they occur, and offers practical steps you can apply to your charts, dashboards, reports, and presentations to improve accuracy, clarity, and impact.

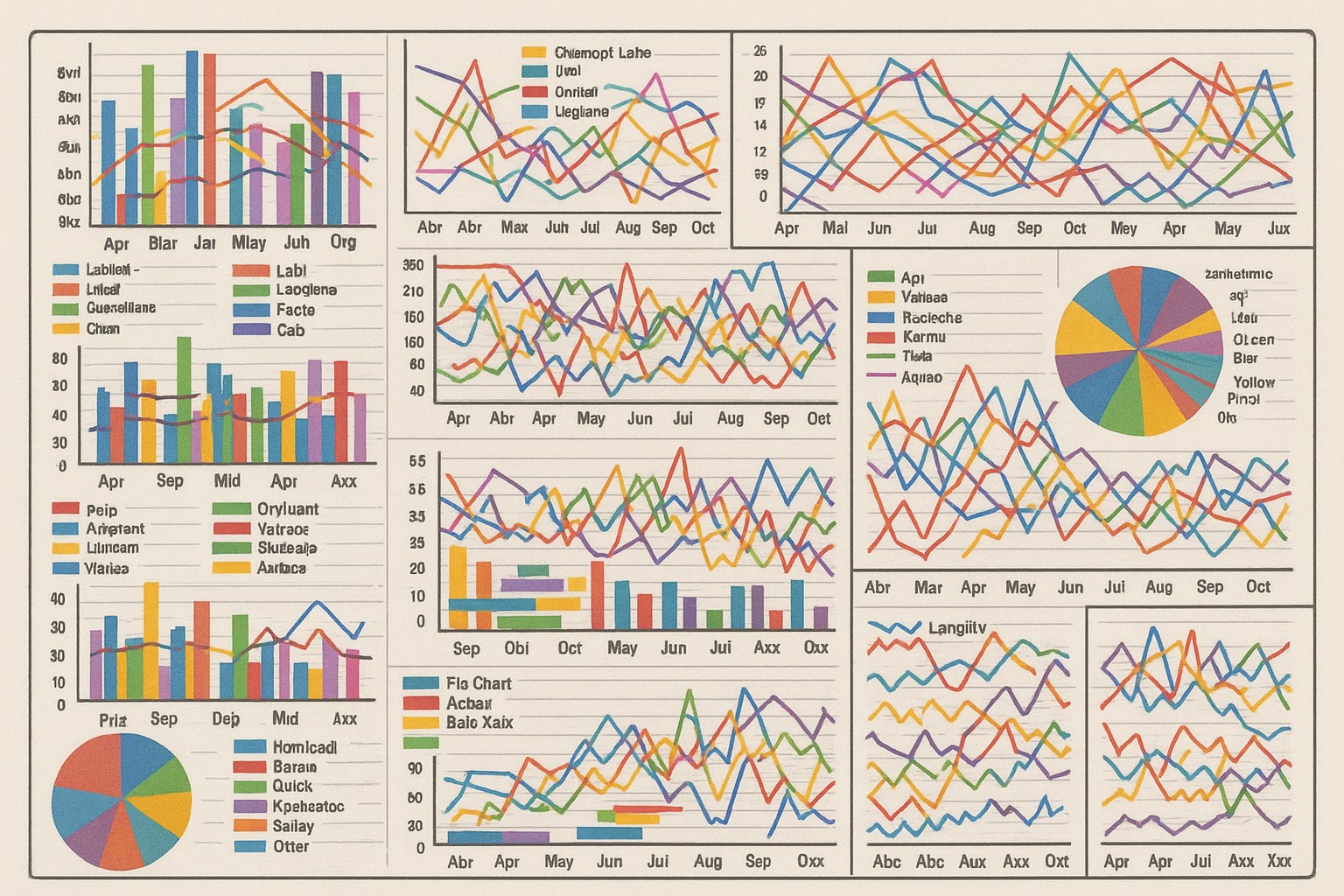

Common visual tricks that mislead stakeholders

Many misleading visuals rely on small perceptual nudges rather than outright fabrication. They exploit how people perceive scale, proportion, and trend. The most persistent issues fall into a few categories: distorted baselines, inconsistent scales, and deceptive grouping or labeling. By recognizing these patterns, you can design or critique visuals so they convey the intended message without unintentionally exaggerating or masking important nuance.

In practice, these tricks often appear in everyday business visuals—from quarterly reviews to investor decks. They are especially dangerous when stakeholders rely on a single chart to judge performance or risk. Below is a representative list of patterns to watch for, followed by concrete remedies you can apply during the design phase.

- Truncated y-axis or baseline not starting at zero, which makes small changes look dramatic

- Non-uniform axis scales across charts that are meant to be compared side by side

- Unnecessary 3D effects or perspective distortions that obscure precise values

- Dual axes without clear labeling or an explicit mapping between scales

- Cherry-picking time ranges or data points that hide volatility or seasonality

- Inconsistent category ordering or color mappings that bias interpretation
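To make the first pattern concrete, the arithmetic behind a truncated baseline can be sketched in a few lines. This is a minimal illustration, not a standard metric: the function names and the revenue figures are hypothetical, and the calculation assumes positive values drawn as bars or heights above the axis.

```python
def apparent_ratio(a: float, b: float, baseline: float = 0.0) -> float:
    """Ratio of the drawn heights of b and a when the axis starts at `baseline`."""
    if baseline >= min(a, b):
        raise ValueError("baseline must be below both values")
    return (b - baseline) / (a - baseline)


def exaggeration(a: float, b: float, baseline: float) -> float:
    """How much larger the drawn ratio is than the ratio on a zero-based axis."""
    return apparent_ratio(a, b, baseline) / apparent_ratio(a, b, 0.0)


# Hypothetical figures for illustration only.
q1_revenue, q4_revenue = 100.0, 110.0

true_ratio = apparent_ratio(q1_revenue, q4_revenue)          # zero-based axis
drawn_ratio = apparent_ratio(q1_revenue, q4_revenue, 90.0)   # axis starts at 90

print(f"true ratio:  {true_ratio:.2f}")
print(f"drawn ratio: {drawn_ratio:.2f}")
print(f"exaggeration factor: {exaggeration(q1_revenue, q4_revenue, 90.0):.2f}")
```

With these numbers, a 10% increase is drawn as a bar twice as tall, which is the kind of gap a reviewer can quantify before a chart ships.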

Real-world case studies of misleading visuals

Case studies from finance, product analytics, and marketing demonstrate how seemingly minor presentation choices can tilt interpretation. In many instances, the underlying data are sound, but the visualization amplifies certain narratives at the expense of accuracy. The following examples summarize typical patterns observed in real-world materials and offer guidance on how to spot and fix them quickly.

Consider the following representative scenarios that recur across industries. Each highlights how choices in chart type, data aggregation, or labeling can distort perception, and they show how straightforward adjustments can restore fidelity without sacrificing storytelling power.

- A line chart of quarterly revenue that starts the y-axis at a positive value, exaggerating growth between early and later quarters

- A donut or pie chart used to convey market shares where the legend or segmentation is incomplete, leading to misinterpretation of which segments truly dominate

- A stacked area chart meant to show composition over time but that omits absolute scales, making total growth appear more or less dramatic than it is

- A bar chart comparing departments that uses uneven bar widths or inconsistent tick spacing, encouraging a perception of bigger gaps than exist

- A dashboard with multiple charts using different color schemes for the same category, forcing readers to relearn the legend on each chart and slowing comprehension
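The stacked-area scenario above is easy to check numerically: a segment's share of the total and its absolute value can move in opposite directions. The sketch below uses made-up segment names and values purely for illustration.

```python
def shares(values: dict) -> dict:
    """Convert absolute values per segment into fractions of the total."""
    total = sum(values.values())
    return {segment: value / total for segment, value in values.items()}


# Hypothetical composition at two points in time (illustrative values).
start = {"A": 50.0, "B": 50.0}
end = {"A": 60.0, "B": 90.0}

share_change_a = shares(end)["A"] - shares(start)["A"]   # change in share of total
absolute_growth_a = end["A"] / start["A"] - 1            # growth in absolute terms

print(f"Segment A share change: {share_change_a:+.0%}")
print(f"Segment A absolute growth: {absolute_growth_a:+.0%}")
```

Here segment A loses ten percentage points of share while growing 20% in absolute terms, so a composition-only chart without an absolute scale would tell only half the story.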

Principles for good data visualization design

To reduce the risk of misinterpretation, it helps to anchor your visuals in a small set of consistent, well-reasoned design principles. These guidelines focus on aligning the visualization with the decision question, using accurate encodings, and preserving context. When applied rigorously, they enable faster, more accurate interpretation while preserving the storyteller’s intent. The following principles are presented in a practical sequence you can apply during chart selection, layout, and annotation.

- Define the decision question first: choose a chart type and encoding that answer that question directly, avoiding extraneous elements that distract or confuse.

- Encode data accurately: ensure the visual encodes magnitude, time, and category in a way that preserves proportional relationships and scale integrity.

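- Use honest, consistent scales: prefer linear scales for quantitative comparisons unless a nonlinear transformation (such as a log scale) is needed to reveal a pattern, and label any such transformation clearly. Start bar-chart axes at zero, because bar length encodes magnitude; for other chart types, choose a baseline that reflects the data’s nature and state it explicitly.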

- Choose the simplest appropriate chart: avoid novelty in favor of interpretability; if a bar chart or line chart conveys the message clearly, don’t default to a more complex form.

- Maintain consistency across visuals: use stable colors, fonts, and axis conventions across a report or dashboard, so users don’t relearn the mapping with each chart.

- Provide context and annotations: offer explicit baselines, data sources, timeframes, and notes to explain unusual patterns or outliers rather than leaving them to readers’ inference.
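One lightweight way to apply the consistency principle is to keep a single category-to-color mapping that every chart reads from. The sketch below is library-agnostic; the category names, hex values, and the `colors_for` helper are hypothetical.

```python
# Single source of truth for category colors (hypothetical categories and values).
CATEGORY_COLORS = {
    "Sales": "#1f77b4",
    "Marketing": "#ff7f0e",
    "Support": "#2ca02c",
}


def colors_for(categories):
    """Return colors in the order given, failing loudly on unmapped categories."""
    missing = [c for c in categories if c not in CATEGORY_COLORS]
    if missing:
        raise KeyError(f"no color assigned for: {missing}")
    return [CATEGORY_COLORS[c] for c in categories]


# Each chart asks the shared mapping instead of picking its own palette.
print(colors_for(["Support", "Sales"]))
```

Failing on an unmapped category is a deliberate choice here: it surfaces a new category at build time rather than letting a plotting library assign it an arbitrary color.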

Audit practices for dashboards and reports

Beyond initial design, ongoing critique is essential to maintain trust as data and business questions evolve. A practical audit focuses on the alignment between the visuals and the underlying data story, checks for perceptual biases, and verifies consistency across the entire set of visuals in a dashboard or report. An efficient audit can be integrated into your weekly or monthly data-review cadence, with lightweight checks that do not slow decision-making.

Key checks that teams often adopt include validating axis baselines, confirming that the same data source is used consistently for related charts, ensuring that time ranges align across visuals, and verifying that color and labeling remain consistent when categories appear in multiple charts. Documenting the checks, owners, and timing helps sustain a culture of data integrity and accountability without creating heavy process overhead.
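The checks described above could be automated roughly as follows. This is a sketch under stated assumptions: `ChartSpec` is a hypothetical description of a chart that a team would populate from its own tooling, the charts passed to `audit` are assumed to be related, and the chart names and source label are invented.

```python
from dataclasses import dataclass, field


@dataclass
class ChartSpec:
    """Hypothetical, tool-independent description of one chart."""
    name: str
    kind: str            # e.g. "bar" or "line"
    y_min: float         # lower bound of the quantitative axis
    source: str          # identifier of the underlying data source
    time_range: tuple    # (start, end) as ISO date strings
    colors: dict = field(default_factory=dict)  # category -> hex color


def audit(charts):
    """Return a list of human-readable findings for a set of related charts."""
    findings = []
    for chart in charts:
        if chart.kind == "bar" and chart.y_min != 0:
            findings.append(f"{chart.name}: bar chart baseline is {chart.y_min}, not 0")
    sources = {chart.source for chart in charts}
    if len(sources) > 1:
        findings.append(f"multiple data sources: {sorted(sources)}")
    ranges = {chart.time_range for chart in charts}
    if len(ranges) > 1:
        findings.append(f"time ranges differ: {sorted(ranges)}")
    first_seen = {}  # category -> (color, chart name) where it first appeared
    for chart in charts:
        for category, color in chart.colors.items():
            seen_color, seen_in = first_seen.setdefault(category, (color, chart.name))
            if seen_color != color:
                findings.append(
                    f"{category}: {seen_color} in {seen_in} but {color} in {chart.name}"
                )
    return findings


year = ("2024-01-01", "2024-12-31")
dashboard = [
    ChartSpec("Revenue by region", "bar", 50.0, "sales_db", year, {"EMEA": "#1f77b4"}),
    ChartSpec("Revenue trend", "line", 50.0, "sales_db", year, {"EMEA": "#ff7f0e"}),
]
for finding in audit(dashboard):
    print(finding)
```

The findings are plain strings so they can be pasted into whatever review log the team already keeps, which fits the goal of avoiding heavy process overhead.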

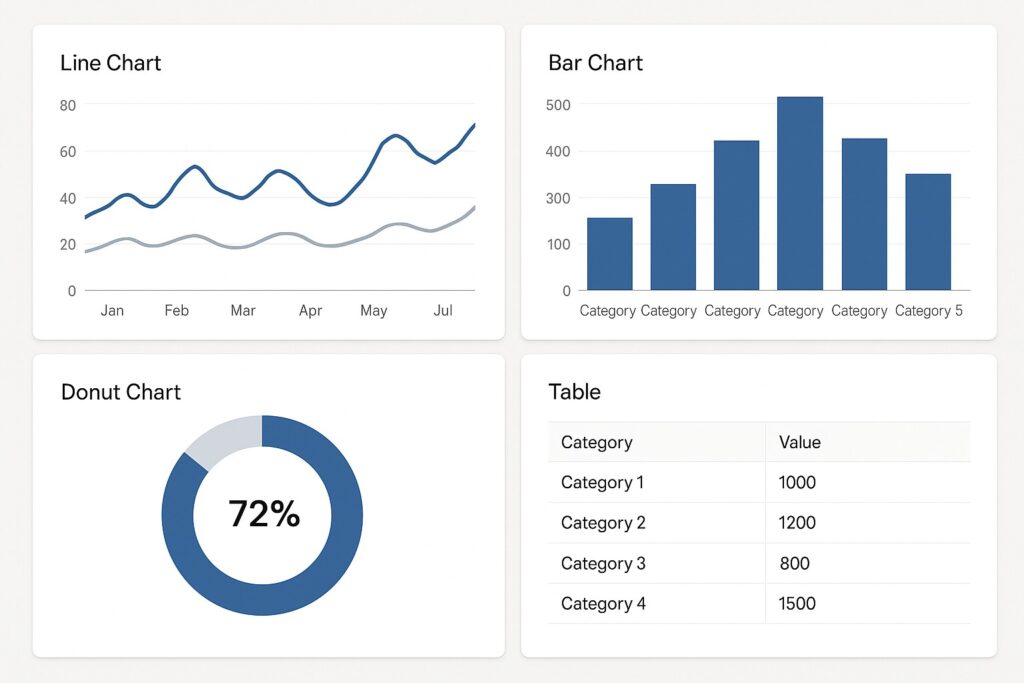

Practical guidelines for dashboards and reports

Dashboards and reports sit at the intersection of storytelling and monitoring. They should deliver a quick read on current status while providing enough depth to understand underlying drivers. The practical guidelines below focus on actionable steps you can apply in most business contexts—from sales dashboards to product analytics and executive summaries.

Start with a clear hierarchy: a primary KPI at the top, with supporting charts that offer context or reveal drivers. Keep layouts simple and scannable, ensuring that the most important chart is easy to locate. Use annotations to flag notable shifts, anomalies, or decisions that hinge on the data. Remember that accessibility matters: ensure sufficient color contrast, provide text alternatives for readers who cannot see the chart, and keep font sizes legible both in print and on high-resolution displays.

When building visuals, consider your audience. Executives may want high-level trends with occasional deep dives, while analysts will expect precise numbers and transparent methods. Providing a controlled drill-down pathway—where the top-level view links to deeper analyses without overwhelming the initial screen—helps different stakeholders get what they need without compromising clarity.

Conclusion: turning bad visuals into trustworthy practice

Bad data visuals are not a failure of data; they are a failure of communication. By starting with clear questions, choosing appropriate chart types, maintaining consistent scales, and annotating decisions and limitations, you can turn every chart into a reliable instrument for decision-making. The heuristics outlined here are not a one-time checklist but a discipline—an ongoing practice of evaluating, testing, and refining visuals with a focus on accuracy, transparency, and accessibility. When teams adopt these practices, the resulting visuals become a business asset that reinforces credibility and accelerates informed action rather than undermining it.

FAQ

What is considered a bad visualization?

A bad visualization misrepresents or obscures the underlying data. It can result from distorted scales, misleading baselines, selective data ranges, or ambiguous labeling. More subtly, even accurate data can be shown in a way that biases interpretation through chart type choices, color mappings, or poor layout. The key is whether the visual facilitates correct interpretation and supports the intended decision; if it does not, it is likely a bad visualization.

How can I evaluate charts for accuracy?

Begin with the question the chart intends to answer, then examine the axis baselines and scales to confirm they reflect the data’s nature. Check whether the data range is appropriate, whether any data points are omitted or aggregated in ways that alter perception, and whether labels, legends, and sources are clear. Compare related visuals to ensure consistent encoding, and look for annotations that explain anomalies or limitations. Finally, test whether a non-expert reader can interpret the chart correctly: ask a colleague to explain it aloud or summarize the takeaway, and compare that summary with the message you intended.

What steps help ensure accessibility in data visuals?

Prioritize high-contrast color combinations, and avoid using color alone to convey meaning; where feasible, add patterns, textures, or direct labels to distinguish critical categories. Use descriptive titles and labels, provide sufficient spacing, and ensure that readers relying on screen readers can access the information. When possible, offer alternative text for charts and provide numeric data or a summary alongside visuals. Keep chart elements large enough for comfortable viewing.
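"High contrast" can be checked numerically. The sketch below uses the contrast-ratio formula from the WCAG 2.x guidelines, which is an assumption on our part since the article does not name a standard; the 4.5:1 threshold is the WCAG AA minimum for normal-size text, and the color values are only examples.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#rrggbb'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colors, from 1 (identical) to 21 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


AA_NORMAL_TEXT = 4.5  # WCAG AA minimum for normal-size text

for label_color in ("#767676", "#777777"):
    ratio = contrast_ratio(label_color, "#ffffff")
    verdict = "passes" if ratio >= AA_NORMAL_TEXT else "fails"
    print(f"{label_color} on white: {ratio:.2f}:1 ({verdict} AA for normal text)")
```

A check like this covers only contrast; it does not replace the other steps above, such as alternative text and non-color encodings.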