SQL vs NoSQL: Understanding Database Differences

Introduction to SQL and NoSQL

SQL databases have underpinned enterprise data management for decades, delivering structured storage, strong data integrity, and powerful ad hoc querying through a standardized language. They rely on a rigid, predefined schema that enforces relationships via foreign keys and joins, ensuring that data remains consistent across tables even as the system scales. In many core business processes—financial transactions, inventory management, customer records, and compliance reporting—SQL databases are valued for predictable performance, mature tooling, and well-understood governance practices.

NoSQL databases emerged as a response to the demands of modern web-scale workloads: high-velocity data, varied formats, and the need for flexible deployment architectures. They emphasize schema flexibility, optimized storage for particular data models (documents, key-value pairs, wide columns, graphs), and horizontal scalability across distributed environments. NoSQL systems often prioritize availability and partition tolerance, enabling rapid growth and resilience in the face of shifting workloads. Together, SQL and NoSQL represent complementary approaches to data management, and many organizations adopt a polyglot persistence strategy that uses each where it fits best.

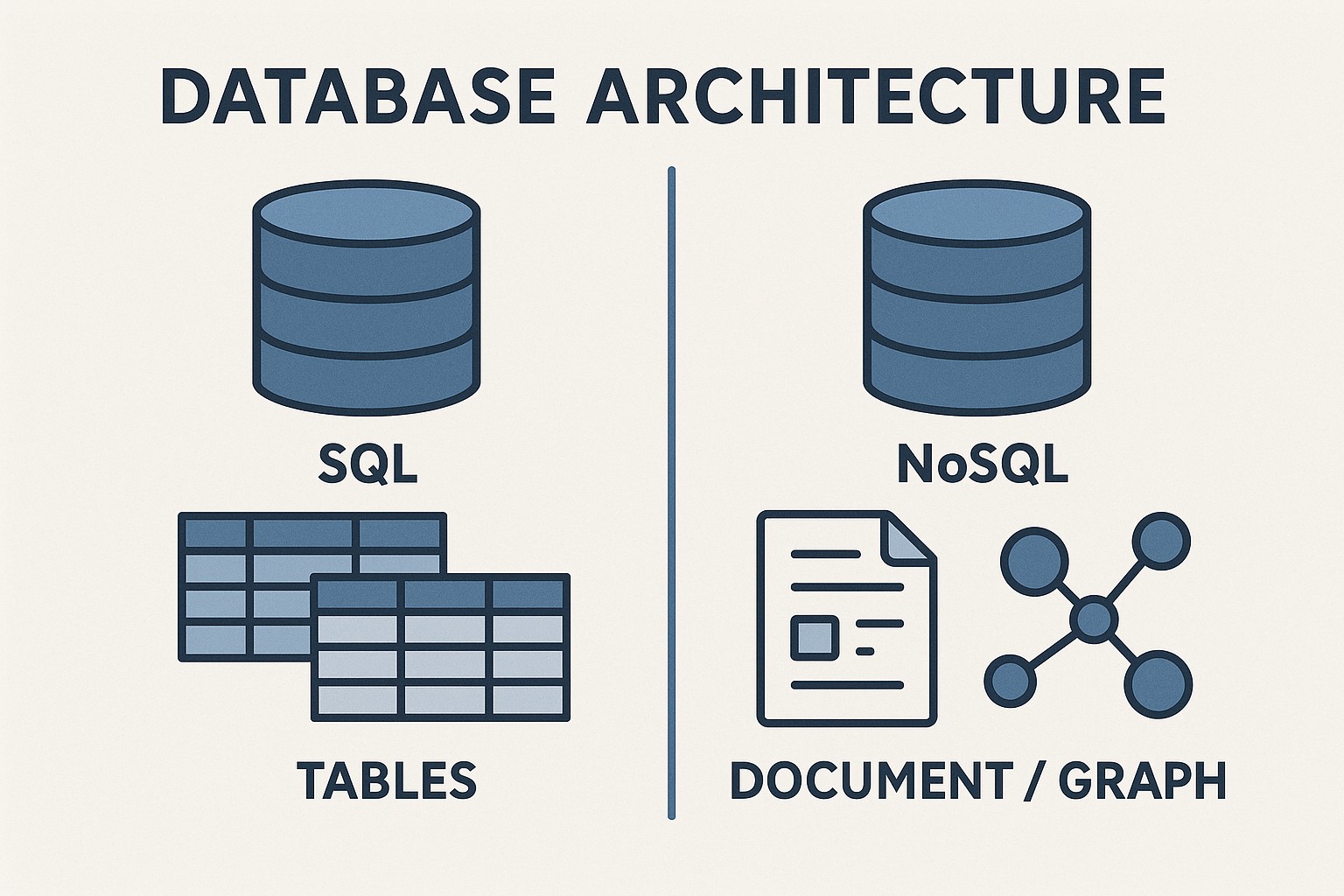

Data models and schemas

In SQL databases, data is organized into tables with rows and columns, and the schema defines data types, constraints, and relationships. Normalization helps minimize redundancy by splitting data into related tables, while foreign keys enforce referential integrity. This model excels when data relationships are well defined and the costs of maintaining consistency are outweighed by the benefits of precise updates, reliable reporting, and complex query capabilities that join multiple entities in a single operation.
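
To make the relational model concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `customers` and `orders` tables, their columns, and the sample rows are hypothetical. The sketch shows two things: the foreign key rejects an order for a customer that does not exist, and a single query joins and aggregates both tables.

```python
import sqlite3


def build_store() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    # SQLite leaves foreign-key enforcement off by default; enable it per connection.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript("""
        CREATE TABLE customers (
            id    INTEGER PRIMARY KEY,
            name  TEXT NOT NULL,
            email TEXT NOT NULL UNIQUE
        );
        CREATE TABLE orders (
            id          INTEGER PRIMARY KEY,
            customer_id INTEGER NOT NULL REFERENCES customers(id),
            total_cents INTEGER NOT NULL CHECK (total_cents >= 0)
        );
    """)
    conn.execute("INSERT INTO customers (id, name, email) VALUES (1, 'Ada', 'ada@example.com')")
    conn.executemany(
        "INSERT INTO orders (id, customer_id, total_cents) VALUES (?, ?, ?)",
        [(10, 1, 2500), (11, 1, 4000)],
    )
    conn.commit()
    return conn


def order_totals(conn: sqlite3.Connection) -> list:
    # One query joins the two entities and aggregates per customer.
    return conn.execute("""
        SELECT c.name, COUNT(o.id), SUM(o.total_cents)
        FROM customers AS c
        JOIN orders AS o ON o.customer_id = c.id
        GROUP BY c.id
    """).fetchall()


conn = build_store()
print(order_totals(conn))  # [('Ada', 2, 6500)]

# Referential integrity: an order pointing at a nonexistent customer is rejected.
rejected = False
try:
    conn.execute("INSERT INTO orders (id, customer_id, total_cents) VALUES (12, 99, 100)")
except sqlite3.IntegrityError as exc:
    rejected = True
    print("rejected:", exc)
```

SQLite only enforces foreign keys after `PRAGMA foreign_keys = ON`. Other relational engines generally enforce declared foreign keys by default.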

NoSQL databases offer a different paradigm: models built around documents, key-value pairs, wide-column families, or graphs, often with schemas that can evolve over time. This flexibility makes it easier to incorporate new data attributes without heavy migrations, and it can support nested structures and varied data shapes within the same collection or bucket. However, without an enforced schema, data validation shifts to application code and indexing must be planned around the queries that matter. Distributed deployments also add the overhead of keeping replicas consistent. The choice of data model should be guided by the nature of the data and the types of queries that matter to the business.

- Relational databases rely on tables, rows, and columns with explicit schema definitions and strong normalization.

- NoSQL databases emphasize schema flexibility, enabling formats such as JSON-like documents, key-value pairs, column families, or graph structures.

- Normalization versus denormalization shapes storage, performance, and update patterns, with tradeoffs in query simplicity and write throughput.

- Join capabilities and complex transactional semantics differ across systems, influencing how data integrity is enforced in practice.

- Indexing and query strategies evolve with the data model, affecting read performance and the ease of analytics.
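
The normalization-versus-denormalization tradeoff can be sketched with plain Python dictionaries standing in for a document collection. This is a simplification: no real document database is involved, and the field names are hypothetical. Embedding the customer's name in each order makes reads self-contained, but a rename must then rewrite every copy, while the normalized form changes one record.

```python
# Hypothetical denormalized collection: each order embeds a copy of the customer.
orders = [
    {"_id": 10, "customer": {"id": 1, "name": "Ada"}, "items": [{"sku": "A1", "qty": 2}]},
    # Documents in one collection may differ in shape (this one carries a gift note).
    {"_id": 11, "customer": {"id": 1, "name": "Ada"}, "items": [{"sku": "B7", "qty": 1}],
     "gift_note": "Happy birthday"},
    {"_id": 12, "customer": {"id": 2, "name": "Grace"}, "items": []},
]

# Hypothetical normalized equivalent: customer data lives in exactly one place.
customers = {1: {"name": "Ada"}, 2: {"name": "Grace"}}


def rename_denormalized(docs: list, customer_id: int, new_name: str) -> int:
    """Rewrite every embedded copy; return the number of documents touched."""
    touched = 0
    for doc in docs:
        if doc["customer"]["id"] == customer_id:
            doc["customer"]["name"] = new_name
            touched += 1
    return touched


def rename_normalized(table: dict, customer_id: int, new_name: str) -> int:
    """Change the single source record; readers resolve the name via a lookup (join)."""
    table[customer_id]["name"] = new_name
    return 1


print(rename_denormalized(orders, 1, "Ada L."))   # 2
print(rename_normalized(customers, 1, "Ada L."))  # 1
```

The write cost grows with the number of embedded copies, which is why denormalization tends to suit data that is read often and changed rarely.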

Scalability and performance considerations

SQL databases have historically scaled by increasing the capacity of a single server (vertical scaling) and by thoughtfully partitioning data (sharding) or adding read replicas to handle growing read traffic. In practice, horizontal scale for SQL often involves more infrastructure coordination and carefully designed schemas to preserve performance and integrity as data volumes rise. Modern SQL engines and distributed architectures have reduced some of these barriers, but the core tradeoff remains: strong consistency and rich transactions can complicate distributed execution, especially for very large write-intensive workloads.

NoSQL databases are frequently designed with horizontal scalability as a primary goal. They partition data across many nodes, so throughput can grow close to linearly as nodes are added, provided the partition key spreads load evenly. This approach aligns well with workloads that generate large volumes of writes or reads with modest transactional requirements, or that require flexible schemas and rapid iteration. Caching strategies, eventual or tunable consistency, and specialized storage formats help NoSQL systems achieve low latency at scale, but they can make it harder to guarantee up-to-date results or to enforce constraints that span documents. Performance planning for NoSQL often centers on data access patterns, partition keys, and the cost of maintaining consistency across distributed replicas.
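
Partition keys decide which node owns each record. The sketch below assumes simple hash-modulo placement with hypothetical `user:<n>` keys. It uses `hashlib` because Python's built-in `hash()` for strings is randomized per process by default and would route the same key differently after a restart.

```python
import hashlib


def shard_for(partition_key: str, num_shards: int) -> int:
    """Map a partition key to a shard index using a stable hash."""
    digest = hashlib.sha256(partition_key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


keys = [f"user:{i}" for i in range(10_000)]  # hypothetical partition keys

# Count how many keys land on each of 4 shards.
counts = [0] * 4
for key in keys:
    counts[shard_for(key, 4)] += 1
print(counts)

# Resizing cost: how many keys change shards when a fifth shard is added.
moved = sum(shard_for(key, 4) != shard_for(key, 5) for key in keys)
print(f"{moved / len(keys):.0%} of keys move")
```

The keys spread roughly evenly across four shards, but modulo placement is expensive to resize. A key stays put only when its hash has the same remainder modulo 4 and modulo 5, which holds for 4 of every 20 residues, so about 80% of keys relocate. Many distributed stores use consistent hashing or range-based partitioning to limit that movement.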

Consistency, transactions, and CAP

The CAP theorem states that when a network partition occurs, a distributed system must choose between Consistency (every read sees the most recent write) and Availability (every request receives a response); it cannot guarantee both while tolerating the partition. CAP's consistency is not the same as the C in ACID, which refers to preserving declared constraints. SQL databases have traditionally leaned toward strong consistency and ACID transactions, offering predictable isolation and durable writes across related rows and tables. This makes SQL a natural fit for applications where correctness is non-negotiable and multi-row or multi-table operations must succeed atomically.
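
Atomicity across rows is easiest to see in a transfer between two accounts. This sketch uses Python's `sqlite3` with a hypothetical `accounts` table. The connection's context manager commits when the block succeeds and rolls back when any statement raises. The transfer deliberately credits before it debits, so a failed debit must undo work already done.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE accounts (
        id      TEXT PRIMARY KEY,
        balance INTEGER NOT NULL CHECK (balance >= 0)
    )
""")
conn.executemany("INSERT INTO accounts VALUES (?, ?)", [("alice", 100), ("bob", 50)])
conn.commit()


def transfer(conn: sqlite3.Connection, src: str, dst: str, amount: int) -> None:
    # "with conn" commits on success and rolls back if any statement raises,
    # so the credit and the debit are applied together or not at all.
    with conn:
        conn.execute("UPDATE accounts SET balance = balance + ? WHERE id = ?", (amount, dst))
        conn.execute("UPDATE accounts SET balance = balance - ? WHERE id = ?", (amount, src))


def balances(conn: sqlite3.Connection) -> dict:
    return dict(conn.execute("SELECT id, balance FROM accounts ORDER BY id"))


transfer(conn, "alice", "bob", 30)
print(balances(conn))  # {'alice': 70, 'bob': 80}

try:
    transfer(conn, "alice", "bob", 500)  # the debit violates CHECK (balance >= 0)
except sqlite3.IntegrityError:
    pass
print(balances(conn))  # {'alice': 70, 'bob': 80}; bob's credit was rolled back
```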

NoSQL systems have historically favored availability when partitions occur, opting for eventual consistency or configurable consistency levels to stay responsive in partitioned networks. Over time, many NoSQL platforms added stronger transactional guarantees, sometimes limited to single-document operations or to specific use cases, while others embraced broader multi-document transactions. The practical takeaway is that consistency models are not one-size-fits-all: the right balance depends on workload characteristics, latency requirements, and acceptable levels of data staleness for a given application.
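
Tunable consistency is often expressed with quorums. With N replicas, a write waits for W acknowledgements and a read consults R replicas; choosing R + W > N guarantees that every read set overlaps the latest write set. The toy model below is an illustration only. The `QuorumStore` class is hypothetical and ignores failures, clocks, and conflict resolution. It assumes the worst case, where writes reach the first W replicas and reads contact the last R.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Replica:
    version: int = 0
    value: Optional[str] = None


class QuorumStore:
    """Hypothetical toy model of N replicas with per-request W and R quorums."""

    def __init__(self, n: int) -> None:
        self.replicas: List[Replica] = [Replica() for _ in range(n)]
        self.version = 0

    def write(self, value: str, w: int) -> None:
        # Worst case: only the first W replicas acknowledge and store the write.
        self.version += 1
        for replica in self.replicas[:w]:
            replica.version, replica.value = self.version, value

    def read(self, r: int) -> Optional[str]:
        # Worst case: the read reaches the last R replicas; the newest version wins.
        contacted = self.replicas[-r:]
        return max(contacted, key=lambda rep: rep.version).value


strong = QuorumStore(n=3)
strong.write("v1", w=2)
print(strong.read(r=2))  # v1: R + W = 4 > N = 3, so the read set overlaps the write set

weak = QuorumStore(n=3)
weak.write("v1", w=1)
print(weak.read(r=1))  # None: R + W = 2 <= N = 3, so this read misses the write
```

Lowering R and W reduces latency per request, which is exactly the staleness-for-responsiveness tradeoff described above.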

When to choose SQL vs NoSQL

Deciding between SQL and NoSQL depends on a combination of data characteristics, access patterns, regulatory considerations, and organizational capabilities. A careful assessment of current and projected workloads helps prevent over- or under-provisioning and avoids expensive migrations later. In practice, many teams default to one model for their core systems and keep the flexibility to move or replicate data into other stores as requirements evolve.

- Data relationships and integrity: If your domain features complex inter-table relationships, strong constraints, and transactional consistency across multiple entities, SQL is typically the safer choice. If relationships are simple or can be modeled with denormalized structures and idempotent operations, NoSQL may be suitable.

- Query complexity and reporting needs: SQL shines for sophisticated joins, aggregations, and ad-hoc analytics using established tooling. NoSQL can support analytics well when data is modeled with the query patterns in mind, but extensive cross-collection joins or multi-collection reporting may be less convenient.

- Scale and throughput requirements: For high-velocity, massive-scale workloads that demand elastic, global distribution, NoSQL often provides more straightforward horizontal scaling. If transactional consistency across many entities is paramount, SQL remains the clearer choice, and distributed SQL variants extend that guarantee to larger write volumes.

- Schema stability and evolution: When the schema is stable and changes are infrequent, SQL’s strong typing and migration processes deliver predictability. If the data model is rapidly evolving or varies across clients, NoSQL’s flexibility can reduce migration friction.

- Regulatory, auditing, and governance needs: Enterprises with strict audit trails, fixed schemas, and mature governance processes often favor SQL for its mature tooling and enforceable constraints. NoSQL can still comply with governance requirements but may require additional layers to ensure traceability and policy enforcement.

- Team expertise and ecosystem: The choice may reflect the skills and experiences of the development and operations teams, as well as the availability of mature tooling, support, and community knowledge for the chosen model.
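
The schema-evolution criterion can be illustrated by adding the same field both ways. The sketch assumes a hypothetical `users` table and dict-based documents. The relational side applies an explicit `ALTER TABLE` migration with a default; the document side leaves old records as they are and supplies the default when reading.

```python
import sqlite3

# Relational: the schema change is an explicit migration applied to every row.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT NOT NULL)")
conn.execute("INSERT INTO users (name) VALUES ('Ada')")
conn.execute("ALTER TABLE users ADD COLUMN locale TEXT NOT NULL DEFAULT 'en'")
print(conn.execute("SELECT name, locale FROM users").fetchall())  # [('Ada', 'en')]

# Document-style: only new documents carry the field; readers supply a default.
users = [
    {"_id": 1, "name": "Ada"},                    # written before 'locale' existed
    {"_id": 2, "name": "Grace", "locale": "fr"},  # written after
]


def locale_of(doc: dict) -> str:
    # The "migration" now lives in application code.
    return doc.get("locale", "en")


print([locale_of(u) for u in users])  # ['en', 'fr']
```

The document approach skips the migration step, but every reader must now know the default, so some of the migration effort moves from the database into application code.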

Operational considerations and modernization patterns

Beyond data modeling and architecture, operational realities guide the path to production. Tooling maturity, monitoring, backups, disaster recovery, and security controls differ across SQL and NoSQL ecosystems. Organizations should assess vendor support, cloud or on-premises options, and the ease of integrating the database with existing data pipelines, analytics platforms, and application runtimes. A thoughtful strategy also considers long-term maintenance costs, vendor roadmaps, and the risk of lock-in when selecting a particular technology stack.

Modern data architectures increasingly embrace polyglot persistence: using multiple data stores intentionally to handle different workloads. A practical pattern couples stable, structured data in SQL with high-velocity, semi-structured data in NoSQL, while leveraging data pipelines to synchronize or replicate data for analytics and reporting. When approaching modernization, begin with a clear mapping of data access patterns, identify the source of truth for each dataset, and plan an incremental migration with rollback mechanisms and robust testing. This approach minimizes disruption while enabling teams to experiment with the most suitable data stores for each workload.

- Start with a hybrid or phased approach: keep core transactional data in a relational store while moving caches, session state, or specialized datasets to NoSQL where they fit best.

- Invest in data pipelines, adapters, and change data capture to synchronize data across stores without causing application downtime.

- Define governance, access controls, and security policies that span multiple data stores and ensure consistent auditing and compliance across environments.
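
Change data capture can be sketched in miniature with SQLite triggers that append to a change log, plus a sync function that replays new entries into a dict standing in for a NoSQL catalog. The table names, the `sync` helper, and the checkpoint scheme are hypothetical. Production CDC tools such as Debezium typically read the database's transaction log rather than relying on triggers.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE products (id INTEGER PRIMARY KEY, name TEXT NOT NULL, price_cents INTEGER NOT NULL);
    -- Append-only change log filled by triggers (a stand-in for reading the transaction log).
    CREATE TABLE changelog (
        seq        INTEGER PRIMARY KEY AUTOINCREMENT,
        op         TEXT NOT NULL,
        product_id INTEGER NOT NULL
    );
    CREATE TRIGGER products_ins AFTER INSERT ON products
        BEGIN INSERT INTO changelog (op, product_id) VALUES ('upsert', NEW.id); END;
    CREATE TRIGGER products_upd AFTER UPDATE ON products
        BEGIN INSERT INTO changelog (op, product_id) VALUES ('upsert', NEW.id); END;
    CREATE TRIGGER products_del AFTER DELETE ON products
        BEGIN INSERT INTO changelog (op, product_id) VALUES ('delete', OLD.id); END;
""")


def sync(conn: sqlite3.Connection, doc_store: dict, last_seq: int) -> int:
    """Apply log entries after last_seq to the document store; return the new checkpoint."""
    rows = conn.execute(
        "SELECT seq, op, product_id FROM changelog WHERE seq > ? ORDER BY seq", (last_seq,)
    ).fetchall()
    for seq, op, pid in rows:
        if op == "delete":
            doc_store.pop(pid, None)
        else:
            # Copy the row's current state; a row deleted later is skipped here
            # and removed when its 'delete' entry is replayed.
            row = conn.execute(
                "SELECT name, price_cents FROM products WHERE id = ?", (pid,)
            ).fetchone()
            if row is not None:
                doc_store[pid] = {"_id": pid, "name": row[0], "price_cents": row[1]}
        last_seq = seq
    return last_seq


catalog: dict = {}
checkpoint = 0
with conn:
    conn.execute("INSERT INTO products VALUES (1, 'Lamp', 3000)")
    conn.execute("INSERT INTO products VALUES (2, 'Desk', 15000)")
    conn.execute("UPDATE products SET price_cents = 2500 WHERE id = 1")
checkpoint = sync(conn, catalog, checkpoint)
print(catalog[1])  # {'_id': 1, 'name': 'Lamp', 'price_cents': 2500}

with conn:
    conn.execute("DELETE FROM products WHERE id = 2")
checkpoint = sync(conn, catalog, checkpoint)
print(sorted(catalog))  # [1]
```

Persisting the checkpoint lets the consumer resume after a crash, and because each upsert copies the row's current state, replaying an entry twice leaves the catalog unchanged.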

Case studies and practical patterns

In practice, a retailer might use SQL as the system of record for orders and customer accounts, leveraging robust transactions to prevent double charges and ensure accurate inventory alignment. A NoSQL store could power product catalogs, session data, and real-time recommendations, where schema flexibility and low-latency reads at scale are valuable. A media platform might store user profiles in a document store for flexible attributes while maintaining relational data for billing and subscriptions in SQL. The key is to design around access patterns, not just data volumes, and to establish clear data ownership across stores.

Organizations that succeed with mixed stores typically invest in clear boundaries, explicit data synchronization strategies, and monitoring that spans both technologies. They also embrace a culture of continual evaluation—periodically reassessing workloads as traffic, product requirements, and regulatory constraints evolve. The result is a resilient architecture that can adapt to changing requirements without forcing a monolithic choice upfront.

FAQ

What is the key difference between SQL and NoSQL?

The essential distinction lies in data modeling and consistency guarantees. SQL uses structured schemas with relations, supports joins, and enforces ACID transactions for strong integrity. NoSQL embraces flexible data models such as documents, key-value pairs, or graphs, often favoring scalability and availability with tunable or eventual consistency, which can simplify horizontal scaling and rapid development for certain workloads.

Can NoSQL be used for transactional workloads?

Some NoSQL systems offer transactional capabilities, including single-document transactions or limited multi-document transactions, and several have expanded support for broader transactions. However, achieving comprehensive ACID guarantees across multiple documents or collections may be more complex and may involve tradeoffs in performance or latency. The suitability depends on the required isolation level, the scope of transactional boundaries, and the acceptable latency for operations.

Is it possible to migrate from SQL to NoSQL?

Migration is possible but typically non-trivial. It requires translating relational models into the target NoSQL data model, redesigning queries, and often denormalizing data to align with the chosen store. An incremental approach—starting with components that benefit most from NoSQL, implementing data pipelines to synchronize updates, and maintaining backward compatibility during the transition—helps reduce risk and downtime.
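
Denormalizing during a migration often means folding child rows into the parent document. This sketch assumes hypothetical `customers` and `orders` tables in SQLite and embeds each customer's orders as an array, producing one document per customer. A `LEFT JOIN` keeps customers who have no orders.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
    CREATE TABLE orders (
        id          INTEGER PRIMARY KEY,
        customer_id INTEGER NOT NULL REFERENCES customers(id),
        total_cents INTEGER NOT NULL
    );
    INSERT INTO customers VALUES (1, 'Ada'), (2, 'Grace'), (3, 'Linus');
    INSERT INTO orders VALUES (10, 1, 2500), (11, 1, 4000), (12, 2, 900);
""")


def export_customer_documents(conn: sqlite3.Connection) -> list:
    """Denormalize: embed each customer's orders inside one document per customer."""
    docs: dict = {}
    rows = conn.execute("""
        SELECT c.id, c.name, o.id, o.total_cents
        FROM customers AS c
        LEFT JOIN orders AS o ON o.customer_id = c.id
        ORDER BY c.id, o.id
    """)
    for cid, name, oid, total in rows:
        doc = docs.setdefault(cid, {"_id": cid, "name": name, "orders": []})
        if oid is not None:  # LEFT JOIN yields NULLs for customers without orders
            doc["orders"].append({"order_id": oid, "total_cents": total})
    return list(docs.values())


for doc in export_customer_documents(conn):
    print(doc)
```

Embedding fits when orders are usually read together with their customer. If the array could grow without bound, keeping orders in a separate collection keyed by customer id may be the better target shape.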

What is NewSQL?

NewSQL refers to a family of modern relational databases that aim to deliver the horizontal scalability associated with NoSQL while keeping SQL as the primary language and maintaining ACID guarantees. These systems typically shard data automatically across nodes and replicate it with consensus protocols such as Raft or Paxos, achieving high throughput without abandoning the familiar relational model and strong consistency.

How should a company decide which path to take?

A practical decision framework starts with the business requirements: assess data relationships, query patterns, throughput needs, latency targets, regulatory constraints, and team capabilities. Consider a phased, polyglot approach that uses SQL for systems of record and NoSQL for high-velocity or flexible data, with clear data synchronization strategies. Finally, design for evolution—build with observability, portability, and future migration in mind to avoid locking into a single technology choice prematurely.