Stack Overflow vs AI Coding Assistants: Developer Support

Overview of developer support ecosystems

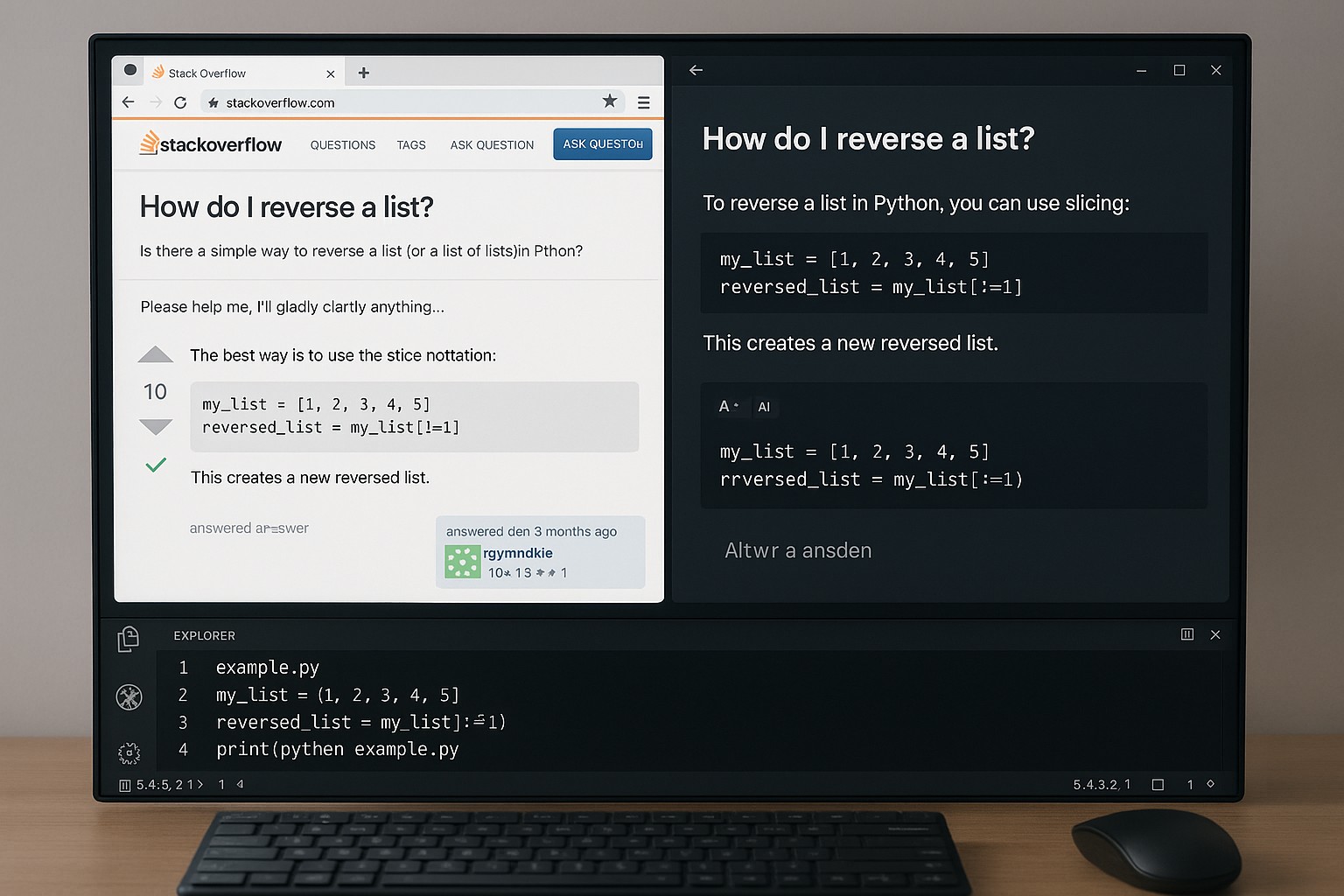

In modern software development, teams rely on a mix of structured documentation, community wisdom, and, increasingly, AI-assisted tooling to solve problems quickly. Stack Overflow and similar forums provide a large, varied repository of real-world coding challenges and proven solutions, created by a broad community of practitioners. AI coding assistants, by contrast, aim to deliver on-demand, context-aware guidance embedded directly within the developer’s workflow. Each modality addresses distinct moments in the problem-solving journey, from discovering a known pattern to generating a first-pass implementation.

Understanding where each approach shines—and where it falters—helps organizations design workflows that maximize productivity while minimizing risk. This article examines traditional community-driven support and AI-powered coding assistants through a practical lens, highlighting strengths, limitations, and how teams can blend them effectively in real-world software delivery.

Stack Overflow: Traditional community-driven support

Stack Overflow’s core value proposition is community-driven problem solving. Developers post clear questions, supply minimal reproducible examples, and rely on peers to review, critique, and refine potential solutions. The upvote–downvote mechanism, reputation incentives, and a long history of resolved Q&As create a living knowledge base that is searchable and continuously updated. It is especially strong for language- or framework-specific questions, debugging edge cases, and integrating snippets into production code with real-world constraints.

However, the model hinges on the availability of domain-savvy peers and the specificity of questions. Complex architectural decisions, proprietary constraints, or organizational guidelines may fall outside the forum’s typical scope. While the platform can surface valuable patterns and quick fixes, it may not always deliver end-to-end guidance or reflect an organization’s internal standards, security policies, or integrated tooling decisions.

- Broad coverage across languages, frameworks, and domains

- Peer-vetted answers with transparent voting and review trails

- Rich, real-world code examples and practical workflows

- Archival knowledge remains discoverable through search and tagging

- Signals of reliability, such as accepted answers and comment feedback

Stack Overflow: Limitations and risks

While powerful, Stack Overflow presents several limitations that organizations should acknowledge. The quality of answers can vary, and context may be missing or misaligned with a specific codebase. Answers can become outdated as libraries and APIs evolve, yet older threads may persist with deprecated guidance. Sensitive or proprietary information can inadvertently be shared in public posts, creating governance and security concerns for teams. The platform is also less suited to addressing large-scale design decisions or internal standards that require alignment with internal tooling and processes.

To mitigate these risks, teams commonly complement Stack Overflow with internal documentation, code reviews, and standardized onboarding materials. It is essential to search for existing, relevant, and up-to-date guidance within organization-specific resources before applying external recommendations. Relying on community content alone for critical decisions increases the chance of misalignment with internal requirements or compliance constraints.

- Variable quality and context misalignment between questions and answers

- Outdated or deprecated guidance that no longer reflects current ecosystems

- Difficulty scaling for architecture-level decisions or governance topics

- Potential for sensitive data exposure when posting code snippets

- Fragmented threads and lack of end-to-end context for multi-faceted problems

AI coding assistants: Accelerating problem solving

AI coding assistants integrated into development environments can speed up exploration, scaffolding, and debugging. They can generate boilerplate code, propose alternative approaches, explain complex concepts, and assist with quick refactoring or test scaffolding. When used as an on-demand copilot, these tools can reduce context switching, shorten turnaround times, and help junior developers learn by example, all while staying within the editor where the code resides.

Yet AI assistants are not infallible. They may hallucinate code, misinterpret requirements, or propose solutions that do not align with internal standards or security practices. There are important concerns around data handling, licensing, and proprietary code being sent to external models. Consequently, human review, adherence to coding standards, and governance around the use of AI in production code remain essential components of any strategy that relies on these tools.

- Rapid scaffolding, boilerplate generation, and exploratory coding

- Contextual explanations, debugging hints, and learning support within the IDE

- Consistent, cross-language suggestions and quick refactoring ideas

- Support for unit-test generation and edge-case exploration

Comparative analysis and best practices

In practice, most development teams blend both approaches to balance speed with verifiability. Stack Overflow remains a valuable source of domain knowledge, real-world patterns, and community-tested solutions when the problem has a known, shareable context. AI coding assistants excel at rapid prototyping, repetitive coding tasks, and in-editor guidance, making them particularly useful for onboarding and reducing cognitive load during routine work. The optimal workflow leverages AI assistance for fast iteration and Stack Overflow for deeper domain insight and peer verification, with internal documentation and code reviews tying the threads together.

To minimize risk, organizations should implement governance around the use of AI and external knowledge sources. Clear policies on sensitive data, licensing, and attribution help prevent data leakage and ensure compliant usage. Integrating these tools into a structured development process—where AI-generated code is automatically scanned by linters and reviewed by peers before integration—can preserve quality while maintaining velocity.
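
The gate described above, where code is linted and peer-reviewed before integration, can be sketched as a small check that a CI job might run. This is a minimal illustration, not a definitive implementation: the `ChangeRequest` fields, the `merge_blockers` function, and the default of one approval are all assumptions made for the example, and a real pipeline would read these values from its own CI and review tooling.

```python
from dataclasses import dataclass


@dataclass
class ChangeRequest:
    """Hypothetical summary of a proposed change, as a CI job might assemble it."""
    ai_assisted: bool    # author declared that AI tooling produced or edited the code
    lint_passed: bool    # result of the automated linter run
    peer_approvals: int  # number of approving peer reviews


def merge_blockers(change: ChangeRequest, min_approvals: int = 1) -> list[str]:
    """Return the reasons a change may not be merged; an empty list means it may."""
    blockers = []
    # Linting applies to every change, whoever (or whatever) wrote it.
    if not change.lint_passed:
        blockers.append("linter reported errors")
    # The approval threshold is the same for AI-assisted and human-written code;
    # the origin is only recorded in the message for traceability.
    if change.peer_approvals < min_approvals:
        origin = "AI-assisted" if change.ai_assisted else "human-written"
        blockers.append(
            f"{origin} change needs {min_approvals} peer approval(s), "
            f"has {change.peer_approvals}"
        )
    return blockers
```

Applying one threshold to every change keeps the rule easy to audit; teams that want stricter handling of AI-assisted changes could raise `min_approvals` for those cases.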

| Channel | Strengths | Limitations | Best Use |
|---|---|---|---|
| Stack Overflow | Broad coverage; peer-vetted | Variable quality; context gaps | Real-world debugging; search |
| AI Coding Assistants | Rapid iteration; in-editor help | Hallucinations; privacy/licensing | Prototyping; scaffolding |

Practical considerations for teams

Successful adoption requires concrete governance and practical workflows. First, establish policies on what content can be shared with AI tools, and avoid sending secrets, credentials, or proprietary business logic to external models. Second, define when to rely on AI assistance versus traditional sources such as internal docs, design reviews, and code ownership. Third, implement review practices that treat AI-suggested code as a draft, subject to the same standards as human-generated code, including security checks and dependency management. Finally, consider licensing and compliance implications for code produced with AI assistance, and ensure your usage aligns with vendor terms and organizational risk appetite.

Measuring impact is equally important. Teams should track time-to-solution, the quality of resulting code, and the rate of post-deployment issues to gauge whether AI and community-driven support are delivering the expected value. Regular retrospectives that examine how effectively each channel contributes to learning, velocity, and risk reduction help organizations fine-tune their balance over time.
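
As a rough sketch of how the metrics above might be tallied per support channel, the following groups resolved tasks by channel and reports the median time-to-solution and the share of tasks that later caused a post-deployment issue. The `ResolvedTask` record, its field names, and the channel labels are hypothetical; real data would come from a team's own issue tracker and incident log.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median


@dataclass
class ResolvedTask:
    """Hypothetical record of one resolved task."""
    channel: str                    # e.g. "ai_assistant", "stack_overflow", "internal_docs"
    hours_to_solution: float        # elapsed time from question to working fix
    caused_post_deploy_issue: bool  # whether the fix was later linked to an incident


def summarize_by_channel(tasks: list[ResolvedTask]) -> dict[str, dict[str, float]]:
    """Group tasks by channel and compute simple per-channel metrics."""
    grouped = defaultdict(list)
    for task in tasks:
        grouped[task.channel].append(task)

    summary = {}
    for channel, items in grouped.items():
        summary[channel] = {
            "tasks": len(items),
            "median_hours_to_solution": median(t.hours_to_solution for t in items),
            # True counts as 1, so the sum is the number of tasks with an issue.
            "post_deploy_issue_rate": sum(t.caused_post_deploy_issue for t in items) / len(items),
        }
    return summary
```

The median is used rather than the mean so that a single unusually long investigation does not dominate a channel's figure; with small samples, either number should be read as a conversation starter for a retrospective rather than a verdict.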

FAQ

Below are common questions teams ask as they balance Stack Overflow and AI-enabled coding tools. Each answer is concise and anchored in practical, governance-focused considerations.

What is the primary difference between Stack Overflow and AI coding assistants?

Stack Overflow provides human-generated, peer-vetted answers drawn from a broad community and documented experience, while AI coding assistants offer automated, context-aware guidance and code generation integrated into the developer’s editor. Their reliability and scope differ: Stack Overflow benefits from real-world validation and domain nuance, whereas AI assistants excel at rapid iteration and scaffolding but require careful validation to avoid inaccuracies and policy breaches.

Can AI assistants replace Stack Overflow for complex debugging?

No. AI assistants can accelerate exploration and generate starter code, but they cannot fully replace the depth of domain knowledge, long-term maintainability decisions, and governance that human communities provide. For complex debugging that involves architectural considerations, security constraints, and organizational standards, human input and internal documentation remain essential.

How should developers balance AI assistance with human input?

Use AI as a first-pass helper to reduce repetitive tasks, bootstrap implementations, and assist onboarding. Then validate AI outputs through code reviews, pair programming, and adherence to internal guidelines. Maintain a strong habit of consulting internal docs, design reviews, and subject-matter experts for decisions that affect security, data handling, or long-term maintainability.

What about security and privacy concerns when using AI assistants?

Avoid sharing secrets, credentials, or proprietary logic with external AI services. Prefer enterprise-grade or on-premise AI solutions when possible, and implement guardrails that strip sensitive information from prompts. Align usage with corporate policies, data classification schemes, and licensing terms to minimize risk and ensure compliance.
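
A guardrail that strips sensitive information from prompts could look something like the sketch below. The patterns and the `redact_prompt` function are illustrative assumptions only: they catch a few common shapes (credential-like `key=value` pairs, AWS-style access key IDs, PEM private key blocks) and will miss many others, so a filter like this complements policy and review and does not replace them.

```python
import re

# Hypothetical patterns for illustration; a real deployment would tune these
# to its own secret formats and data classification scheme.
REDACTION_PATTERNS = [
    # name=value or name: value pairs whose name suggests a credential,
    # e.g. DB_PASSWORD=..., api-key: ..., auth_token = ...
    (
        re.compile(
            r"(?i)\b(\w*(?:password|passwd|secret|token|api[_-]?key)\w*)(\s*[:=]\s*)(\S+)"
        ),
        r"\1\2[REDACTED]",
    ),
    # AWS-style access key IDs: "AKIA" followed by 16 uppercase alphanumerics
    (re.compile(r"\bAKIA[0-9A-Z]{16}\b"), "[REDACTED_AWS_KEY]"),
    # PEM-encoded private key blocks, possibly spanning several lines
    (
        re.compile(
            r"-----BEGIN [A-Z ]*PRIVATE KEY-----.*?-----END [A-Z ]*PRIVATE KEY-----",
            re.DOTALL,
        ),
        "[REDACTED_PRIVATE_KEY]",
    ),
]


def redact_prompt(prompt: str) -> str:
    """Return a copy of the prompt with likely secrets replaced by placeholders."""
    for pattern, replacement in REDACTION_PATTERNS:
        prompt = pattern.sub(replacement, prompt)
    return prompt
```

Running the filter on the client side, before a prompt leaves the developer's machine, means the external service never sees the original values; the placeholders keep enough structure for the assistant to still reason about the surrounding code.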

How do costs and licensing compare between Stack Overflow and AI tools?

Stack Overflow offers a free-to-use experience for individual developers, with enterprise versions and paid support options for organizations. AI tools typically operate on subscription models, with licensing that governs code generation, data handling, and model usage. When evaluating cost, consider not only direct subscription fees but also productivity gains, potential licensing constraints on generated code, and the risk-adjusted value of improved throughput versus the need for thorough human oversight.