AI Governance Frameworks and Tools for Responsible AI

Strategic alignment and governance objectives

Organizations increasingly rely on AI to drive strategic outcomes, but the same power that enables efficiency also creates risk. An effective AI governance framework sits at the intersection of strategy, risk management, and ethics, ensuring that AI initiatives align with business objectives, risk appetite, and regulatory expectations. By codifying decision rights, policies, and disclosure obligations, boards and executive teams can provide clear oversight while empowering product teams to innovate responsibly. A well-defined governance posture translates strategic intent into actionable controls across people, process, and technology, enabling consistent behavior across multiple AI domains and use cases.

To operationalize governance, assign explicit roles and responsibilities using a RACI or RASCI model, including owners for data, models, and deployment pipelines. Establish cross-functional accountability that includes risk, compliance, privacy, security, legal, and business units, with escalation paths for incidents or misalignment. Integrate ethical considerations into product development by embedding privacy-by-design, bias detection, explainability, and auditability into the earliest stages of design and into ongoing monitoring. This approach helps reduce friction with regulators and customers while creating a clear line of sight from strategy to operations.
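The RACI matrix can be kept as plain data and checked automatically. The sketch below assumes that setup. It verifies that each activity has exactly one Accountable role and at least one Responsible role, and it treats the Accountable role as the default escalation target. The activity and role names are hypothetical. The optional `S` (Supportive) code covers the RASCI variant.

```python
# Hypothetical RACI/RASCI matrix: activity -> {role: code}.
# Codes: R = Responsible, A = Accountable, S = Supportive (RASCI only),
# C = Consulted, I = Informed.
RACI = {
    "data_sourcing": {"data_owner": "A", "data_engineering": "R", "privacy": "C", "legal": "I"},
    "model_validation": {"model_risk": "A", "ml_engineering": "R", "compliance": "C", "business_owner": "I"},
    "production_deploy": {"business_owner": "A", "platform_team": "R", "security": "S", "risk": "I"},
}

VALID_CODES = {"R", "A", "S", "C", "I"}


def validate_raci(matrix):
    """Return a list of problems; an empty list means the matrix passes."""
    problems = []
    for activity, roles in matrix.items():
        codes = list(roles.values())
        unknown = sorted(set(codes) - VALID_CODES)
        if unknown:
            problems.append(f"{activity}: unknown codes {unknown}")
        accountable = codes.count("A")
        if accountable != 1:
            problems.append(f"{activity}: expected exactly 1 'A', found {accountable}")
        if "R" not in codes:
            problems.append(f"{activity}: no 'R' assigned")
    return problems


def escalation_owner(matrix, activity):
    """Return the single Accountable role, used here as the escalation target."""
    owners = [role for role, code in matrix[activity].items() if code == "A"]
    if len(owners) != 1:
        raise ValueError(f"{activity} has {len(owners)} accountable roles")
    return owners[0]


print(validate_raci(RACI))  # []
print(escalation_owner(RACI, "model_validation"))  # model_risk
```

Keeping exactly one Accountable role per activity leaves escalation paths unambiguous. A check like this could run in CI whenever the matrix changes.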

Governance is not a one-off project but a living capability. Create a governance charter and policy framework, review and update them on a regular cadence, and manage each policy through a defined lifecycle. Define KPIs and metrics to monitor adoption, risk reduction, and transparency, and implement routine audits, red-teaming exercises, and independent reviews. Extend governance to procurement, vendor risk management, and third-party AI services so that external solutions meet the same standards as internal ones. The result is a scalable, repeatable pattern of responsible AI deployment that supports innovation without compromising trust or compliance.

- Establish a formal AI governance charter and policy suite.

- Align AI initiatives with enterprise risk management and compliance requirements.

- Define accountability, decision rights, and escalation paths for AI systems and outputs.

- Implement ongoing monitoring, auditing, and remediation processes.

- Integrate vendor and supply chain governance for AI-enabled solutions and services.
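Once policies live in a register, the review cadence can be checked mechanically. This Python sketch assumes a hypothetical register that maps each policy to its last review date and its review interval in days. It lists the overdue policies, most overdue first. The policy names and intervals are illustrative only.

```python
from datetime import date, timedelta

# Hypothetical policy register: name -> (last review date, review interval in days).
POLICIES = {
    "acceptable_use": (date(2024, 1, 15), 365),
    "model_risk_standard": (date(2024, 6, 1), 180),
    "vendor_ai_review": (date(2023, 11, 1), 365),
}


def overdue_reviews(register, today):
    """Return (policy, days_overdue) pairs for overdue policies, most overdue first."""
    overdue = []
    for name, (last_reviewed, interval_days) in register.items():
        due = last_reviewed + timedelta(days=interval_days)
        if today > due:
            overdue.append((name, (today - due).days))
    return sorted(overdue, key=lambda item: item[1], reverse=True)


print(overdue_reviews(POLICIES, date(2025, 1, 1)))
# [('vendor_ai_review', 62), ('model_risk_standard', 34)]
```

The count of overdue policies and the number of days each is overdue could also serve as one of the governance KPIs mentioned above.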

Frameworks and control mechanisms

Many organizations anchor their AI governance in established frameworks that provide structured processes for risk management, assurance, and accountability. The NIST AI Risk Management Framework (AI RMF), for example, organizes AI risk management into four core functions (Govern, Map, Measure, and Manage) that apply across the AI lifecycle, from design and data collection through deployment and monitoring. Regional and international standards and regulations complement it. The EU AI Act takes a risk-based approach to transparency, safety, and accountability, with the most extensive obligations falling on high-risk systems. ISO/IEC JTC 1/SC 42 has developed standards for AI governance and data management, including ISO/IEC 42001 for AI management systems. Together, these frameworks help organizations translate high-level principles into concrete controls and measurable outcomes, while preserving flexibility to adapt to rapidly evolving AI technologies.

Turning frameworks into practice requires mapping governance objectives to explicit control activities across the lifecycle. This includes risk assessments at design time, validation and verification during development, ongoing monitoring in production, incident response capabilities, and independent audit trails. The governance model should cover data governance, model risk management, bias mitigation, explainability, security, privacy, and vendor management. By tying these controls to a risk-based taxonomy and a clear policy hierarchy, organizations create a robust, auditable system that scales with the number and complexity of AI applications.
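One way to tie controls to a risk-based taxonomy is to declare which controls each tier requires and then compute the gaps against recorded evidence. The tiers, the control names, and the rule that higher tiers inherit lower-tier controls are all assumptions for illustration. They are not requirements taken from NIST, ISO, or the EU AI Act.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    """Hypothetical internal risk tiers; a higher value means higher risk."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Controls introduced at each tier; a tier also inherits every lower tier's controls.
TIER_CONTROLS = {
    RiskTier.LOW: {"design_risk_assessment", "model_card"},
    RiskTier.MEDIUM: {"pre_release_validation", "production_monitoring"},
    RiskTier.HIGH: {"bias_evaluation", "independent_review", "incident_playbook", "audit_trail"},
}


def required_controls(tier):
    """Union of the controls for this tier and all lower tiers."""
    required = set()
    for t in RiskTier:
        if t <= tier:
            required |= TIER_CONTROLS[t]
    return required


def control_gaps(tier, evidence):
    """Required controls with no recorded evidence, sorted for stable reports."""
    return sorted(required_controls(tier) - set(evidence))


evidence = {"design_risk_assessment", "model_card", "pre_release_validation", "production_monitoring"}
print(control_gaps(RiskTier.MEDIUM, evidence))  # []
print(control_gaps(RiskTier.HIGH, evidence))
# ['audit_trail', 'bias_evaluation', 'incident_playbook', 'independent_review']
```

Declaring the mapping as data keeps the policy hierarchy auditable. Moving a system to a higher tier then automatically surfaces the additional controls it needs.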

- NIST AI Risk Management Framework (AI RMF)

- ISO/IEC JTC 1/SC 42 standards for AI governance and data management

- EU AI Act and related regulatory expectations (transparency, accountability, and safety)

- Internal model risk management processes and independent testing and validation

Tools and implementation patterns

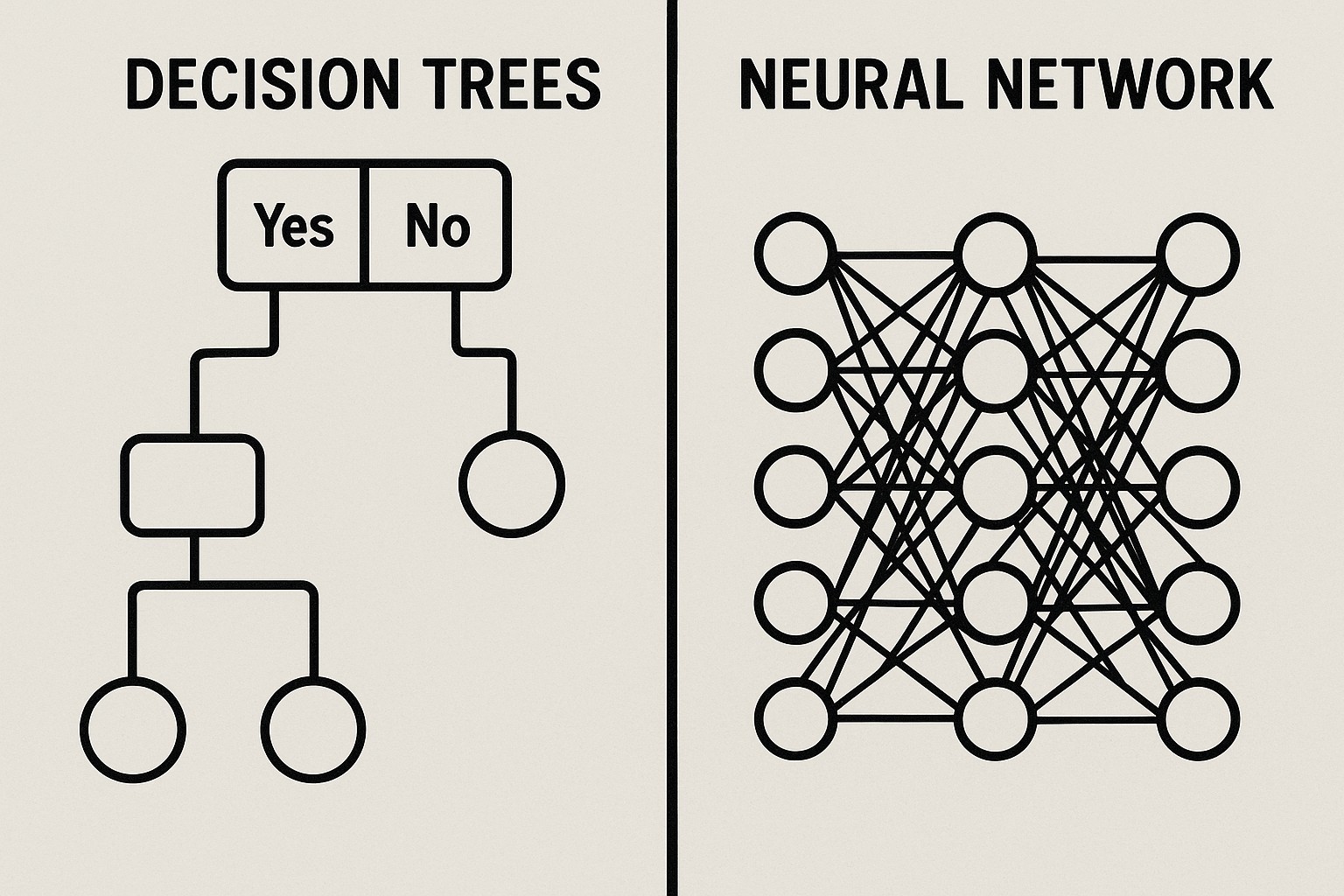

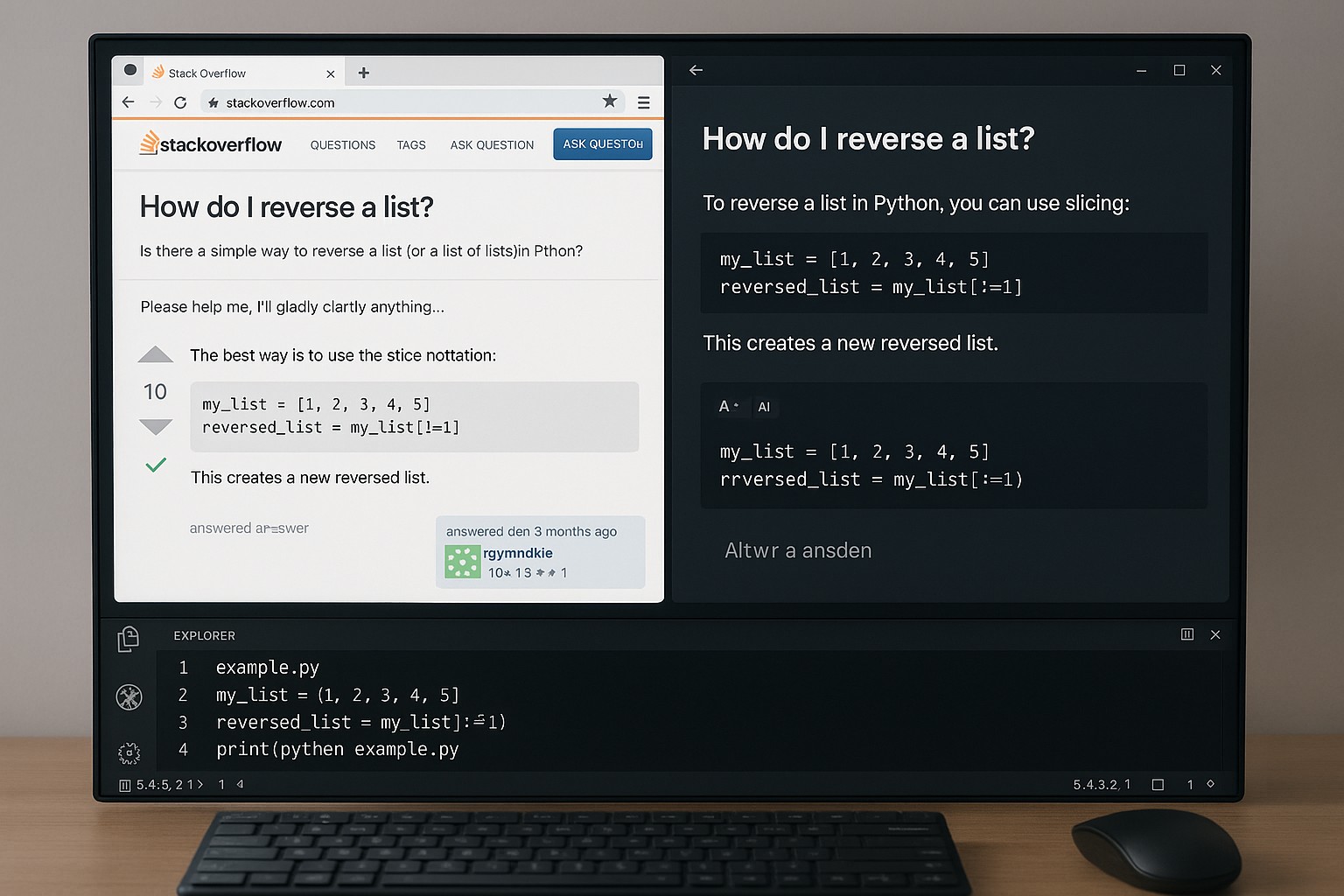

In practice, organizations rely on a suite of AI governance tools to operationalize the framework. Model risk management platforms coordinate development, validation, monitoring, and remediation activities; data lineage tools track provenance and transformations across data pipelines; and policy engines enforce guardrails in deployment environments. In addition, bias and fairness testing tools enable systematic evaluation across demographic groups and scenarios, while explainability and monitoring dashboards provide visibility to product teams, auditors, and regulators. Integrating these tools within a centralized governance platform reduces silos and helps teams demonstrate compliance during audits or regulatory reviews.
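Bias testing often starts with simple group-level comparisons. The sketch below computes three things: the selection rate for each group, the largest difference between those rates (the demographic parity difference), and the ratio of the lowest rate to the highest. It assumes binary predictions and a single sensitive attribute, and the sample data is made up. Some teams flag ratios below 0.8, a threshold borrowed from the US "four-fifths" heuristic. That flag is a screening signal, not a legal or statistical verdict.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Share of positive (1) predictions within each group."""
    if len(predictions) != len(groups):
        raise ValueError("predictions and groups must have the same length")
    positives = defaultdict(int)
    totals = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {group: positives[group] / totals[group] for group in totals}


def parity_report(predictions, groups):
    """Demographic parity difference and lowest-to-highest selection-rate ratio."""
    rates = selection_rates(predictions, groups)
    lowest, highest = min(rates.values()), max(rates.values())
    return {
        "rates": rates,
        "parity_difference": highest - lowest,
        "impact_ratio": lowest / highest if highest > 0 else 1.0,
    }


# Hypothetical model outputs for two groups.
preds = [1, 0, 1, 1, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
report = parity_report(preds, groups)
print(report["rates"])  # {'a': 0.75, 'b': 0.25}
print(report["parity_difference"])  # 0.5
# impact_ratio is about 0.33 here, well below a 0.8 screening threshold.
```

Dedicated fairness libraries offer many more metrics. A plain computation like this one, however, is easy to audit and to rerun as part of monitoring.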

Effective implementation also requires repeatable patterns rather than one-off efforts. Start with a risk-based deployment model that emphasizes pilots, staged rollouts, and automatic rollback in response to safety, fairness, or privacy concerns. Build auditability into the pipeline with immutable logs and versioned artifacts, and implement alerting, incident response playbooks, and post-incident reviews. Finally, treat governance as code: version-control, test, and deploy policy definitions, data access controls, and monitoring configurations with the same rigor as production software. This approach ensures consistency, traceability, and continuous improvement across all AI systems.
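A staged rollout policy treated as code might look like the following. The stages and guardrail thresholds are plain data, so they can be version-controlled and reviewed. A small function then decides whether to promote, complete, or roll back. The metric names, thresholds, and stage sizes are hypothetical. Missing telemetry is treated as a breach, so the gate fails closed.

```python
# Hypothetical rollout policy kept as data so it can be versioned and reviewed.
ROLLOUT_POLICY = {
    "stages": [0.01, 0.10, 0.50, 1.00],  # share of traffic at each stage
    "guardrails": {
        "error_rate": ("max", 0.02),
        "impact_ratio": ("min", 0.80),
        "pii_leak_count": ("max", 0),
    },
}


def breached(metrics, guardrails):
    """Names of guardrails that are violated or missing from the observed metrics."""
    failures = []
    for name, (kind, limit) in guardrails.items():
        value = metrics.get(name)
        if value is None:
            failures.append(name)  # missing telemetry fails closed
        elif kind == "max" and value > limit:
            failures.append(name)
        elif kind == "min" and value < limit:
            failures.append(name)
    return failures


def next_action(stage_index, metrics, policy=ROLLOUT_POLICY):
    """Return ('rollback', reasons), ('promote', next_share) or ('complete', final_share)."""
    failures = breached(metrics, policy["guardrails"])
    if failures:
        return ("rollback", failures)
    stages = policy["stages"]
    if stage_index + 1 < len(stages):
        return ("promote", stages[stage_index + 1])
    return ("complete", stages[-1])


healthy = {"error_rate": 0.01, "impact_ratio": 0.9, "pii_leak_count": 0}
print(next_action(0, healthy))  # ('promote', 0.1)
print(next_action(1, {**healthy, "error_rate": 0.05}))  # ('rollback', ['error_rate'])
```

Because the policy is data, a change to a threshold shows up in code review and version history like any other change. The gate function itself can be unit-tested.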

- Model risk management platforms and governance dashboards

- Bias and fairness testing and auditing tools

- Explainability, interpretability, and decision-traceability tooling

- Data governance and lineage tooling
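Immutable, traceable logs are often approximated with a hash chain. Each entry stores a SHA-256 hash computed over its own content together with the previous entry's hash. The sketch below uses only the Python standard library, and the event fields are hypothetical. A hash chain makes tampering evident rather than impossible: anyone able to rewrite the whole log could recompute every hash. For that reason, the latest hash would typically also be stored in an independent system.

```python
import hashlib
import json


class AuditLog:
    """Append-only log in which each entry embeds the previous entry's hash,
    so editing any earlier entry makes verify() fail."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(event, prev_hash):
        # Canonical JSON (sorted keys) so the same content always hashes the same way.
        body = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
        return hashlib.sha256(body.encode("utf-8")).hexdigest()

    def append(self, event):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {"event": event, "prev": prev_hash, "hash": self._digest(event, prev_hash)}
        self.entries.append(entry)
        return entry["hash"]

    def verify(self):
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash or entry["hash"] != self._digest(entry["event"], prev_hash):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"action": "model_registered", "model": "credit-scoring", "version": "1.4.0"})
log.append({"action": "deployed", "model": "credit-scoring", "traffic_share": 0.1})
print(log.verify())  # True
log.entries[0]["event"]["version"] = "1.5.0"  # simulate tampering
print(log.verify())  # False
```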

What is an AI governance framework, and why is it important?

An AI governance framework is a structured set of policies, roles, processes, and controls designed to ensure AI systems are developed and operated responsibly. It covers fairness, transparency, safety, privacy, regulatory compliance, and accountability across the entire lifecycle—from data collection and model development to deployment and ongoing monitoring. By providing clear decision rights, risk management, and auditability, such a framework helps organizations innovate with AI while safeguarding stakeholders and building trust.

How can organizations start implementing AI governance in practice?

Begin with executive sponsorship and a charter that defines scope, objectives, and success metrics. Build a cross-functional governance team, map the data and model lifecycles for critical use cases, and implement a minimal viable set of controls to address high-risk areas first. Establish risk assessments, policy enforcement mechanisms, and a pilot program to test governance in a controlled environment. Scale through repeatable processes, tooling, and regular reviews that reinforce accountability and continuous improvement.
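Addressing high-risk areas first can start with a simple likelihood-times-impact score. This sketch assumes hypothetical 1 to 5 scales, made-up use cases, and a cut-off of 12 for the first wave of controls. Real programs would calibrate the scales and the threshold to their own risk appetite.

```python
# Hypothetical use cases scored on 1-5 likelihood and impact scales.
USE_CASES = [
    {"name": "resume_screening", "likelihood": 4, "impact": 5},
    {"name": "support_chatbot", "likelihood": 3, "impact": 3},
    {"name": "internal_doc_search", "likelihood": 2, "impact": 2},
    {"name": "credit_limit_model", "likelihood": 3, "impact": 5},
]


def prioritize(use_cases, high_threshold=12):
    """Rank use cases by likelihood * impact and pick the first wave for controls."""
    scored = [(uc["name"], uc["likelihood"] * uc["impact"]) for uc in use_cases]
    scored.sort(key=lambda item: item[1], reverse=True)
    first_wave = [name for name, score in scored if score >= high_threshold]
    return scored, first_wave


ranking, first_wave = prioritize(USE_CASES)
print(first_wave)  # ['resume_screening', 'credit_limit_model']
```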

What metrics indicate effective AI governance?

Effective AI governance is reflected in both process and outcome metrics. These include policy adoption rates, time-to-remediation after incidents, coverage of risk domains (data, model, security, privacy), the severity and closure rates of audit findings, and the proportion of AI deployments governed by automated controls. Additional indicators include stakeholder confidence, regulatory readiness, and demonstrated improvements in model fairness and transparency over time.
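Several of these process metrics can be computed directly from incident, audit, and deployment records. The record fields and sample data below are hypothetical. Median hours to remediation is only one possible definition of time-to-remediation.

```python
from datetime import datetime
from statistics import median

# Hypothetical records; field names are illustrative.
incidents = [
    {"opened": datetime(2024, 3, 1, 9), "resolved": datetime(2024, 3, 3, 9)},
    {"opened": datetime(2024, 4, 10, 12), "resolved": datetime(2024, 4, 10, 18)},
    {"opened": datetime(2024, 5, 5, 8), "resolved": None},  # still open
]
findings = [
    {"severity": "high", "closed": True},
    {"severity": "high", "closed": False},
    {"severity": "medium", "closed": True},
    {"severity": "low", "closed": True},
]
deployments = [
    {"name": "resume_screening", "automated_controls": True},
    {"name": "support_chatbot", "automated_controls": True},
    {"name": "credit_limit_model", "automated_controls": True},
    {"name": "internal_doc_search", "automated_controls": False},
]


def ratio(count, total):
    """Safe division that returns None when there is nothing to measure."""
    return count / total if total else None


def governance_kpis(incidents, findings, deployments):
    resolved_hours = [
        (i["resolved"] - i["opened"]).total_seconds() / 3600
        for i in incidents
        if i["resolved"] is not None
    ]
    return {
        "median_hours_to_remediation": median(resolved_hours) if resolved_hours else None,
        "open_incidents": sum(1 for i in incidents if i["resolved"] is None),
        "finding_closure_rate": ratio(sum(f["closed"] for f in findings), len(findings)),
        "open_high_severity_findings": sum(
            1 for f in findings if f["severity"] == "high" and not f["closed"]
        ),
        "automated_control_coverage": ratio(
            sum(d["automated_controls"] for d in deployments), len(deployments)
        ),
    }


print(governance_kpis(incidents, findings, deployments))
```

Tracking these values per reporting period, rather than as one-off snapshots, is what reveals the improvement over time.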