Now Reading: Data Lakehouse: Combining Data Lakes and Warehouses

-

01

Data Lakehouse: Combining Data Lakes and Warehouses

Data Lakehouse: Combining Data Lakes and Warehouses

Foundations of the Data Lakehouse

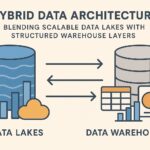

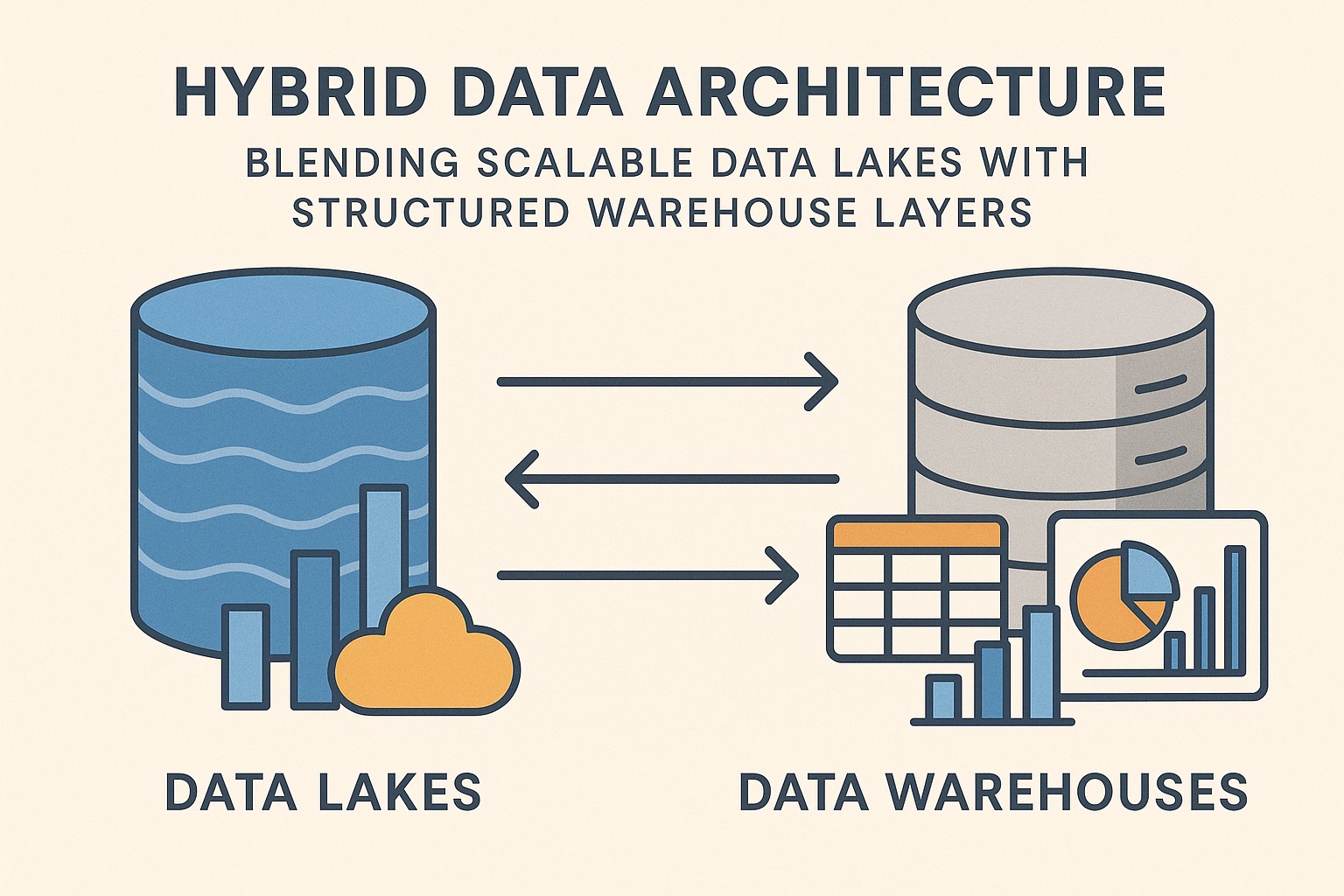

The data lakehouse represents an evolution in how organizations store, process, and analyze data. It combines the scalable, low-cost storage and raw data flexibility of a data lake with the structured governance, performance, and transactional guarantees of a data warehouse. In practical terms, a lakehouse lets you keep diverse data types—structured, semi-structured, and unstructured—in a single repository, while enabling reliable SQL-based analytics, ACID transactions, and consistent data semantics across workloads. For industries handling complex data ecosystems, such as healthcare, this fusion unlocks faster insights without forcing teams to extract data from one system to feed another.

From a business-technical perspective, the lakehouse model reduces data movement and duplication, simplifies data pipelines, and improves governance. Stakeholders can run exploratory data science, operational dashboards, and production-grade analytics against the same dataset. This coherence helps reduce latency between data generation and decision-making, which is particularly valuable when patient outcomes, regulatory reporting, and population health trends hinge on timely information. At the same time, the architecture remains adaptable to evolving data sources and analytics techniques, supporting experimentation while maintaining control over data quality and privacy.

Architectural Pillars of a Lakehouse

A lakehouse rests on several interlocking components that together enable reliable analytics at scale. The storage layer leverages object storage and open data formats, such as Parquet or ORC, to hold large volumes of raw and refined data. A unified metadata and schema layer provides a consistent catalog, lineage, and semantic definitions so that analysts and applications can interpret data uniformly, regardless of its origin. The compute and query layer exposes SQL and other analytical interfaces, with engines that can operate on both batch and streaming data and that support features like time travel and transactional integrity.

In addition to storage, compute, and metadata, a governance and security layer enforces access controls, auditing, data lineage, and data quality rules across the entire data lifecycle. This combination enables concurrent workloads—data engineering, data science, and business intelligence—without the traditional bottlenecks of siloed systems. A practical view of these pillars, in a simplified order, would be:

- Storage and open formats that store diverse data types cost-effectively.

- Compute and query engines that support concurrent workloads with strong consistency guarantees.

- Metadata and data cataloging to ensure discoverability, governance, and semantic clarity.

- Governance, security, and reliability to protect privacy, enable compliance, and sustain trust.

In healthcare contexts, these pillars translate into capabilities such as harmonizing patient records, imaging data, genomics, and claims data; supporting near-real-time monitoring while maintaining strict access controls; and enabling auditable data lineage for compliance with regulations such as HIPAA and GDPR. The architectural design must balance flexibility with discipline so that data remains trustworthy as it scales across departments and partner ecosystems.

Data Management and Governance in Lakehouses

Effective data management in a lakehouse hinges on establishing clear data contracts, standardized schemas, and robust transactional semantics. ACID transactions across data lakes allow multiple teams to read and write concurrently without stepping on each other’s work, which is essential for production analytics and operational dashboards. Time travel, rollback capabilities, and snapshot isolation enable reliable audits and reproducibility of analyses, critical in regulated industries where audits are routine and evidence trails are required.

Governance in a lakehouse extends beyond traditional access control. It encompasses data lineage that traces how data evolves from raw ingestion through transformations to analytical outputs, ensuring accountability and traceability. Data quality checks, data quality metrics, and policy enforcement at ingestion points help prevent dirty data from propagating into downstream workflows. For healthcare data, privacy-preserving practices—such as data masking, differential privacy, and access controls aligned with role-based permissions—are integral to maintaining patient trust while enabling actionable analytics.

Paragraphs here emphasize how the lakehouse supports both explorative analytics and governed production workloads. A well-architected governance model provides end-to-end visibility into data provenance, ensures consistent definitions across teams, and supports metadata-driven automation that reduces manual overhead. This cohesion enables organizations to respond to regulatory changes, audit requests, and new analytical questions with agility rather than friction.

Use Cases and Industry Applications

A lakehouse supports a wide range of analytics, from batch BI to real-time streaming, making it relevant across sectors but especially impactful in healthcare. The following use cases illustrate how the architecture translates into tangible business value:

- Integrated patient analytics that combine electronic health records, claims data, and wearable device streams to identify at-risk populations and optimize care pathways.

- Real-time monitoring dashboards for hospital operations, bed occupancy, and supply chain logistics, enabling timely interventions and improved patient experiences.

- Population health studies that merge clinical data with social determinants of health, enabling deeper insights and more effective public health programs.

- Regulatory reporting and audit-ready data products that satisfy payer and regulator requirements with transparent lineage and reproducible results.

- Research data collaboration platforms that share de-identified, governed datasets with researchers across institutions, accelerating discovery while preserving privacy.

Across these use cases, the lakehouse approach reduces the friction between data scientists experimenting with new models and business users relying on stable, governed data assets. In environments where big data in healthcare involves enormous volumes of imaging, genomics, and clinical observations, the lakehouse provides a scalable foundation for both exploratory research and mission-critical operations without sacrificing governance or performance.

Challenges and Trade-offs

As with any architectural paradigm, lakehouses come with trade-offs and implementation challenges. The most common concerns include data quality management across ingestion pipelines, ensuring consistent semantics when data originates from heterogeneous sources, and balancing cost with performance as workloads shift between batch and streaming modes. While open formats and unified catalogs reduce vendor lock-in, organizations must still invest in robust operational tooling, monitoring, and automation to prevent subtle inconsistencies from creeping into analytics results.

A candid perspective is that a lakehouse is not a silver bullet. It requires disciplined data governance, clear ownership, and a culture that values metadata as a first-class citizen. In healthcare, the sensitivity of patient data amplifies the importance of security and compliance; misconfigurations or gaps in access controls can have serious consequences. A thoughtful implementation includes ongoing validation of data quality, regular audits of data lineage, and well-defined SLAs for data availability and freshness. As teams mature their practices, they typically gain significant ROI through faster time-to-insight, reduced ETL toil, and more reliable analytics outcomes.

“A lakehouse provides the plumbing for scalable analytics, but the real value comes from the governance, discipline, and people who design and trust the data.”

Getting Started: Practical Steps to Implement a Lakehouse

Embarking on a lakehouse journey starts with a pragmatic plan that aligns data assets with business priorities. A recommended approach emphasizes incremental value, strong governance, and stakeholder buy-in. Begin by cataloging data assets and prioritizing use cases that will deliver measurable impact within a short horizon. This helps validate architectural choices, data quality practices, and access controls before expanding to a broader set of data domains.

- Inventory data assets and map them to concrete business outcomes. Identify data sources, ingestion frequency, quality gaps, and privacy considerations.

- Choose an architectural approach and platform that fit the organization’s scale, security posture, and cloud strategy. Consider interoperability with existing tools, teams, and data contracts.

- Design a unified data model with clearly defined schemas, business terms, and semantic consistency. Establish data catalogs, lineage capture, and standard transformation patterns.

- Implement governance and security foundations early. Enforce access controls, encryption, masking, and data retention policies; plan for regulatory audits and quality checks.

- Launch a focused pilot that demonstrates end-to-end data flow from ingestion to analytics with measurable outcomes. Use the pilot to refine SLAs, performance targets, and operational playbooks.

As you scale, maintain discipline around data contracts, metadata quality, and cross-functional collaboration. Invest in training so data engineers, data scientists, and business analysts share a common vocabulary and governance expectations. In healthcare settings, involve compliance teams early to align on privacy, consent, and audit requirements, ensuring that the lakehouse remains a trusted repository for patient data and research data alike.

Operational and Organizational Implications

Beyond the technical layers, a successful lakehouse program depends on organizational readiness. Teams must adopt a shared data governance model, define clear roles (data stewards, data engineers, data scientists, security leads), and establish operating procedures for change management, incident response, and continuous improvement. The data platform becomes a collaboration backbone, where business analysts rely on reliable data products, data engineers maintain robust pipelines, and security teams enforce policy compliance.

Healthcare organizations particularly benefit from formal data literacy programs that help clinicians and researchers understand data semantics, provenance, and limitations. A culture of data quality, reproducibility, and transparent governance helps build trust across departments and with external partners. Practically, this means investing in documentation, training, and automation that reduces manual effort, while providing auditable evidence of data handling practices for regulators and stakeholders.

FAQ

What is a data lakehouse?

A data lakehouse is a unified data architecture that combines the scalable storage of a data lake with the governance, performance, and transactional features of a data warehouse. It enables diverse workloads—batch analytics, streaming analytics, and data science—against a single source of truth, while supporting open formats and metadata-driven governance to maintain data quality and accessibility.

How does a lakehouse differ from a data lake or data warehouse?

A data lake prioritizes storage and raw data fidelity, often sacrificing immediate query performance and strong governance. A data warehouse emphasizes structured, pre-modeled data with strict schemas and high-performance queries but typically requires data to be transformed before loading. A lakehouse blends these strengths: it stores raw and refined data in a lake-like layer, adds transactional guarantees and a robust metadata layer, and provides warehouse-like query acceleration, enabling flexible, governed analytics on diverse data.

What workloads are well-suited for a lakehouse?

Lakehouses excel at workloads that demand both breadth and speed: exploratory data science, real-time dashboards, and production analytics across heterogeneous data sources. They are especially effective for healthcare scenarios that involve integrating EHRs, imaging, claims, and sensor data, while maintaining governance and privacy controls. Time-to-insight is improved because data does not need to be moved between siloed systems for each new analysis.

What challenges should organizations expect when implementing a lakehouse?

Common challenges include establishing consistent data semantics across sources, maintaining data quality at scale, managing costs associated with compute and storage, and implementing robust governance for privacy and compliance. Organizational challenges—such as aligning teams, defining ownership, and cultivating data literacy—also influence success. A thoughtful approach emphasizes incremental delivery, automation, and ongoing collaboration between IT, analytics, and business stakeholders.

How does data governance adapt to lakehouse architectures, particularly in healthcare?

Governance in a lakehouse relies on a comprehensive metadata catalog, lineage tracking, and policy-driven access control. In healthcare, governance must balance openness for research and analytics with strict privacy protections. This includes data masking, role-based access, auditing, and retention policies that align with regulatory requirements. The lakehouse provides the technical foundation, while governance processes and risk management practices ensure that data remains trustworthy, lawful, and ethically used.