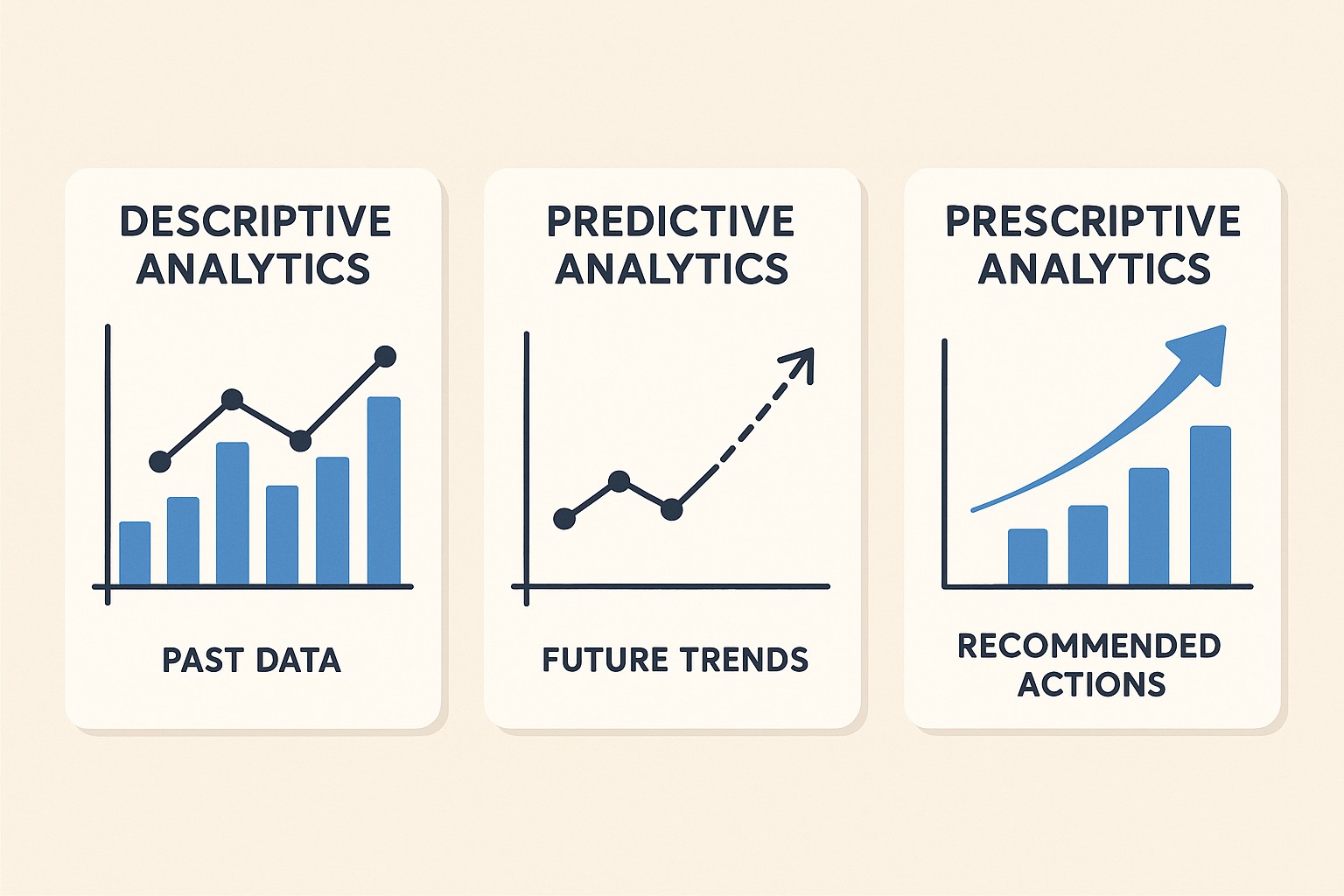

Descriptive vs Predictive vs Prescriptive Analytics

Descriptive Analytics: Understanding the Past to Inform the Present

Descriptive analytics focuses on summarizing historical data to reveal what has happened in the business. It answers questions like what happened, when it happened, where activities occurred, and how much impact was observed. The aim is to convert raw data into clear, actionable insights by transforming transactions, events, and interactions into a coherent picture of past performance. In practical terms, descriptive analytics underpins routine reporting and serves as the foundation for more advanced analytics by establishing the baseline state of the organization.

In a business context, descriptive analytics is often the first step in a data maturity journey. It translates data from disparate systems into digestible metrics and visuals (dashboards, scorecards, and executive summaries) that teams can reference in daily operations and strategic planning. It helps leaders answer questions such as where sales were strongest last quarter, which product lines generated the most margin, or which regions underperformed relative to targets. By highlighting anomalies, seasonality, and trend reversals, descriptive analytics provides situational awareness that informs resource allocation and governance decisions.

To deliver meaningful descriptive insights, organizations rely on a mix of data sources, aggregation techniques, and visualization practices. Typical data sources include transactional systems such as ERP, customer relationship management (CRM) platforms, point-of-sale records, website analytics, and operational logs from manufacturing or logistics. Common outputs are KPI dashboards, trend charts, variance analyses, and drill-down reports that let users explore performance at multiple levels of granularity. For teams responsible for data governance, descriptive analytics also surfaces data quality issues, such as missing values, inconsistent units, or duplicate records, that must be resolved before deeper analyses can be trusted.

- Data sources: ERP systems, CRM platforms, e-commerce logs, supply chain and logistics systems, customer support tickets, website and app analytics.

- Outputs: dashboards, scorecards, period-over-period reports, trend analyses, exception reports.

- Techniques: data aggregation, basic statistics (averages, medians, distributions), segmentation, and visualization best practices to reveal patterns.

- Decision relevance: baseline measurement, performance monitoring, and governance oversight.

- Limitations to manage: historical focus, limited forward-looking guidance, and potential overreliance on surface-level trends.
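The aggregation and period-over-period techniques listed above can be sketched in a few lines. This is a minimal illustration using only the Python standard library; the `SALES` records, region names, and the helper names `summarize` and `period_over_period` are hypothetical and chosen for the example, not taken from any particular reporting tool.

```python
from collections import defaultdict
from statistics import mean, median

# Hypothetical transaction records: (region, quarter, revenue).
SALES = [
    ("North", "Q1", 120.0), ("North", "Q1", 80.0),
    ("North", "Q2", 150.0), ("North", "Q2", 90.0),
    ("South", "Q1", 200.0), ("South", "Q1", 100.0),
    ("South", "Q2", 130.0), ("South", "Q2", 110.0),
]

def summarize(records):
    """Aggregate revenue by (region, quarter): total, mean, and median."""
    groups = defaultdict(list)
    for region, quarter, revenue in records:
        groups[(region, quarter)].append(revenue)
    return {
        key: {"total": sum(vals), "mean": mean(vals), "median": median(vals)}
        for key, vals in groups.items()
    }

def period_over_period(summary, region, prev, curr):
    """Percent change in total revenue between two periods for one region."""
    before = summary[(region, prev)]["total"]
    after = summary[(region, curr)]["total"]
    return (after - before) / before * 100.0

summary = summarize(SALES)
for region in ("North", "South"):
    change = period_over_period(summary, region, "Q1", "Q2")
    print(f"{region}: Q1->Q2 change {change:+.1f}%")
```

With this sample data, North grows by 20% and South declines by 20%, which is the kind of exception a descriptive report would flag for follow-up without explaining why it happened.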

Predictive Analytics: Forecasting What Could Happen

Predictive analytics moves beyond describing past performance to estimating future outcomes. It leverages historical data, patterns, and statistical models to forecast likely events, quantify risk, and assign probability to different scenarios. The core idea is to translate historical relationships into predictions that help decision-makers anticipate demand, identify at-risk customers, optimize inventory, and plan capacity. While descriptive analytics tells you what has occurred, predictive analytics suggests what is likely to occur next, along with confidence measures that quantify uncertainty.

Businesses use predictive analytics to guide planning and prioritization. For example, a consumer goods company may forecast demand at the SKU or store level to prevent stockouts while minimizing excess inventory. A subscription business might estimate churn propensity to target retention campaigns more efficiently. In manufacturing and logistics, predictive models forecast equipment failure or delivery delays, enabling proactive maintenance and route optimization. The practice also involves evaluating model performance with metrics suited to the task: mean absolute error or root mean squared error for numeric forecasts, and precision, recall, or area under the ROC curve for classification models. It requires ongoing monitoring to detect drift and recalibrate models as conditions change.

Achieving reliable predictions hinges on data quality, feature engineering, and appropriate modeling techniques. Analysts select methods ranging from traditional statistical approaches to modern machine learning, balancing accuracy, interpretability, and operational constraints. It is common to start with simpler models to establish a baseline and gradually introduce more complex algorithms as data volume and business needs grow. The end goal is not merely to predict a single number but to provide actionable risk assessments and probabilistic insights that inform risk-managed decisions and strategic direction.
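The idea of starting from a simple baseline can be made concrete with a small sketch. The example below compares a naive "repeat the last value" forecast against a least-squares linear trend on a held-out tail of the series, scoring both with MAE and RMSE. The `demand` values are invented for illustration, and the function names are assumptions of this sketch rather than any library's API.

```python
import math

# Hypothetical monthly demand series (illustrative values, not real data).
demand = [100, 104, 109, 113, 118, 121, 127, 130, 136, 139]
train, holdout = demand[:7], demand[7:]  # hold out the last 3 months

def fit_line(ys):
    """Ordinary least squares of ys on time index 0..n-1.

    Returns (intercept, slope).
    """
    n = len(ys)
    xs = list(range(n))
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope

def mae(actual, predicted):
    """Mean absolute error."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)

def rmse(actual, predicted):
    """Root mean squared error."""
    return math.sqrt(
        sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)
    )

# Baseline: repeat the last observed training value for every future period.
naive = [train[-1]] * len(holdout)

# Candidate: extrapolate the fitted trend line past the training window.
intercept, slope = fit_line(train)
trend = [intercept + slope * (len(train) + h) for h in range(len(holdout))]

for name, forecast in (("naive", naive), ("linear trend", trend)):
    print(f"{name}: MAE={mae(holdout, forecast):.2f} "
          f"RMSE={rmse(holdout, forecast):.2f}")
```

On this toy series the trend model beats the naive baseline on both metrics, but the point of the comparison is the procedure: a more complex model earns its place only if it improves on the baseline using data it was not fitted on.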

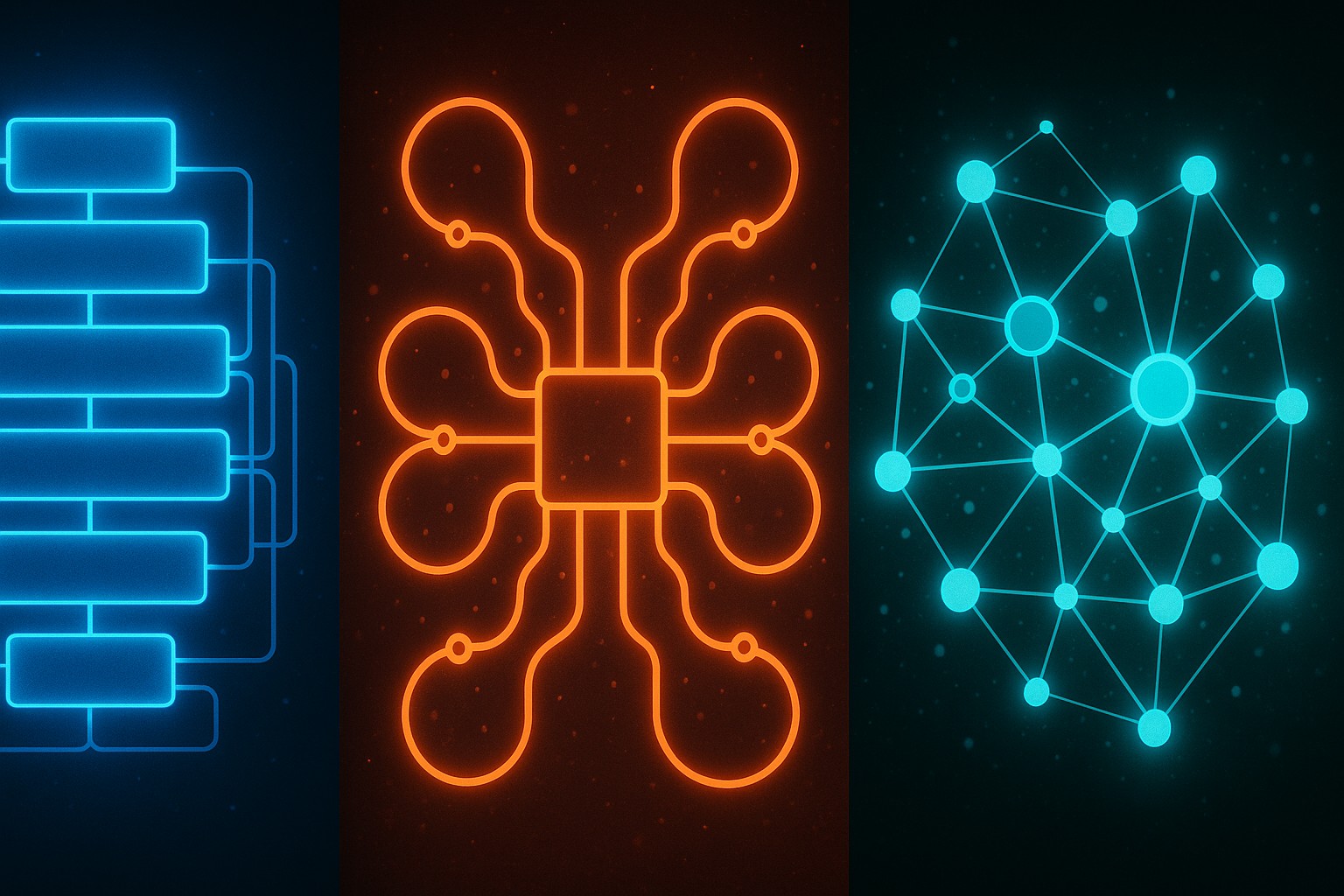

- Common modeling approaches: regression analysis (linear, logistic), time-series methods (ARIMA, exponential smoothing, Prophet), and machine learning algorithms (random forests, gradient boosting, neural networks).

- Evaluation metrics: forecast accuracy (MAE, RMSE), directional accuracy, and classification metrics for propensity models (AUC, precision, recall).

- Data requirements: historical records with relevant features, seasonality signals, and process-related covariates; data quality and timeliness are critical.

- Business applications: demand forecasting, customer attrition prediction, risk scoring, yield and pricing forecasts, operational capacity planning.

- Limitations to manage: overfitting, data leakage, feature drift, and the need for explainability to gain stakeholder trust in decisions.
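For propensity models, the classification metrics named above can be computed directly from labels and scores. The following is a minimal sketch with made-up churn labels and model scores; `precision_recall` and `auc` are illustrative helper names, and the AUC here uses the pairwise-ranking definition (the probability that a randomly chosen positive outscores a randomly chosen negative), which is practical only for small samples.

```python
# Hypothetical churn labels (1 = churned) and model scores, for illustration.
labels = [1, 0, 1, 1, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1]

def precision_recall(labels, scores, threshold):
    """Precision and recall when scores >= threshold are flagged as positive."""
    predicted = [s >= threshold for s in scores]
    tp = sum(1 for y, p in zip(labels, predicted) if y == 1 and p)
    fp = sum(1 for y, p in zip(labels, predicted) if y == 0 and p)
    fn = sum(1 for y, p in zip(labels, predicted) if y == 1 and not p)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

def auc(labels, scores):
    """Area under the ROC curve via pairwise comparison (ties count as 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos for n in neg
    )
    return wins / (len(pos) * len(neg))

precision, recall = precision_recall(labels, scores, threshold=0.5)
print(f"precision={precision:.2f} recall={recall:.2f} AUC={auc(labels, scores):.4f}")
```

Precision and recall depend on the chosen threshold, while AUC summarizes ranking quality across all thresholds, which is why propensity models are often reported with both.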

Prescriptive Analytics: Recommending Actions Based on Predictions

Prescriptive analytics goes a step further by not only forecasting what might happen but also recommending actions that optimize outcomes given a set of objectives and constraints. It combines predictive outputs with optimization, simulation, and decision-analysis techniques to identify the best course of action under uncertainty. The essence of prescriptive analytics is to translate data-derived insights into concrete decisions, such as how to price a product, how to allocate limited resources, or which marketing strategies to deploy in a given market condition. In other words, it answers not just “what could happen” but “what should we do about it.”

In practice, prescriptive analytics is most valuable where decision rights, constraints, and trade-offs can be formally modeled. For supply chain teams, it can yield recommended production and distribution plans that minimize cost while meeting service levels. In pricing and revenue management, it can propose dynamic price points that maximize margin while staying competitive. For workforce management, it can suggest shift schedules and staffing levels that balance labor costs with service quality. The effectiveness of prescriptive analytics relies on the integration of accurate predictive signals, a well-defined objective function, and robust optimization methods, all supported by governance processes that ensure decisions align with strategic priorities and ethical standards.

Key components of a prescriptive analytics workflow include formulating the decision problem, selecting appropriate data and predictive inputs, defining the objective (what to optimize) and constraints (what cannot be violated), choosing an optimization or simulation method, and generating actionable recommendations along with scenario analysis. Implementations often require close collaboration between data scientists, operations researchers, domain experts, and decision-makers to ensure models reflect real-world dynamics and practical feasibility. Because prescriptive insights can translate into high-stakes actions, organizations also embed risk controls, monitoring, and feedback loops to validate outcomes and refine models over time.

- Key application areas: dynamic pricing, inventory optimization, production planning, routing and logistics optimization, workforce scheduling, and capacity planning.

- Modeling components: objective function (e.g., minimize cost, maximize profit), constraints (capacity, service levels, regulatory limits), and decision variables (quantities, assignments, routes).

- Techniques: optimization (linear, integer, nonlinear), stochastic programming, scenario analysis, and Monte Carlo simulation to capture uncertainty.

- Data & inputs: predictive signals (from predictive analytics), current state variables, costs, and constraints that reflect business realities.

- Risks and governance: model risk, data privacy considerations, transparency of recommendations, and alignment with policy and ethics.
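The modeling components above (objective function, constraints, decision variables) can be illustrated with a toy production-planning problem. This sketch solves it by brute-force enumeration of integer plans, which is only workable for very small problems; a real deployment would more likely use a dedicated linear or integer programming solver. All numbers, product names, and the `DEMAND_FORECAST` caps (standing in for the output of a predictive model) are hypothetical.

```python
from itertools import product

# Hypothetical inputs: profit and resource usage per unit of each product.
PROFIT = {"A": 30, "B": 50}
MACHINE_HOURS = {"A": 1, "B": 2}
LABOR_HOURS = {"A": 2, "B": 1}
MACHINE_CAPACITY = 40
LABOR_CAPACITY = 50
# Assumed demand caps, standing in for a predictive model's forecast.
DEMAND_FORECAST = {"A": 25, "B": 15}

def profit(a, b):
    """Objective function: total profit for producing a units of A, b of B."""
    return PROFIT["A"] * a + PROFIT["B"] * b

def feasible(a, b):
    """Constraints: machine and labor capacity must not be exceeded."""
    machine = MACHINE_HOURS["A"] * a + MACHINE_HOURS["B"] * b
    labor = LABOR_HOURS["A"] * a + LABOR_HOURS["B"] * b
    return machine <= MACHINE_CAPACITY and labor <= LABOR_CAPACITY

def best_plan():
    """Enumerate every integer plan within forecast demand; keep the best."""
    candidates = product(
        range(DEMAND_FORECAST["A"] + 1),
        range(DEMAND_FORECAST["B"] + 1),
    )
    plans = [(a, b) for a, b in candidates if feasible(a, b)]
    return max(plans, key=lambda plan: profit(*plan))

a, b = best_plan()
print(f"Produce {a} of A and {b} of B for a profit of {profit(a, b)}")
```

The recommended plan sits where both capacity constraints bind, which shows the trade-off a prescriptive model makes explicit: producing more of the higher-margin product B would consume machine hours faster than the extra margin justifies.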

Integrating the Three Analytics Levels in Practice

Most advanced analytics programs do not operate in silos. The strongest value comes from an integrated approach where descriptive insights establish the baseline, predictive models quantify likely futures, and prescriptive engines translate those futures into concrete actions. In practical terms, this integration begins with data governance and data quality management to ensure consistent, timely inputs across stages. It also requires alignment with business objectives, so that analytics outputs drive decisions that improve the outcomes shareholders and customers care about.

Successful integration also demands a clear delineation of roles and decision rights. Data engineers ensure data pipelines are reliable and scalable, data scientists develop predictive and prescriptive models, and business analysts translate model outputs into decision-ready recommendations. Leaders should build feedback loops that monitor outcomes, capture learnings, and recalibrate models as the operating environment evolves. When done well, integration reduces reaction times, improves decision accuracy, and creates a shared mental model across departments about how data informs strategy and execution.

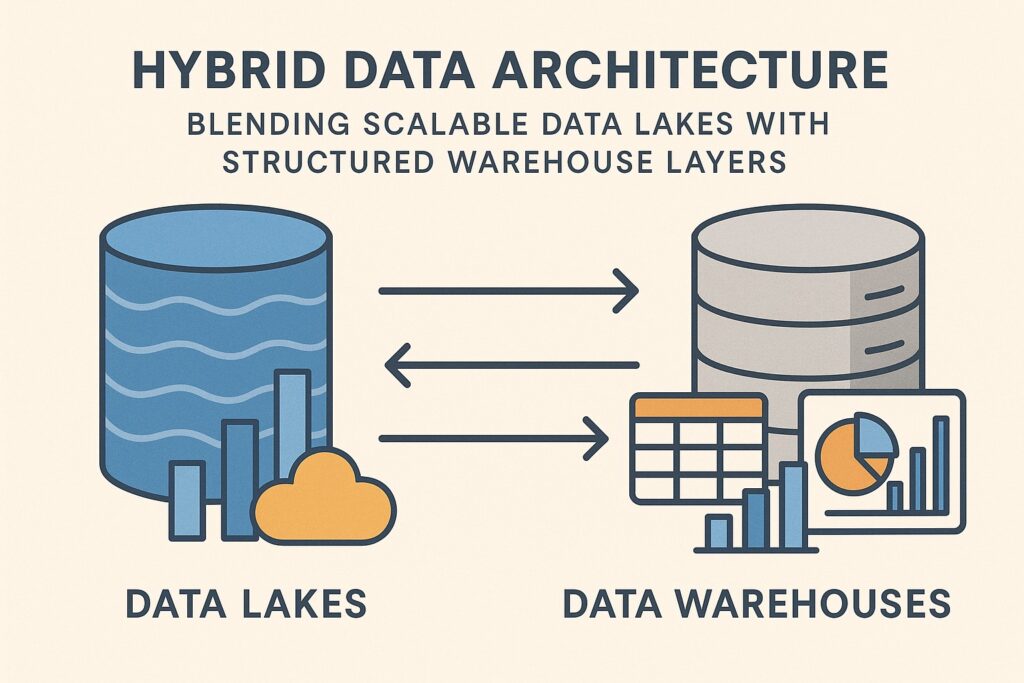

From a technical perspective, integration means adopting a layered architecture that preserves interpretability and traceability. The curated data behind descriptive dashboards feeds predictive models that continually refresh forecasts, which in turn feed prescriptive optimization or simulation modules. This flow benefits from modular data governance, standardized performance metrics, and unified documentation so stakeholders can audit assumptions, reproduce analyses, and explain reasoning to non-technical executives. Finally, organizations should consider the human element: even the most sophisticated analytics are most effective when paired with change management, training, and a culture that values data-informed experimentation and responsible decision-making.
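As a rough sketch of that layered flow, the example below chains three small functions, one per analytics level, and records each step in a trace so the final recommendation can be audited. The function names, the reorder rule, and all numbers are assumptions made for illustration; real layers would be far richer than a moving average and a one-line stocking rule.

```python
import math
from dataclasses import dataclass, field
from statistics import mean

@dataclass
class Decision:
    """Final recommendation plus the trace needed to audit how it was made."""
    baseline: float
    forecast: float
    order_quantity: int
    trace: list = field(default_factory=list)

def describe(history):
    """Descriptive layer: average demand over the last three periods."""
    return mean(history[-3:])

def predict(history):
    """Predictive layer: last value plus the average recent step change."""
    steps = [b - a for a, b in zip(history[-4:-1], history[-3:])]
    return history[-1] + mean(steps)

def prescribe(forecast, on_hand, safety_stock):
    """Prescriptive layer: order enough to cover forecast plus safety stock."""
    return max(0, math.ceil(forecast + safety_stock - on_hand))

def run_pipeline(history, on_hand, safety_stock):
    baseline = describe(history)
    forecast = predict(history)
    quantity = prescribe(forecast, on_hand, safety_stock)
    trace = [
        f"baseline (3-period mean) = {baseline}",
        f"forecast (last value + mean step) = {forecast}",
        f"order = ceil({forecast} + {safety_stock} - {on_hand}) = {quantity}",
    ]
    return Decision(baseline, forecast, quantity, trace)

# Hypothetical demand history and inventory position.
decision = run_pipeline([90, 100, 110, 120], on_hand=40, safety_stock=20)
for line in decision.trace:
    print(line)
```

Keeping each layer as a separate function with an explicit trace is one way to preserve the traceability described above: any layer can be swapped for a better model without changing how the recommendation is explained.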

FAQ

What is the key difference between descriptive, predictive, and prescriptive analytics?

Descriptive analytics explains what happened by summarizing historical data, predictive analytics estimates what could happen in the future, and prescriptive analytics recommends specific actions to optimize outcomes given objectives and constraints. Together, they form a progression from understanding the past to shaping the future with informed decisions.

How should a company start implementing analytics in a practical way?

Begin with descriptive analytics to establish reliable dashboards and baseline KPIs, then introduce predictive models to forecast key metrics. Once confidence grows, pilot prescriptive use cases in low-risk areas to test optimization or decision support. Throughout, invest in data governance, ensure cross-functional sponsorship, and foster a culture of experimentation and continuous improvement.

What data quality considerations are most important for analytics?

Completeness, accuracy, consistency, timeliness, and provenance are critical. Poor data quality can propagate through all levels of analytics, leading to biased forecasts, inaccurate recommendations, and eroded trust. Implement data cleansing, lineage tracking, and validation checks as ongoing disciplines rather than one-off projects.
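Validation checks of this kind can be automated and run on every data load. The following is a minimal sketch covering completeness, duplicate detection, a simple accuracy rule, and timeliness; the record layout, the `REQUIRED` field list, and the 90-day freshness window are all hypothetical choices for the example.

```python
from datetime import date

# Hypothetical required fields for an order record.
REQUIRED = ("order_id", "amount", "order_date")

# Illustrative records with deliberately planted quality problems.
records = [
    {"order_id": 1, "amount": 25.0, "order_date": date(2024, 1, 5)},
    {"order_id": 2, "amount": None, "order_date": date(2024, 1, 6)},
    {"order_id": 2, "amount": 40.0, "order_date": date(2024, 1, 6)},
    {"order_id": 3, "amount": -5.0, "order_date": date(2023, 6, 1)},
]

def quality_report(rows, as_of, max_age_days):
    """Count rows failing completeness, uniqueness, validity, and timeliness."""
    # Completeness: any required field missing or null.
    missing = sum(
        1 for r in rows if any(r.get(f) is None for f in REQUIRED)
    )
    # Consistency: repeated order_id values after the first occurrence.
    seen, duplicates = set(), 0
    for r in rows:
        if r.get("order_id") in seen:
            duplicates += 1
        seen.add(r.get("order_id"))
    # Accuracy (simple rule): amounts must not be negative.
    invalid = sum(
        1 for r in rows if r.get("amount") is not None and r["amount"] < 0
    )
    # Timeliness: records older than the allowed window.
    stale = sum(
        1 for r in rows
        if r.get("order_date") is not None
        and (as_of - r["order_date"]).days > max_age_days
    )
    return {
        "rows": len(rows),
        "missing": missing,
        "duplicates": duplicates,
        "invalid": invalid,
        "stale": stale,
    }

report = quality_report(records, as_of=date(2024, 1, 31), max_age_days=90)
print(report)
```

Tracking these counts over time, rather than checking them once, is what turns data quality into the ongoing discipline described above.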

Which metrics indicate a successful analytics program?

Success shows up as improved decision speed, higher forecast accuracy, better alignment between recommended actions and business outcomes, and measurable ROI from analytics-driven initiatives. In prescriptive contexts, tracking the realized value of recommended actions (cost savings, revenue gains, or service level improvements) is essential to justify ongoing investment.

How do governance and ethics influence analytics deployments?

Governance ensures data integrity, model transparency, and accountability for decisions. Ethical considerations include fairness, privacy, and avoiding biased outcomes. A robust framework defines who owns data, how models are validated, how explanations are provided to stakeholders, and how decisions are audited to prevent unintended consequences.